
r5310406 Digital Communication

Uploaded by sivabharathamurthy

Explain µ-law and A-law companding technique. Compare delta modulation with pulse code modulation technique. Give the comparisons between BFSK and PSK schemes.

Copyright: © Attribution Non-Commercial (BY-NC)


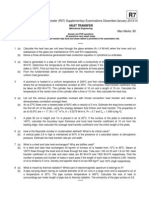

Code No: R5310406 R5

III B.Tech I Semester (R05) Supplementary Examinations, November 2010

DIGITAL COMMUNICATION

(Electronics & Communication Engineering)

Time: 3 hours Max Marks: 80

Answer any FIVE Questions

All Questions carry equal marks


1. (a) Explain the µ-law and A-law companding techniques.

(b) Approximate the µ-law curve with µ = 100 by two linear segments, one for 0 < x ≤ 0.1 and the other for 0.1 < x ≤ 1, where x(t) is the input signal. Calculate S/Nq for S = -40 and -10. [8+8]
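A minimal sketch of the compressor laws behind Question 1, assuming a normalised input |x| ≤ 1. It uses µ = 255 and A = 87.6 as defaults (the values common in G.711-style telephony), plus the two-segment approximation from part (b) with µ = 100 and the knee at x = 0.1. The function names are illustrative only. For µ = 100 the inner segment has a slope of about 5.2 and the outer segment about 0.53. The S/Nq figures asked for depend on assumptions about the signal statistics, so they are not computed here.

```python
import math


def mu_law_compress(x, mu=255.0):
    """µ-law compressor: y = sgn(x) ln(1 + mu|x|) / ln(1 + mu), for |x| <= 1."""
    return math.copysign(math.log1p(mu * abs(x)) / math.log1p(mu), x)


def mu_law_expand(y, mu=255.0):
    """Inverse of mu_law_compress (the expander at the receiver)."""
    return math.copysign(math.expm1(abs(y) * math.log1p(mu)) / mu, y)


def a_law_compress(x, A=87.6):
    """A-law compressor: linear for |x| <= 1/A, logarithmic for 1/A < |x| <= 1."""
    ax = abs(x)
    if ax <= 1.0 / A:
        y = A * ax / (1.0 + math.log(A))
    else:
        y = (1.0 + math.log(A * ax)) / (1.0 + math.log(A))
    return math.copysign(y, x)


def two_segment_mu_law(x, mu=100.0, knee=0.1):
    """Piecewise-linear approximation of the µ-law curve: one straight line for
    0 < |x| <= knee and another for knee < |x| <= 1, meeting at (knee, c(knee))."""
    y_knee = mu_law_compress(knee, mu)
    ax = abs(x)
    if ax <= knee:
        y = ax * y_knee / knee                                 # inner (steep) segment
    else:
        y = y_knee + (ax - knee) * (1.0 - y_knee) / (1.0 - knee)  # outer segment
    return math.copysign(y, x)


if __name__ == "__main__":
    y_knee = mu_law_compress(0.1, 100.0)
    print("inner slope:", y_knee / 0.1)
    print("outer slope:", (1.0 - y_knee) / 0.9)
```

Both compressors are odd functions that map ±1 to ±1. The expander simply inverts the logarithm, so compress-then-expand returns the original sample (apart from the quantisation that would sit between them in a real PCM link).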

2. (a) Explain, with a neat block diagram, the operation of a continuously variable slope delta modulator (CVSD).

(b) Compare delta modulation with the pulse code modulation technique. [8+8]
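A minimal sketch contrasting linear delta modulation (DM) with a CVSD-style adaptive step, for Question 2. The run length of 3, growth factor 1.5, decay factor 0.9 and step limits are illustrative assumptions, not values from the paper or from any CVSD standard. On a steep ramp the fixed-step modulator falls behind (slope overload), and the adaptive step is meant to counter exactly that.

```python
def delta_modulate(samples, step):
    """Linear DM: one bit per sample, fixed step size."""
    approx, bits, recon = 0.0, [], []
    for x in samples:
        bit = 1 if x >= approx else 0        # 1-bit quantiser on the error sign
        approx += step if bit else -step     # accumulator (integrator)
        bits.append(bit)
        recon.append(approx)
    return bits, recon


def cvsd_modulate(samples, step_min, step_max, run=3, grow=1.5, decay=0.9):
    """CVSD-style DM: the step grows while the last `run` bits are identical
    (a sign of slope overload) and decays toward step_min otherwise."""
    approx, step, bits, recon = 0.0, step_min, [], []
    for x in samples:
        bit = 1 if x >= approx else 0
        bits.append(bit)
        if len(bits) >= run and len(set(bits[-run:])) == 1:
            step = min(step * grow, step_max)
        else:
            step = max(step * decay, step_min)
        approx += step if bit else -step
        recon.append(approx)
    return bits, recon


if __name__ == "__main__":
    ramp = [0.5 * n for n in range(40)]
    _, r_dm = delta_modulate(ramp, 0.1)
    _, r_cv = cvsd_modulate(ramp, 0.1, 2.0)
    print("max DM error:  ", max(abs(a - b) for a, b in zip(ramp, r_dm)))
    print("max CVSD error:", max(abs(a - b) for a, b in zip(ramp, r_cv)))
```

Both modulators send one bit per sample, whereas an n-bit PCM encoder sends n bits per sample. With a constant input the fixed-step DM output settles into the alternating 1010... idle pattern that gives granular noise.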

3. (a) Explain, with neat diagrams, the coherent BFSK transmitter and receiver. Also explain the signal space diagram for coherent BFSK systems.

(b) Compare the FSK and PSK schemes. [10+6]
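A minimal sketch for Question 3. It shows a coherent BFSK transmitter/receiver built from two orthogonal tones and correlators, together with the textbook bit-error formulas used to compare coherent BFSK with coherent BPSK. The tone frequencies (1 kHz and 2 kHz), the 16 kHz sampling rate and the 1 ms bit duration are assumptions chosen so that each tone completes a whole number of cycles per bit, which makes the tones orthogonal over a bit interval.

```python
import math


def bfsk_modulate(bits, f0=1000.0, f1=2000.0, fs=16000.0, tb=1e-3):
    """Transmitter: bit 0 -> cos(2*pi*f0*t), bit 1 -> cos(2*pi*f1*t) for one bit time."""
    n = int(round(fs * tb))
    out = []
    for b in bits:
        f = f1 if b else f0
        out.extend(math.cos(2 * math.pi * f * k / fs) for k in range(n))
    return out


def bfsk_demodulate(signal, f0=1000.0, f1=2000.0, fs=16000.0, tb=1e-3):
    """Coherent receiver: correlate each bit interval with both local tones, pick the larger."""
    n = int(round(fs * tb))
    bits = []
    for start in range(0, len(signal), n):
        chunk = signal[start:start + n]
        c0 = sum(s * math.cos(2 * math.pi * f0 * k / fs) for k, s in enumerate(chunk))
        c1 = sum(s * math.cos(2 * math.pi * f1 * k / fs) for k, s in enumerate(chunk))
        bits.append(1 if c1 > c0 else 0)
    return bits


def pe_coherent_bpsk(ebn0):
    """Coherent BPSK: Pe = 0.5 erfc(sqrt(Eb/N0)), ebn0 as a linear ratio."""
    return 0.5 * math.erfc(math.sqrt(ebn0))


def pe_coherent_bfsk(ebn0):
    """Coherent orthogonal BFSK: Pe = 0.5 erfc(sqrt(Eb/(2 N0)))."""
    return 0.5 * math.erfc(math.sqrt(ebn0 / 2.0))


def signal_distance_bfsk(eb):
    """Signal-space points (sqrt(Eb), 0) and (0, sqrt(Eb)) are sqrt(2 Eb) apart."""
    return math.sqrt(2.0 * eb)


def signal_distance_bpsk(eb):
    """Signal-space points +sqrt(Eb) and -sqrt(Eb) are 2 sqrt(Eb) apart."""
    return 2.0 * math.sqrt(eb)


if __name__ == "__main__":
    print(bfsk_demodulate(bfsk_modulate([1, 0, 1, 1, 0])))
    for db in (4, 8, 10):
        ebn0 = 10 ** (db / 10)
        print(f"{db} dB: BPSK {pe_coherent_bpsk(ebn0):.2e}, BFSK {pe_coherent_bfsk(ebn0):.2e}")
```

The squared signal-space distance of BPSK is twice that of orthogonal BFSK. As a result, BPSK reaches the same error probability with about 3 dB less Eb/N0, while BFSK needs two tones and so occupies more bandwidth.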

4. (a) Draw the block diagram of a duobinary PAM system and explain its operation.

(b) Mention the drawbacks of duobinary correlative-level coding, with neat waveforms. [10+6]
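A minimal sketch of duobinary (class 1 partial-response) coding for Question 4. It uses polar levels ±1, the sum c_k = a_k + a_{k-1}, and the usual precoder d_k = b_k XOR d_{k-1}. The reference bit d_0 = 0 is an assumption. The sketch illustrates the main drawback asked for in part (b): without precoding each decision reuses the previous one, so a single wrong decision propagates, whereas with precoding the decisions are independent.

```python
def precode(bits, d0=0):
    """Precoder d_k = b_k XOR d_{k-1}; returns [d0, d1, ..., dN]."""
    d = [d0]
    for b in bits:
        d.append(b ^ d[-1])
    return d


def to_levels(bits):
    """Polar mapping 0 -> -1, 1 -> +1."""
    return [2 * b - 1 for b in bits]


def duobinary_encode(levels):
    """c_k = a_k + a_{k-1}; output levels are -2, 0, +2 (levels[0] is the reference)."""
    return [levels[k] + levels[k - 1] for k in range(1, len(levels))]


def decode_precoded(c):
    """With precoding: |c_k| < 1 -> bit 1, otherwise bit 0 (no memory needed)."""
    return [1 if abs(ck) < 1 else 0 for ck in c]


def decode_without_precoding(c, a0):
    """Without precoding: estimate a_k = c_k - a_{k-1}, reusing the previous
    decision, so one wrong decision corrupts the ones that follow."""
    out, prev = [], a0
    for ck in c:
        ak = 1 if ck - prev > 0 else -1
        out.append(1 if ak > 0 else 0)
        prev = ak
    return out


if __name__ == "__main__":
    bits = [0, 0, 1, 0, 1, 1, 0]
    c_pre = duobinary_encode(to_levels(precode(bits)))
    c_raw = duobinary_encode([-1] + to_levels(bits))
    print(decode_precoded(c_pre), decode_without_precoding(c_raw, -1))
```

Corrupting one received level, for example forcing c_1 from -2 to 0, yields one bit error in the precoded decoder but several in the decoder without precoding.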

5. One experiment has four mutually exclusive outcomes Aj, j = 1, 2, 3, 4, and a second experiment has three mutually exclusive outcomes Bj, j = 1, 2, 3. The joint probabilities P(Ai, Bj) are

P(A1, B1) = 0.10   P(A1, B2) = 0.08   P(A1, B3) = 0.13

P(A2, B1) = 0.05   P(A2, B2) = 0.03   P(A2, B3) = 0.09

P(A3, B1) = 0.11   P(A3, B2) = 0.04   P(A3, B3) = 0.06

Taking Ai, i = 1, 2, 3, 4, as the outcomes of experiment A, determine the average mutual information I(B; A) from the given joint probabilities P(Ai, Bj). [16]
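A minimal sketch of the calculation in Question 5: I(B; A) = Σ P(Ai, Bj) log2[P(Ai, Bj) / (P(Ai) P(Bj))], summed over all i and j, with the marginals obtained as row and column sums of the joint table. The question states four outcomes Aj, yet the rows printed cover only A1 to A3 and sum to 0.69. For that reason the function below refuses a table that does not sum to 1, and it is exercised on hypothetical tables rather than on the paper's data.

```python
from math import log2


def mutual_information(joint):
    """Average mutual information I(B;A) in bits.

    `joint` is a list of rows: one row per outcome Ai, one column per outcome Bj.
    """
    total = sum(sum(row) for row in joint)
    if abs(total - 1.0) > 1e-9:
        raise ValueError(f"joint probabilities sum to {total:.2f}, not 1")
    p_a = [sum(row) for row in joint]          # marginals P(Ai)
    p_b = [sum(col) for col in zip(*joint)]    # marginals P(Bj)
    info = 0.0
    for i, row in enumerate(joint):
        for j, p in enumerate(row):
            if p > 0:                          # terms with P = 0 contribute 0
                info += p * log2(p / (p_a[i] * p_b[j]))
    return info


if __name__ == "__main__":
    print(mutual_information([[0.25, 0.25], [0.25, 0.25]]))  # independent outcomes
    print(mutual_information([[0.5, 0.0], [0.0, 0.5]]))      # B determines A
```

Because the expression is symmetric in A and B, the same routine also gives I(A; B).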

6. (a) Define the channel capacity in terms of the average signal power and noise power.

(b) What are the two important implications of the Shannon-Hartley theorem? [16]
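A minimal sketch of the Shannon-Hartley law for Question 6: C = B log2(1 + S/N) bit/s, where S is the average signal power and N = N0·B is the noise power in bandwidth B. The function names are illustrative. The sketch reflects the two commonly cited implications: bandwidth and signal-to-noise ratio can be traded against each other, and even with unlimited bandwidth the capacity stays finite, approaching (S/N0) log2 e ≈ 1.44 S/N0.

```python
import math


def shannon_capacity(bandwidth_hz, snr):
    """C = B log2(1 + S/N) in bit/s; snr is a linear power ratio, not dB."""
    return bandwidth_hz * math.log1p(snr) / math.log(2)


def capacity_from_powers(bandwidth_hz, signal_power, noise_psd):
    """Same law with average signal power S and noise power N = N0 * B."""
    noise_power = noise_psd * bandwidth_hz
    return shannon_capacity(bandwidth_hz, signal_power / noise_power)


def infinite_bandwidth_limit(signal_power, noise_psd):
    """Limit of C as B -> infinity: (S / N0) log2(e), about 1.44 S/N0."""
    return signal_power / noise_psd / math.log(2)


if __name__ == "__main__":
    for b in (1e3, 1e4, 1e6):
        print(b, capacity_from_powers(b, 1.0, 1e-3))
    print("limit", infinite_bandwidth_limit(1.0, 1e-3))
```

`math.log1p` is used so that very small S/N values (the wide-bandwidth case) are evaluated without loss of precision.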

7. What is a parity-check matrix H? Explain the procedure to verify whether a code word C is generated by the matrix G. [16]
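A minimal sketch of the check in Question 7, assuming a systematic linear block code with G = [I_k | P] and H = [P^T | I_(n-k)]. With this choice G H^T = 0 (mod 2), so a word C belongs to the code generated by G exactly when its syndrome C H^T is all zeros. The (7,4) Hamming parity sub-matrix P is a hypothetical example, because the paper does not fix a particular code.

```python
# Hypothetical example: parity sub-matrix of a systematic (7,4) Hamming code.
P = [
    [1, 1, 0],
    [0, 1, 1],
    [1, 1, 1],
    [1, 0, 1],
]


def generator_matrix(p):
    """G = [I_k | P], a k x n matrix."""
    k = len(p)
    return [[1 if i == j else 0 for j in range(k)] + p[i] for i in range(k)]


def parity_check_matrix(p):
    """H = [P^T | I_(n-k)], an (n-k) x n matrix with G H^T = 0 (mod 2)."""
    k, r = len(p), len(p[0])
    return [[p[i][j] for i in range(k)] + [1 if j == m else 0 for m in range(r)]
            for j in range(r)]


def encode(msg, g):
    """Code word c = m G (mod 2)."""
    n = len(g[0])
    return [sum(m * row[col] for m, row in zip(msg, g)) % 2 for col in range(n)]


def syndrome(c, h):
    """Syndrome s = c H^T (mod 2)."""
    return [sum(ci * hi for ci, hi in zip(c, row)) % 2 for row in h]


def is_codeword(c, h):
    """C is generated by G exactly when its syndrome is all zeros."""
    return not any(syndrome(c, h))


if __name__ == "__main__":
    G, H = generator_matrix(P), parity_check_matrix(P)
    c = encode([1, 0, 1, 1], G)
    print(c, is_codeword(c, H))
    c[2] ^= 1                      # flip one bit
    print(c, syndrome(c, H))       # non-zero syndrome: not a code word
```

For a single-bit error the syndrome equals the column of H at the error position, which is how the same check is extended into single-error correction.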

8. (a) What is the importance of the Viterbi algorithm? Explain.

(b) Explain the Viterbi algorithm by considering an appropriate encoder. [16]
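A minimal sketch of hard-decision Viterbi decoding for Question 8. It assumes, as the "appropriate encoder", the common rate-1/2, constraint-length-3 convolutional code with generator taps 111 and 101 (octal 7, 5). The encoder is terminated with two zero tail bits so the trellis ends in state 0. At each step the decoder adds the Hamming-distance branch metric, compares the paths entering each state, and keeps only the survivor. This code has free distance 5, so any single channel error is corrected.

```python
# Hypothetical example encoder: rate 1/2, K = 3, generators 111 and 101 (octal 7, 5).
K = 3
GENERATORS = (0b111, 0b101)
N_STATES = 1 << (K - 1)  # state = the two previous input bits


def parity(x):
    return bin(x).count("1") % 2


def step(state, bit):
    """One encoder shift: return (next_state, output_bits) for one input bit."""
    reg = (bit << (K - 1)) | state  # newest bit in the most significant position
    outputs = tuple(parity(reg & g) for g in GENERATORS)
    return reg >> 1, outputs


def conv_encode(bits):
    """Encode, then append K-1 zero tail bits so the trellis ends in state 0."""
    state, out = 0, []
    for b in list(bits) + [0] * (K - 1):
        state, outputs = step(state, b)
        out.extend(outputs)
    return out


def viterbi_decode(received, n_bits):
    """Hard-decision Viterbi decoder with a Hamming-distance branch metric."""
    n_out = len(GENERATORS)
    inf = float("inf")
    metric = [0] + [inf] * (N_STATES - 1)  # encoder starts in state 0
    paths = [[] for _ in range(N_STATES)]
    for t in range(0, len(received), n_out):
        r = received[t:t + n_out]
        new_metric = [inf] * N_STATES
        new_paths = [[] for _ in range(N_STATES)]
        for s in range(N_STATES):
            if metric[s] == inf:
                continue  # state not reachable yet
            for b in (0, 1):
                ns, outputs = step(s, b)
                m = metric[s] + sum(o != x for o, x in zip(outputs, r))
                if m < new_metric[ns]:  # add-compare-select
                    new_metric[ns] = m
                    new_paths[ns] = paths[s] + [b]
        metric, paths = new_metric, new_paths
    return paths[0][:n_bits]  # tail bits force the best path into state 0


if __name__ == "__main__":
    msg = [1, 0, 1, 1]
    code = conv_encode(msg)
    code[3] ^= 1  # one channel error
    print(viterbi_decode(code, len(msg)))
```

For input 1011 the encoder produces 11 10 00 01 01 11, including the tail. The decoder keeps only one survivor per state instead of every possible path, which is what makes maximum-likelihood decoding of convolutional codes practical.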

