Statistics For Exam

Uploaded by Prochetto Da

STATISTICS

CHAPTER 1 : CORRELATION

1. Definition of correlation

Correlation analysis attempts to determine the degree of relationship between variables. In other words, correlation is an analysis of the co-variation between two or more variables. It expresses the relationship or inter-dependence of two sets of variables. One variable may be called the independent variable and the other the dependent variable. For instance, agricultural production depends on rainfall: rainfall increases agricultural production, but an increase in agricultural production has no impact on rainfall. Therefore, rainfall is the independent variable and production is the dependent variable.

2. Usefulness of correlation

Correlation is useful in the natural and social sciences. Here, however, we study the uses of correlation in business and economics.

1. Correlation is very useful to economists for studying the relationship between variables, such as price and quantity demanded.
2. Correlation analysis helps in measuring the degree of relationship between variables such as supply and demand, price and supply, income and expenditure, etc.
3. Correlation analysis helps to reduce the range of uncertainty of our predictions.
4. Correlation analysis is the basis for regression analysis.

3. Different Types of correlation

There are different types of correlation, but the important types are:

- Positive and negative
- Simple and multiple
- Complex (partial and total)
- Linear and non-linear

3.1 Positive and negative correlation

Positive and negative correlations depend upon the direction of change of the variables. If two variables tend to move together in the same direction, it is called positive or direct correlation. Example: rainfall and yield of crops, and price and supply, are examples of positive correlation.

If two variables tend to move together in opposite directions, it is called negative or inverse correlation. Example : Price and demand, yield of crops and price, etc., are examples of negative correlation.

3.2 Simple and multiple

When we study only two variables, the relationship is described as simple correlation. Example: supply of money and price level, demand and price, etc. In multiple correlation we study more than two variables simultaneously. Example: the relationship of price, demand and supply of a commodity.

3.3 Partial and total

When there is a multifactor relationship, but we study the correlation of only two variables and exclude the others, it is called partial correlation. For example, there is a relationship among price, demand and supply, but when we study the correlation between price and demand, excluding the impact of supply, it is a partial study of correlation. In total correlation, all the factors affecting the result are taken into account.

3.4 Linear and non-linear (curvilinear) correlation

If the ratio of change between two variables is constant (same), then there is a linear correlation between these variables. In such case, if we plot the variables on the graph, we get a straight line. The example below shows linear correlation between X and Y variables:

| X | Y |
| --- | --- |
| 5 | 4 |
| 10 | 8 |
| 15 | 12 |
| 20 | 16 |
| 25 | 20 |

In a non-linear (or curvilinear) correlation the ratio of change between two variables is not constant (same). The graph of non-linear (or curvilinear) relationship forms a curve.
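The constant-ratio test for linearity can be sketched in a few lines of Python. This is only an illustration: the function names `change_ratios` and `is_linear` and the curved series `Y_curved` are hypothetical and not part of the notes; the X and Y values are those of the linear example in section 3.4.

```python
def change_ratios(xs, ys):
    """Return (change in Y) / (change in X) for each pair of consecutive points.

    Assumes consecutive X values differ, otherwise the division fails.
    """
    points = list(zip(xs, ys))
    return [
        (y2 - y1) / (x2 - x1)
        for (x1, y1), (x2, y2) in zip(points, points[1:])
    ]


def is_linear(xs, ys, tol=1e-9):
    """True when every ratio of change equals the first one (within tol)."""
    ratios = change_ratios(xs, ys)
    return all(abs(r - ratios[0]) <= tol for r in ratios)


# Data from the linear example in section 3.4.
X = [5, 10, 15, 20, 25]
Y = [4, 8, 12, 16, 20]
linear_ratios = change_ratios(X, Y)   # every step is 4 / 5

# Hypothetical curvilinear series (Y = X squared) for contrast.
Y_curved = [x * x for x in X]

print(linear_ratios, is_linear(X, Y), is_linear(X, Y_curved))
```

For the tabulated data every ratio is the same, so the points lie on a straight line; for the squared series the ratio grows at each step, which is the signature of a curvilinear relationship.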

4. Methods of calculating correlation

The different methods of finding out the relationship between two variables are:

Graphic method

1. Scatter Diagram or Scattergram
2. Simple Graph or Correlogram

Mathematical method

- Karl Pearson's Coefficient of Correlation
- Spearman's Rank Coefficient of Correlation
- Coefficient of Concurrent Deviation
- Method of least squares

4.1 Graphic method for the calculation of coefficient of correlation

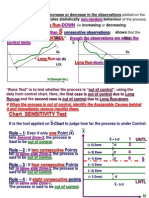

4.1.1 Scatter Diagram method

This is the simplest method of finding out whether there is any relationship between two variables. In this method, the given data are plotted on graph paper in the form of dots. Values of X are plotted on the horizontal axis and values of Y on the vertical axis. The resulting pattern of dots shows how the points are related.

[Diagrams 1 to 5: scatter diagrams (images not reproduced)]

4.1.2 Simple graph or Correlogram

If the values of the two variables X and Y are plotted on graph paper, we get two curves, one for X and another for Y. These two curves reveal whether or not the variables are related. If both curves move in the same direction, parallel to each other, upward or downward, the correlation between X and Y is said to be positive. On the other hand, if they move in opposite directions, the correlation is said to be negative.

4.2 Mathematical method for the calculation of coefficient of correlation

4.2.1 Karl Pearson's Coefficient of Correlation

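Karl Pearson, a statistician, suggested a mathematical method for measuring the magnitude of the linear relationship between two variables. It is the most widely used method, and the coefficient is denoted by the symbol r. The formulas for calculating the Pearsonian Coefficient of Correlation are:

$$ r = \frac{\sum xy}{N \sigma_x \sigma_y} \qquad (1) $$

$$ r = \frac{\sum xy}{\sqrt{\sum x^2 \cdot \sum y^2}} \qquad (2) $$

Where:

- $x = (X - \bar{X})$
- $y = (Y - \bar{Y})$
- $\sigma_x$ = Standard deviation of series X
- $\sigma_y$ = Standard deviation of series Y
- $N$ = Number of paired observations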

The value of the coefficient of correlation lies between +1 and -1. When r = +1, there is perfect positive correlation between the variables. When r = -1, there is perfect negative correlation between the variables. When r = 0, there is no linear relationship between the variables. In practice, the value usually falls strictly between these extremes. For example, when r = +0.8, there is positive correlation, because r is positive, and the magnitude of the correlation is 0.8.
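As an illustration, the two Pearson formulas can be sketched in Python as follows. The function names are hypothetical, the standard deviations in formula (1) are taken as population standard deviations (dividing by N), and the data are the X and Y values from the linear example in section 3.4 plus a reversed series added here for contrast.

```python
import math


def _deviation_sums(xs, ys):
    """Return N, sum(xy), sum(x^2), sum(y^2) for deviations from the means."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    dx = [v - mean_x for v in xs]
    dy = [v - mean_y for v in ys]
    sum_xy = sum(a * b for a, b in zip(dx, dy))
    sum_x2 = sum(a * a for a in dx)
    sum_y2 = sum(b * b for b in dy)
    return n, sum_xy, sum_x2, sum_y2


def pearson_r(xs, ys):
    """Formula (2): r = sum(xy) / sqrt(sum(x^2) * sum(y^2))."""
    _, sum_xy, sum_x2, sum_y2 = _deviation_sums(xs, ys)
    return sum_xy / math.sqrt(sum_x2 * sum_y2)


def pearson_r_sigma(xs, ys):
    """Formula (1): r = sum(xy) / (N * sigma_x * sigma_y), population sigmas."""
    n, sum_xy, sum_x2, sum_y2 = _deviation_sums(xs, ys)
    sigma_x = math.sqrt(sum_x2 / n)
    sigma_y = math.sqrt(sum_y2 / n)
    return sum_xy / (n * sigma_x * sigma_y)


X = [5, 10, 15, 20, 25]
Y = [4, 8, 12, 16, 20]       # moves with X: perfect positive correlation
Y_neg = [20, 16, 12, 8, 4]   # moves against X: perfect negative correlation

print(pearson_r(X, Y), pearson_r_sigma(X, Y), pearson_r(X, Y_neg))
```

Both formulas give the same value because N times the product of the two population standard deviations equals the square root of the product of the two sums of squares.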

6. Error of measurement for coefficient of correlation

The coefficient of correlation is more reliable if the error of measurement is reduced to the minimum. The probable error is:

$$ P.E._r = 0.6745 \, \frac{1 - r^2}{\sqrt{N}} $$

Functions of Probable Error

- If the value of r is less than the probable error, the value of r is not at all significant.
- If the value of r is more than six times the probable error, the value of r is significant.
- If the probable error is less than 0.3, the correlation should not be considered at all.
- If the probable error is small, the correlation definitely exists.
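A small sketch of the probable error and the first two rules above, in Python. The function names, the use of the absolute value of r (so that negative coefficients are handled), the label "inconclusive" for the region between the two rules, and the sample values r = 0.8, N = 25 are all assumptions made for illustration.

```python
import math


def probable_error(r, n):
    """P.E.(r) = 0.6745 * (1 - r^2) / sqrt(N)."""
    return 0.6745 * (1 - r * r) / math.sqrt(n)


def interpret(r, n):
    """Apply the 'less than P.E.' and 'more than six times P.E.' rules."""
    pe = probable_error(r, n)
    if abs(r) < pe:
        return "not significant"
    if abs(r) > 6 * pe:
        return "significant"
    return "inconclusive"  # hypothetical label; the notes give no rule here


# Hypothetical example: r = 0.8 from 25 paired observations.
pe = probable_error(0.8, 25)
verdict = interpret(0.8, 25)
print(round(pe, 6), verdict)
```

With these values the probable error is about 0.0486, and r is far more than six times that figure, so the coefficient counts as significant under the rule.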

7. Rank Correlation Coefficient

Qualitative characteristics cannot be measured quantitatively, as Pearson's coefficient of correlation requires. The rank method is useful in dealing with qualitative characteristics such as intelligence, beauty, morality, character, etc. It is based on the ranks given to the observations, and it can be used when the items are irregular, extreme, erratic or inaccurate. The rank coefficient of correlation is calculated as:

$$ R = 1 - \frac{6 \sum D^2}{N (N^2 - 1)} $$

Where:

- $R$ = Rank coefficient of correlation
- $\sum D^2$ = Sum of the squares of the differences of two ranks
- $N$ = Number of paired observations

The value of R lies between -1 and +1.

If R = +1, then there is complete agreement in the order of ranks and the direction of the ranks is also the same. If R = -1, then there is complete disagreement in the order of ranks and they are in opposite directions.

We may come across two types of problems:

- Where ranks are given
- Where ranks are not given

When the actual ranks are given, the steps followed are:

1. Compute the difference of the two ranks (R1 and R2) and denote it by D.
2. Square D to get D².
3. Add up the squared differences to obtain ΣD².
4. Apply the formula.
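The steps for the case where ranks are given can be sketched in Python as follows. The function name and the rank lists are hypothetical, and the sketch assumes there are no tied ranks, since the simple formula is exact only in that case.

```python
def rank_correlation(ranks_1, ranks_2):
    """Rank coefficient R = 1 - 6 * sum(D^2) / (N * (N^2 - 1)).

    Assumes both lists hold ranks for the same N items and no ranks are tied.
    """
    if len(ranks_1) != len(ranks_2):
        raise ValueError("both rank lists must have the same length")
    n = len(ranks_1)
    # Steps 1 to 3: differences D, squares D^2, and their sum.
    sum_d2 = sum((a - b) ** 2 for a, b in zip(ranks_1, ranks_2))
    # Step 4: apply the formula.
    return 1 - (6 * sum_d2) / (n * (n ** 2 - 1))


# Hypothetical ranks given to five items by two judges.
R1 = [1, 2, 3, 4, 5]
R2 = [2, 1, 4, 3, 5]

print(rank_correlation(R1, R2))        # differences -1, 1, -1, 1, 0
print(rank_correlation(R1, R1))        # identical order
print(rank_correlation(R1, R1[::-1]))  # exactly reversed order
```

Here ΣD² = 4, so R = 1 - 24/120 = 0.8; identical rankings give +1 and exactly reversed rankings give -1, matching the limits stated above.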

CHAPTER 2 : REGRESSION

1. Definition

Regression estimates or predicts the value of one variable from the given value of the other variable.

For instance, price and supply are correlated. So, we can find out the expected amount of supply for a given price.

In other words, regression helps us to estimate one variable (the dependent variable) from the other variable (the independent variable).

2. Uses of Regression Analysis

The uses of regression analysis are as follows:

1. Regression analysis is used in many scientific studies. It is widely used in social sciences like economics, and in the natural and physical sciences.
2. It is used to estimate the relation between two economic variables like income and expenditure. If we know the income, we can find out the probable expenditure.
3. Regression analysis predicts the value of dependent variables from the values of independent variables.

3. Differences between correlation and regression:

| Correlation | Regression |
| --- | --- |
| Correlation estimates the relationship between two or more variables, which move in the same or opposite direction. | Regression analysis helps to estimate the dependent variable from the independent variable. |
| Both the variables (dependent and independent) vary at random. | In regression analysis one variable is dependent on the other. |
| Correlation estimates the degree of relationship between two (or more) variables but not the cause and effect. | Regression analysis indicates the cause and effect of the relationship between the variables. |
| The measure of coefficient of correlation is relative and varies between +1 and -1. | The measure of regression coefficient is absolute. If the value of one variable is known, the other could be measured. |
| In correlation estimation there may be senseless correlation between two (or more) variables. | In regression there is no senseless relationship. |
| Correlation has limited application. It is confined only to linear relationship. | Regression has wider application. It is applicable to linear and non-linear relationship. |
| Correlation is not applicable for further mathematical treatment. | Regression is applicable for further mathematical treatment. |
| If the coefficient of correlation is positive, the two variables are positively correlated, and vice versa. | The regression coefficient explains that if one variable increases the other decreases. |

4. Method of Studying Regression

There are two methods for studying regression:

- Graphic method
- Algebraic method

4.1 Graphic Method

In this method the corresponding values of the independent and dependent variables are plotted on graph paper. The independent variable is plotted on the horizontal axis and the dependent variable on the vertical axis.

4.2 Algebraic Method

In graphical terms, a regression line is a straight line fitted to the data by the method of least squares. A regression line is the line of best fit. There are always two regression lines between two variables, say, X and Y. One regression line shows the regression of X upon Y, and the other shows the regression of Y upon X. The regression line of X on Y gives the most probable values of X for any given value of Y. In the same manner, the regression line of Y on X gives the most probable values of Y for any given value of X.

The position of the two regression lines depends on the correlation between the two variables:

- If there is perfect positive correlation (+1) between two variables, the two regression lines (X on Y and Y on X) coincide with each other (Annex-2).
- If there is perfect negative correlation (-1) between two variables, the two regression lines (X on Y and Y on X) coincide with each other (Annex-2).
- If there is a higher degree of correlation, the regression lines are nearer to each other (Figure 1).
- If there is a lesser degree of correlation, the two lines are farther away from each other (Figure 2).
- If the coefficient of correlation is zero (r = 0), the two variables are uncorrelated. In this case, the regression lines cut each other at right angles (Figure 3).

The two regression lines (X on Y and Y on X) cut each other at the point of the averages of X and Y. If we draw a perpendicular from that point to the X-axis we get the average value of X, and if we draw a perpendicular from the point to the Y-axis we get the average value of Y.

[Figures 1 to 3: regression lines for different degrees of correlation (images not reproduced)]
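A minimal sketch of the two least-squares regression lines, in Python. The data and all names are hypothetical. The slope formulas used, b_yx = Σxy / Σx² and b_xy = Σxy / Σy² with x and y measured as deviations from their means, are the standard least-squares results and are not written out in the notes above.

```python
def regression_lines(xs, ys):
    """Return slopes and intercepts of the lines of Y on X and X on Y."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sum_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sum_x2 = sum((x - mean_x) ** 2 for x in xs)
    sum_y2 = sum((y - mean_y) ** 2 for y in ys)
    b_yx = sum_xy / sum_x2            # slope of Y on X
    b_xy = sum_xy / sum_y2            # slope of X on Y
    a_yx = mean_y - b_yx * mean_x     # intercept of Y on X
    a_xy = mean_x - b_xy * mean_y     # intercept of X on Y
    return {
        "mean_x": mean_x, "mean_y": mean_y,
        "b_yx": b_yx, "a_yx": a_yx,
        "b_xy": b_xy, "a_xy": a_xy,
    }


# Hypothetical paired observations.
X = [1, 2, 3, 4, 5]
Y = [2, 4, 5, 4, 5]
lines = regression_lines(X, Y)

# Each line evaluated at the mean of its own independent variable
# returns the mean of the other variable, so both pass through the means.
y_at_mean_x = lines["a_yx"] + lines["b_yx"] * lines["mean_x"]
x_at_mean_y = lines["a_xy"] + lines["b_xy"] * lines["mean_y"]
print(lines, y_at_mean_x, x_at_mean_y)
```

For this data the line of Y on X is Y = 2.2 + 0.6 X and the line of X on Y is X = -1 + 1.0 Y. Both pass through the point of averages (3, 4), which is the intersection property described above.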

CHAPTER 3 : INDEX

1. Concepts and Applications : INDEX

Index numbers occupy a very important place in business statistics.

An index number may be described as a specialised average designed to measure the change in the level of a phenomenon with respect to time, geographic location or other characteristics such as income, etc. For a proper understanding of the term index number, the following points should be considered:

Index numbers are specialized averages.

An index number represents the general level of magnitude of the changes between two or more situations of a number of variables taken as a whole.

Index numbers are quantitative measures of the general level of growth of prices, production, inventory and other quantities of economic interest.

2. Uses of Index Numbers

Index numbers help in framing suitable policies. Many economic and business policies are guided by index numbers. For example, when deciding an increase in the dearness allowance of employees, the employer has to depend primarily upon the cost of living index. If wages and salaries are not adjusted in accordance with the cost of living, strikes and lockouts often cause considerable waste of resources. Though index numbers are most widely used in the evaluation of business and economic conditions, there are many other fields where index numbers are useful. For example, sociologists may speak of population indices; psychologists measure intelligence quotients, which are essentially index numbers comparing a person's intelligence score with the average for his or her age; health authorities prepare indices to display changes in the adequacy of hospital facilities; and educational research organizations have devised formulae to measure changes in the effectiveness of school systems.

Index numbers are very useful in deflating. They are used to adjust the original data for price changes, or to adjust wages for cost of living changes, and thus transform nominal wages into real wages.

3. Methods of Constructing Index Numbers

A large number of formulae have been devised for constructing index numbers. They can be grouped under the following two heads (Figure below):

(a) Unweighted indices
(b) Weighted indices

In the unweighted indices weights are not expressly assigned, whereas in the weighted indices weights are assigned to the various items. Each of these types may further be divided under the following two heads (Figure below):

- Simple Aggregative
- Simple Average

[Figure: classification of index numbers, showing unweighted indices divided into simple aggregative and simple average types (image not reproduced)]

4. Unweighted Index Numbers

4.1 Simple Aggregative Method

This is the simplest method of constructing index numbers. When this method is used to construct a price index, the total of current year prices for the various commodities in question is divided by the total of base year prices and the quotient is multiplied by 100. In other words:

$$ \text{Index} = \frac{\text{Total of current year prices for various commodities}}{\text{Total of base year prices for various commodities}} \times 100 $$

Symbolically,

$$ I = \frac{\sum P_1}{\sum P_0} \times 100 $$

Where:

- $I$ = Index
- $\sum P_1$ = Total of current year prices for various commodities (current year prices of all commodities are added)
- $\sum P_0$ = Total of base year prices for various commodities (prices of all commodities from the base year are added)
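The simple aggregative method amounts to one division. A short Python sketch, with a hypothetical function name and hypothetical prices for three commodities:

```python
def simple_aggregative_index(base_prices, current_prices):
    """I = (sum of current year prices / sum of base year prices) * 100."""
    return sum(current_prices) / sum(base_prices) * 100


# Hypothetical prices of three commodities.
base_prices = [10, 20, 30]       # base year, total 60
current_prices = [12, 25, 35]    # current year, total 72

aggregative_index = simple_aggregative_index(base_prices, current_prices)
print(round(aggregative_index, 2))
```

The totals are 72 and 60, so the index is 120, meaning the combined price of the three commodities has risen by 20 percent relative to the base year.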

4.2 Simple Average or Relatives Method

When this method is used to construct a price index, price relatives for the items included in the index are calculated, and then an average of these price relatives is calculated using any one of the measures of central tendency, i.e., arithmetic mean, median, mode, geometric mean or harmonic mean. If the arithmetic mean is used for averaging the price relatives, the formula for computing the index is:

$$ I = \frac{\sum \left( \frac{P_1}{P_0} \times 100 \right)}{N} $$

Where:

- $I$ = Index
- $P_1$ = Current year price of the commodity
- $P_0$ = Base year price of the commodity
- $N$ = Number of commodities whose price relatives are considered

Although any measure of central tendency can be used to obtain the overall index, price relatives are generally averaged by either the arithmetic or the geometric mean.
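A sketch of the relatives method in Python, averaging the price relatives by both the arithmetic and the geometric mean. The function names and prices are hypothetical; `math.prod` requires Python 3.8 or later.

```python
import math


def price_relatives(base_prices, current_prices):
    """Price relative of each commodity: (P1 / P0) * 100."""
    return [p1 / p0 * 100 for p0, p1 in zip(base_prices, current_prices)]


def arithmetic_mean_index(base_prices, current_prices):
    """Index as the arithmetic mean of the price relatives."""
    relatives = price_relatives(base_prices, current_prices)
    return sum(relatives) / len(relatives)


def geometric_mean_index(base_prices, current_prices):
    """Index as the geometric mean of the price relatives."""
    relatives = price_relatives(base_prices, current_prices)
    return math.prod(relatives) ** (1 / len(relatives))


# Hypothetical prices of three commodities.
base_prices = [10, 20, 40]
current_prices = [12, 25, 44]    # relatives: 120, 125, 110

am_index = arithmetic_mean_index(base_prices, current_prices)
gm_index = geometric_mean_index(base_prices, current_prices)
print(round(am_index, 2), round(gm_index, 2))
```

The arithmetic mean of the relatives 120, 125 and 110 is 355 / 3, about 118.33. The geometric mean of unequal positive values is always smaller than their arithmetic mean, so the two indices differ slightly.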

5. Weighted Index Numbers

Weighted index numbers are of two types:

- Weighted Aggregative Index Numbers, and
- Weighted Average of Relative Index Numbers

5.1 Weighted Aggregative Index Numbers

Weighted Aggregative Index Numbers are of the simple aggregative type. There are various methods of assigning weights.

- Laspeyres method
- Paasche method
- Dorbish and Bowley's method
- Fisher's Ideal method
- Marshall-Edgeworth method, and
- Kelly's method

All these methods carry the name of persons who have suggested them.

5.1.1 Laspeyres Method

Laspeyres Index attempts to answer the question:

What is the change in the aggregate value of the base period list of goods when valued at given period prices?

The formula for constructing the index is:

$$ I = \frac{\sum p_1 q_0}{\sum p_0 q_0} \times 100 $$

Steps:

1. Multiply the current year prices of the commodities by the base year weights and obtain $\sum p_1 q_0$.
2. Multiply the base year prices of the commodities by the base year weights and obtain $\sum p_0 q_0$.
3. Divide $\sum p_1 q_0$ by $\sum p_0 q_0$ and multiply the quotient by 100. This gives the price index.
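The Laspeyres steps can be sketched in Python as follows. The function name and the prices and quantities for two commodities are hypothetical.

```python
def laspeyres_index(p0, q0, p1):
    """I = sum(p1 * q0) / sum(p0 * q0) * 100, using base year quantities."""
    current_value = sum(price * qty for price, qty in zip(p1, q0))  # sum p1 q0
    base_value = sum(price * qty for price, qty in zip(p0, q0))     # sum p0 q0
    return current_value / base_value * 100


# Hypothetical data for two commodities.
p0 = [10, 20]   # base year prices
q0 = [5, 3]     # base year quantities (the weights)
p1 = [12, 25]   # current year prices

laspeyres = laspeyres_index(p0, q0, p1)
print(round(laspeyres, 2))
```

Here Σp1q0 = 135 and Σp0q0 = 110, so the index is about 122.73: the base year basket costs roughly 22.7 percent more at current prices.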

5.1.2 Paasche Method

In this method the current year quantities are taken as weights. The formula for constructing the index is:

$$ I = \frac{\sum p_1 q_1}{\sum p_0 q_1} \times 100 $$

Steps:

1. Multiply the current year prices of the commodities by the current year weights and obtain $\sum p_1 q_1$.
2. Multiply the base year prices of the commodities by the current year weights and obtain $\sum p_0 q_1$.
3. Divide $\sum p_1 q_1$ by $\sum p_0 q_1$ and multiply the quotient by 100.
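The Paasche steps differ from the Laspeyres ones only in the weights. A Python sketch with a hypothetical function name and hypothetical data for two commodities:

```python
def paasche_index(p0, p1, q1):
    """I = sum(p1 * q1) / sum(p0 * q1) * 100, using current year quantities."""
    current_value = sum(price * qty for price, qty in zip(p1, q1))  # sum p1 q1
    base_value = sum(price * qty for price, qty in zip(p0, q1))     # sum p0 q1
    return current_value / base_value * 100


# Hypothetical data for two commodities.
p0 = [10, 20]   # base year prices
p1 = [12, 25]   # current year prices
q1 = [6, 2]     # current year quantities (the weights)

paasche = paasche_index(p0, p1, q1)
print(round(paasche, 2))
```

Here Σp1q1 = 122 and Σp0q1 = 100, so the index is 122. Because the weights are the current year quantities, the result generally differs from a Laspeyres index computed on the same prices.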

CHAPTER 6 : HYPOTHESIS

1. What Is a Hypothesis?

A hypothesis is a statement about a population.

Hypothesis: a statement about a population developed for the purpose of testing.

Five Steps in Hypothesis Testing:

1. Specify the Null Hypothesis
2. Specify the Alternative Hypothesis
3. Set the Significance Level (α)
4. Calculate the Test Statistic and Corresponding P-Value
5. Draw a Conclusion

Step 1: Specify the Null Hypothesis

The null hypothesis (H0) is a statement of no effect, relationship, or difference between two or more groups or factors. In research studies, a researcher is usually interested in disproving the null hypothesis.

Step 2: Specify the Alternative Hypothesis

The alternative hypothesis (H1) is the statement that there is an effect or difference. This is usually the hypothesis the researcher is interested in proving. The alternative hypothesis can be one-sided (it specifies only one direction, e.g., lower) or two-sided.

Step 3: Set the Significance Level (α)

The significance level (denoted by the Greek letter alpha, α) is generally set at 0.05. This means that there is a 5% chance that you will accept your alternative hypothesis when your null hypothesis is actually true. The smaller the significance level, the greater the burden of proof needed to reject the null hypothesis.

Step 4: Calculate the Test Statistic and Corresponding P-Value

In another section we present some basic test statistics to evaluate a hypothesis. Hypothesis testing generally uses a test statistic that compares groups or examines associations between variables. When describing a single sample without establishing relationships between variables, a confidence interval is commonly used. The p-value describes the probability of obtaining a sample statistic as extreme or more extreme by chance alone if your null hypothesis is true. This p-value is determined based on the result of your test statistic. Your conclusions about the hypothesis are based on your p-value and your significance level.

Step 5: Drawing a Conclusion

1. P-value <= significance level (α) => Reject your null hypothesis in favor of your alternative hypothesis. Your result is statistically significant.
2. P-value > significance level (α) => Fail to reject your null hypothesis. Your result is not statistically significant.
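The five steps can be traced in a short Python sketch. The notes do not specify a particular test, so this example assumes a two-sided one-sample z-test with a known population standard deviation; the function name and all numbers are hypothetical. `statistics.NormalDist` is part of the Python standard library (3.8 or later).

```python
import math
from statistics import NormalDist


def one_sample_z_test(sample_mean, mu0, sigma, n, alpha=0.05):
    """Two-sided z-test of H0: mu = mu0 against H1: mu != mu0.

    Assumes the population standard deviation sigma is known.
    Returns the test statistic, the p-value and the reject decision.
    """
    # Step 4: test statistic and corresponding p-value.
    z = (sample_mean - mu0) / (sigma / math.sqrt(n))
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    # Step 5: reject H0 when the p-value is at most the significance level.
    reject_h0 = p_value <= alpha
    return z, p_value, reject_h0


# Steps 1 to 3 (hypothetical): H0: mu = 50, H1: mu != 50, alpha = 0.05.
# Sample of n = 25 with mean 52, known sigma = 5.
z, p_value, reject_h0 = one_sample_z_test(52.0, 50.0, 5.0, 25)
print(z, round(p_value, 4), reject_h0)
```

With these numbers z = 2.0 and the p-value is about 0.0455, which is below 0.05, so the null hypothesis is rejected and the result is statistically significant at the 5% level.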

LEVEL OF SIGNIFICANCE

The methods of inference used to support or reject claims based on sample data are known as tests of significance.

Every test of significance begins with a null hypothesis H0. H0 represents a theory that has been put forward. For example, in a clinical trial of a new drug, the null hypothesis might be that the new drug is no better, on average, than the current drug. We would write H0: there is no difference between the two drugs on average.

The alternative hypothesis, Ha, is a statement of what a statistical hypothesis test is set up to establish. For example, in a clinical trial of a new drug, the alternative hypothesis might be that the new drug has a different effect, on average, compared to that of the current drug. We would write Ha: the two drugs have different effects, on average. The alternative hypothesis might also be that the new drug is better, on average, than the current drug. In this case we would write Ha: the new drug is better than the current drug, on average.

ONE-SIDED or TWO-SIDED

Hypotheses are always stated in terms of a population parameter, such as the mean $\mu$. An alternative hypothesis may be one-sided or two-sided.

A one-sided hypothesis claims that a parameter is either larger or smaller than the value given by the null hypothesis. A two-sided hypothesis claims that a parameter is simply not equal to the value given by the null hypothesis -- the direction does not matter.

Hypotheses for a one-sided test for a population mean take the following form: $H_0: \mu = k$, $H_a: \mu > k$ or $H_0: \mu = k$, $H_a: \mu < k$.

Hypotheses for a two-sided test for a population mean take the following form: $H_0: \mu = k$, $H_a: \mu \neq k$.
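The choice between a one-sided and a two-sided alternative changes how the p-value is computed from the same test statistic. The sketch below assumes a z statistic with a standard normal distribution under the null hypothesis; the function name and the labels "greater", "less" and "two-sided" are hypothetical.

```python
from statistics import NormalDist


def p_value(z, alternative):
    """P-value of a z statistic for the given form of the alternative."""
    cdf = NormalDist().cdf
    if alternative == "greater":      # Ha: mu > k
        return 1 - cdf(z)
    if alternative == "less":         # Ha: mu < k
        return cdf(z)
    if alternative == "two-sided":    # Ha: mu != k
        return 2 * (1 - cdf(abs(z)))
    raise ValueError("alternative must be 'greater', 'less' or 'two-sided'")


z = 2.0  # hypothetical test statistic
for alternative in ("greater", "less", "two-sided"):
    print(alternative, round(p_value(z, alternative), 4))
```

For z = 2.0 the upper-tail p-value is about 0.0228, the lower-tail p-value about 0.9772, and the two-sided p-value about 0.0455, which is twice the smaller tail because direction does not matter.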

TYPE 1 ERROR & TYPE 2 ERROR

Type I Error

Rejecting the null hypothesis when it is in fact true is called a Type I error.

When a hypothesis test results in a p-value that is less than the significance level, the result of the hypothesis test is called statistically significant.

Type II Error

Not rejecting the null hypothesis when in fact the alternate hypothesis is true is called a Type II error.

Note: "The alternate hypothesis" in the definition of Type II error may refer to the alternate hypothesis in a hypothesis test, or it may refer to a "specific" alternate hypothesis.

Example: In a t-test for a sample mean $\mu$, with null hypothesis "$\mu = 0$" and alternate hypothesis "$\mu > 0$", we may talk about the Type II error relative to the general alternate hypothesis "$\mu > 0$", or may talk about the Type II error relative to the specific alternate hypothesis "$\mu > 1$". Note that the specific alternate hypothesis is a special case of the general alternate hypothesis.
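The probability of a Type II error can only be computed once a specific alternative is fixed. The sketch below is a simplification of the example above, not a reproduction of it: it assumes a one-sided z-test with known standard deviation instead of a t-test, and it takes the single value μ = 1 as an illustrative specific alternative. The function name and all numbers are hypothetical.

```python
import math
from statistics import NormalDist


def type_ii_error(mu0, mu_alt, sigma, n, alpha=0.05):
    """P(fail to reject H0: mu = mu0) when the true mean is mu_alt.

    One-sided test of H0 against Ha: mu > mu0, sigma assumed known.
    """
    se = sigma / math.sqrt(n)
    # H0 is rejected when the sample mean exceeds this critical value.
    critical_mean = mu0 + NormalDist().inv_cdf(1 - alpha) * se
    # Probability that the sample mean stays at or below it under mu_alt.
    return NormalDist(mu_alt, se).cdf(critical_mean)


# Hypothetical setting: H0: mu = 0, specific alternative mu = 1,
# sigma = 1, n = 9, alpha = 0.05.
beta = type_ii_error(0.0, 1.0, 1.0, 9)
power = 1 - beta
print(round(beta, 4), round(power, 4))
```

In this setting the Type II error probability is a little under 0.09, so the test detects a true mean of 1 in more than nine cases out of ten. Setting the alternative equal to the null value returns 1 - α, the probability of correctly not rejecting a true null hypothesis.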
