
9/9/12

Document

Solaris 10 IP Multipathing (IPMP) Link-based Only Failure Detection [ID 1008064.1]

Modified: Dec 1, 2011 Type: HOWTO Migrated ID: 211105 Status: PUBLISHED Priority: 3

Applies to:

Solaris SPARC Operating System - Version: 10 3/05 to 10 8/11 [Release: 10.0 to 10.0]
Solaris x64/x86 Operating System - Version: 10 3/05 to 10 8/11 [Release: 10.0 to 10.0]
All Platforms

Goal

Explain the different types of failure detection modes used by IP Multipathing (IPMP) and in particular how to set up IPMP in link-based only failure detection mode. Provide simple configuration examples.

Solution

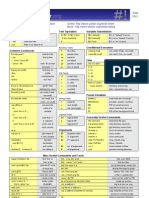

Description

Most of the information is already documented in the IP Services/IPMP part of the Solaris[TM] System Administration Guide (816-4554). This document is a short summary of the failure detection types, with typical and recommended configuration examples that use link-based failure detection only. Link-based failure detection was already supported before Solaris[TM] 10, provided that the network driver in use supports DLPI link up/down notifications. With Solaris 10 it is now possible to use this failure detection type on its own, without any probing (probe-based failure detection).

Steps to Follow

IPMP Link-based Only Failure Detection with Solaris[TM] 10 Operating System (OS)

Contents:

1. Types of Failure Detection
1.1. Link-based Failure Detection
1.2. Probe-based Failure Detection
2. Configuration Examples using Link-based Failure Detection only
2.1. Single Interface
2.2. Multiple Interfaces
2.2.1. Active-Active
2.2.1.1. Two Interfaces
2.2.1.2. Two Interfaces + logical
2.2.1.3. Three Interfaces
2.2.2. Active-Standby
2.2.2.1. Two Interfaces
2.2.2.2. Two Interfaces + logical
3. References

1. Types of Failure Detection

1.1. Link-based Failure Detection

Link-based failure detection is always enabled, provided the interface's network driver supports it, whether or not the optional probe-based failure detection is used. As per PSARC/1999/225, network drivers send the asynchronous DLPI notifications DL_NOTE_LINK_DOWN (link/NIC is down) and DL_NOTE_LINK_UP (link/NIC is up). IP uses these notifications to clear and set the IFF_RUNNING flag, which, in the absence of such notifications, is always set for an interface that is up. The failure detection software detects changes to IFF_RUNNING immediately. Support for these DLPI notifications was added to the network drivers gradually; since Solaris 10, almost all drivers support them.


With link-based failure detection, only the link between the local interface and its link partner is checked, at the hardware layer. Neither the IP layer nor any further network path is monitored. No test addresses are required for link-based failure detection.

For more information, refer to Solaris 10 System Administration Guide: IP Services >> IPMP >> 30. Introducing IPMP (Overview) >> Link-Based Failure Detection

1.2. Probe-based Failure Detection

Probe-based failure detection is performed on each interface in the IPMP group that has a test address. Using this test address, ICMP probe messages go out over the interface to one or more target systems on the same IP link. The in.mpathd daemon determines dynamically which target systems to probe:

- All default routes on the same IP link are used as probe targets.
- All host routes on the same IP link are used as probe targets (see Configuring Target Systems).
- If neither default nor host routes are available, in.mpathd sends an all-hosts multicast to 224.0.0.1 (IPv4) and ff02::1 (IPv6) to find neighbor hosts on the link.

Note: Available probe targets are determined dynamically, so the in.mpathd daemon does not have to be restarted.

The in.mpathd daemon probes all targets separately through all interfaces in the IPMP group. The probing rate depends on the failure detection time (FDT) specified in /etc/default/mpathd (default 10 seconds), with five probes sent within each FDT period. If five consecutive probes fail, in.mpathd considers the interface to have failed. The minimum repair detection time is twice the failure detection time, 20 seconds by default, because replies to 10 consecutive probes must be received.

Without any configured host routes, the default route is used as the single probe target in most cases. The whole network path up to the gateway (router) is then monitored at the IP layer. If all interfaces in the IPMP group are connected via redundant network paths (switches etc.), you get full redundancy.

On the other hand, the default router can be a single point of failure, resulting in 'All Interfaces in group have failed'. Even with the default gateway down, it can make sense not to fail the whole IPMP group and to keep allowing traffic within the local network. In this case, specific probe targets (hosts or active network components) can be configured via host routes. Which network path to monitor is therefore a question of network design.

A test address is required on each interface in the IPMP group, but the test addresses can be in a different IP subnet than the data address(es). Private network addresses as specified by RFC 1918 (e.g. 10/8, 172.16/12, or 192.168/16) can therefore be used as well.

For more information, refer to Solaris 10 System Administration Guide: IP Services >> IPMP >> 30. Introducing IPMP (Overview) >> Probe-Based Failure Detection
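The timing figures quoted for probe-based detection can be reproduced with a little arithmetic. The following is only a rough sketch: it assumes a POSIX-style shell (such as ksh or bash, not the legacy Bourne shell), the helper names are made up for illustration, and in.mpathd itself may schedule probes differently in detail. FAILURE_DETECTION_TIME is the parameter in /etc/default/mpathd and is given in milliseconds.

```shell
# Assumed setting, as it would appear in /etc/default/mpathd (10000 ms is the default).
FAILURE_DETECTION_TIME=10000
# Five consecutive failed probes within one FDT mark an interface as failed.
PROBES_PER_FDT=5

# Hypothetical helper: approximate interval between probes, in milliseconds.
probe_interval_ms() {
    echo $(( $1 / $PROBES_PER_FDT ))
}

# Hypothetical helper: minimum repair detection time (twice the FDT), in milliseconds.
repair_time_ms() {
    echo $(( $1 * 2 ))
}

echo "approx. probe interval: $(probe_interval_ms "$FAILURE_DETECTION_TIME") ms"
echo "min. repair detection:  $(repair_time_ms "$FAILURE_DETECTION_TIME") ms"
```

With the default FDT this gives roughly one probe every 2 seconds, and 10 probe replies at that rate account for the 20-second minimum repair detection time.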

2. Configuration Examples using Link-based Failure Detection

An IPMP configuration typically consists of two or more physical interfaces on the same system that are attached to the same IP link. These physical interfaces might or might not be on the same NIC. The interfaces are configured as members of the same IPMP group.

A single interface can also be configured in its own IPMP group. A single-interface IPMP group behaves like an IPMP group with multiple interfaces, except that failover and failback cannot occur.

The following message indicates a link-based failure detection only configuration. It is reported for each interface in the group.

/var/adm/messages

    in.mpathd[144]: [ID 975029 daemon.error] No test address configured on interface ce0; disabling probe-based failure detection on it
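One way to list the interfaces for which in.mpathd has logged this message is to filter the system log. The sketch below assumes a POSIX shell and the message wording "No test address configured on interface <name>; disabling probe-based failure detection on it"; the function name link_only_interfaces is made up for illustration.

```shell
# Hypothetical helper: read syslog lines on stdin and print each interface
# name for which in.mpathd reported that probe-based detection is disabled.
link_only_interfaces() {
    sed -n 's/.*No test address configured on interface \([^;]*\); disabling probe-based failure detection.*/\1/p' | sort -u
}

# On a live system the input would be the log file itself:
#   link_only_interfaces < /var/adm/messages
# Here a sample line is fed in so the sketch runs anywhere.
printf '%s\n' 'in.mpathd[144]: [ID 975029 daemon.error] No test address configured on interface ce0; disabling probe-based failure detection on it' | link_only_interfaces
```

Every interface of a link-based only IPMP group should appear in the result.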

So in this configuration the message is not an error, but rather a confirmation that probe-based failure detection has been disabled correctly.

2.1. Single Interface

/etc/hostname.ce0

    192.168.10.10 netmask + broadcast + group ipmp0 up

    # ifconfig -a
    ce0: flags=1000843<UP,BROADCAST,RUNNING,MULTICAST,IPv4> mtu 1500 index 4
            inet 192.168.10.10 netmask ffffff00 broadcast 192.168.10.255
            groupname ipmp0
            ether 0:3:ba:93:90:fc

2.2. Multiple Interfaces

2.2.1. Active-Active

2.2.1.1. Two Interfaces

/etc/hostname.ce0

    192.168.10.10 netmask + broadcast + group ipmp0 up

/etc/hostname.ce1

    group ipmp0 up

    # ifconfig -a
    ce0: flags=1000843<UP,BROADCAST,RUNNING,MULTICAST,IPv4> mtu 1500 index 4
            inet 192.168.10.10 netmask ffffff00 broadcast 192.168.10.255
            groupname ipmp0
            ether 0:3:ba:93:90:fc
    ce1: flags=1000843<UP,BROADCAST,RUNNING,MULTICAST,IPv4> mtu 1500 index 5
            inet 0.0.0.0 netmask ff000000 broadcast 0.255.255.255
            groupname ipmp0
            ether 0:3:ba:93:91:35

2.2.1.2. Two Interfaces + logical

/etc/hostname.ce0

    192.168.10.10 netmask + broadcast + group ipmp0 up \
    addif 192.168.10.11 netmask + broadcast + up

/etc/hostname.ce1

    group ipmp0 up

    # ifconfig -a
    ce0: flags=1000843<UP,BROADCAST,RUNNING,MULTICAST,IPv4> mtu 1500 index 4
            inet 192.168.10.10 netmask ffffff00 broadcast 192.168.10.255
            groupname ipmp0
            ether 0:3:ba:93:90:fc
    ce0:1: flags=1000843<UP,BROADCAST,RUNNING,MULTICAST,IPv4> mtu 1500 index 4
            inet 192.168.10.11 netmask ffffff00 broadcast 192.168.10.255
    ce1: flags=1000843<UP,BROADCAST,RUNNING,MULTICAST,IPv4> mtu 1500 index 5
            inet 0.0.0.0 netmask ff000000 broadcast 0.255.255.255
            groupname ipmp0
            ether 0:3:ba:93:91:35

2.2.1.3. Three Interfaces

/etc/hostname.ce0

    192.168.10.10 netmask + broadcast + group ipmp0 up

/etc/hostname.ce1

    group ipmp0 up

/etc/hostname.bge1

    group ipmp0 up

    # ifconfig -a
    bge1: flags=1000843<UP,BROADCAST,RUNNING,MULTICAST,IPv4> mtu 1500 index 3
            inet 0.0.0.0 netmask ff000000 broadcast 0.255.255.255
            groupname ipmp0
            ether 0:9:3d:11:91:1b
    ce0: flags=1000843<UP,BROADCAST,RUNNING,MULTICAST,IPv4> mtu 1500 index 4
            inet 192.168.10.10 netmask ffffff00 broadcast 192.168.10.255
            groupname ipmp0
            ether 0:3:ba:93:90:fc
    ce1: flags=1000843<UP,BROADCAST,RUNNING,MULTICAST,IPv4> mtu 1500 index 5
            inet 0.0.0.0 netmask ff000000 broadcast 0.255.255.255
            groupname ipmp0
            ether 0:3:ba:93:91:35

2.2.2. Active-Standby

2.2.2.1. Two Interfaces

/etc/hostname.ce0

    192.168.10.10 netmask + broadcast + group ipmp0 up

/etc/hostname.ce1

    group ipmp0 standby up

    # ifconfig -a
    ce0: flags=1000843<UP,BROADCAST,RUNNING,MULTICAST,IPv4> mtu 1500 index 4
            inet 192.168.10.10 netmask ffffff00 broadcast 192.168.10.255
            groupname ipmp0
            ether 0:3:ba:93:90:fc
    ce0:1: flags=1000843<UP,BROADCAST,RUNNING,MULTICAST,IPv4> mtu 1500 index 4
            inet 0.0.0.0 netmask ff000000 broadcast 0.255.255.255
    ce1: flags=69000842<BROADCAST,RUNNING,MULTICAST,IPv4,NOFAILOVER,STANDBY,INACTIVE> mtu 0 index 5
            inet 0.0.0.0 netmask 0
            groupname ipmp0
            ether 0:3:ba:93:91:35
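The flags value that ifconfig prints is a hexadecimal bit mask, so the standby state of an interface can also be read from the number itself. The sketch below assumes a POSIX-style shell and uses the bit values as recalled from the Solaris <net/if.h> header; treat those constants, and the hypothetical helpers has_flag and ipmp_role, as assumptions to verify on your own system. ifconfig prints the value without the 0x prefix, which is added here for shell arithmetic.

```shell
# Interface flag bits (assumed values, to be checked against <net/if.h>).
IFF_UP=0x1
IFF_RUNNING=0x40
IFF_NOFAILOVER=0x8000000
IFF_STANDBY=0x20000000
IFF_INACTIVE=0x40000000

# has_flag VALUE BIT: succeeds when BIT is set in VALUE.
has_flag() {
    [ $(( $1 & $2 )) -ne 0 ]
}

# ipmp_role VALUE: rough classification of an interface from its flags value.
# Assumption: a standby interface that has taken over traffic no longer
# carries the INACTIVE flag.
ipmp_role() {
    if has_flag "$1" "$IFF_STANDBY" && has_flag "$1" "$IFF_INACTIVE"; then
        echo "idle standby"
    elif has_flag "$1" "$IFF_STANDBY"; then
        echo "standby in use"
    else
        echo "active"
    fi
}

ipmp_role 0x1000843     # flags of ce0 in the example
ipmp_role 0x69000842    # flags of ce1 in the example
```

The same test against IFF_RUNNING shows whether the link is currently reported as up, which is the only criterion that link-based failure detection uses.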

2.2.2.2. Two Interfaces + logical

/etc/hostname.ce0

    192.168.10.10 netmask + broadcast + group ipmp0 up \
    addif 192.168.10.11 netmask + broadcast + up

/etc/hostname.ce1

    group ipmp0 standby up

    # ifconfig -a
    ce0: flags=1000843<UP,BROADCAST,RUNNING,MULTICAST,IPv4> mtu 1500 index 4
            inet 192.168.10.10 netmask ffffff00 broadcast 192.168.10.255
            groupname ipmp0
            ether 0:3:ba:93:90:fc
    ce0:1: flags=1000843<UP,BROADCAST,RUNNING,MULTICAST,IPv4> mtu 1500 index 4
            inet 192.168.10.11 netmask ffffff00 broadcast 192.168.10.255
    ce0:2: flags=1000843<UP,BROADCAST,RUNNING,MULTICAST,IPv4> mtu 1500 index 4
            inet 0.0.0.0 netmask ff000000 broadcast 0.255.255.255
    ce1: flags=69000842<BROADCAST,RUNNING,MULTICAST,IPv4,NOFAILOVER,STANDBY,INACTIVE> mtu 0 index 5
            inet 0.0.0.0 netmask 0
            groupname ipmp0
            ether 0:3:ba:93:91:35

3. References

in.mpathd(1M)
Solaris 10 System Administration Guide: Introducing IPMP (Overview)
Solaris 10 System Administration Guide: Administering IPMP (Tasks)

To discuss this information further with Oracle experts and industry peers, we encourage you to review, join or start a discussion in the My Oracle Support Community, Oracle Solaris Networking Community.

References

NOTE:1010640.1 - Summary of Typical Solaris IP Multipathing (IPMP) Configurations
NOTE:1382335.1 - Transitioning From Solaris 10 IP Multipathing (IPMP) to Oracle Solaris 11 IPMP

https://support.oracle.com/epmos/faces/ui/km/DocumentDisplay.jspx?_adf.ctrl-state=hfngveqxz_266
