
International Arab Journal of e-Technology, Vol. 1, No. 4, June 2010

Text Summarization Extraction System (TSES)

Using Extracted Keywords

Rafeeq Al-Hashemi

Faculty of Information Technology, Al-Hussein Bin Talal University, Jordan

Abstract: This paper investigates a new technique for producing a summary of an original text. The system combines several approaches to this problem and gives high-quality results. The model consists of four stages. The preprocessing stage converts the unstructured text into a structured form: in this first stage, the system removes the stop words, parses the text, assigns a part-of-speech (POS) tag to each word, and stores the result in a table. The second stage extracts the important keyphrases from the text by implementing a new algorithm that ranks the candidate words. The system uses the extracted keywords/keyphrases to select the important sentences. Each sentence is ranked according to many features, such as the presence of keywords/keyphrases in it and the relation between the sentence and the title, computed with a similarity measure. The third stage extracts the sentences with the highest rank. The fourth stage is the filtering stage, which reduces the number of candidate sentences in the summary, using the KFIDF measure, in order to produce a higher-quality summary.

Keywords: Text Summarization, Keyphrase Extraction, Text Mining, Data Mining, Text Compression.

Received October 2, 2009; Accepted March 13, 2010

1. Introduction

Text mining can be described as the process of identifying novel information from a collection of texts. By novel information we mean associations and hypotheses that are not explicitly present in the text source being analyzed [9].

In [4], Hearst makes one of the first attempts to clearly establish what constitutes text mining and to distinguish it from information retrieval and data mining. Hearst metaphorically describes text mining as the process of mining precious nuggets of ore from a mountain of otherwise worthless rock, and calls it the process of discovering heretofore unknown information from a text source.

For example, suppose that a document establishes a

relationship between topics A and B and another

document establishes a relationship between topics B and

C. These two documents jointly establish the possibility of

a novel (because no document explicitly relates A and C)

relationship between A and C.

Automatic summarization involves reducing a text document, or a larger corpus of multiple documents, into a short set of words or a paragraph that conveys the main meaning of the text. There are two methods for automatic text summarization: extractive and abstractive. Extractive methods work by selecting a subset of existing words, phrases, or sentences in the original text to form the summary. In contrast, abstractive methods build an internal semantic representation and then use natural language generation techniques to create a summary that is closer to what a human might generate. Such a summary might contain words not explicitly present in the original. Abstractive methods are still weak, so most research has focused on extractive methods, which are the subject of this paper.

Two particular types of summarization are often addressed in the literature: keyphrase extraction, where the goal is to select individual words or phrases to tag a document, and document summarization, where the goal is to select whole sentences to create a short paragraph summary [9, 2, 7].

Our project uses an extractive method built on the idea of extracting keywords, even those that do not appear explicitly in the text. One of the main contributions of the proposed project is the design of the keyword extraction subsystem, which helps to select good sentences for the summary.

2. Related Work

The study in [3] proposed an automatic summarization method that combines conventional sentence extraction with a trainable classifier based on a Support Vector Machine. It also introduces a sentence segmentation process that makes the extraction unit smaller than in the original sentence extraction. The evaluation results show that the system comes closer to the human-constructed summaries (the upper bound) at a 20% summary rate. On the other hand, the system needs to improve the readability of its summary output.

The study in [6] discusses approaches that generate synthetic summaries of input documents. These approaches, though similar to human summarization of texts, are limited in the sense that synthesizing the information requires modelling the latent discourse of documents, which in some cases is prohibitive. A simple approach to this problem is to extract relevant sentences with respect to the main idea of the documents. In this case, sentences are represented with numerical features indicating the position of sentences within each document, their length (in terms of the words they contain), and their similarity with respect to the document title, together with binary features indicating whether sentences contain cue terms or acronyms found to be relevant for the summarization task. These characteristics are then combined, and the first p% of sentences having the highest scores are returned as the document summary. The first learning summarizers were developed under the classification framework, where the goal is to learn the combination weights in order to separate summary sentences from the other ones.

The work in [2] presents a sentence reduction system for automatically removing extraneous phrases from sentences that are extracted from a document for summarization purposes. The system uses multiple sources of knowledge to decide which phrases in an extracted sentence can be removed, including syntactic knowledge, context information, and statistics computed from a corpus that consists of examples written by human professionals. Reduction can significantly improve the conciseness of automatic summaries.

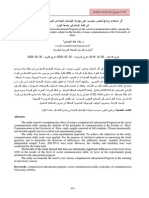

3. The proposed System Architecture

Figure 1 shows the architecture of the proposed system.

The model consists of the following stages:

4. Preprocessing

Preprocessing is the primary step that loads the text into the proposed system and applies operations such as case folding, which converts the text to lower case and improves the accuracy of the system in distinguishing similar words. The preprocessing steps are:

4.1. Stop Word Removal

The procedure creates a filter for stop words and removes them from the text. Using a stop list has the advantage of reducing the number of candidate keywords.
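The case-folding and stop-word steps described above can be sketched as follows. This is a minimal illustration, not the system's actual filter: the `STOP_WORDS` set and the `preprocess` function are hypothetical names, and a real stop list would be far larger.

```python
import re

# Hypothetical, deliberately tiny stop list (assumption for illustration only).
STOP_WORDS = {"the", "a", "an", "of", "in", "is", "to", "and", "for"}


def preprocess(text):
    """Case-fold the text, split it into alphabetic tokens, and drop stop words."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return [token for token in tokens if token not in STOP_WORDS]


print(preprocess("The Design of a Neural Network"))
```

Because the text is lower-cased before filtering, "The" and "the" are treated as the same stop word, which is the point of applying case folding first.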

4.2. Word Tagging

Word tagging is the process of assigning a part-of-speech (P.O.S.) tag (noun, verb, pronoun, etc.) to each word in a sentence to give its word class. The input to a tagging algorithm is a set of words in a natural language and a specified tag set. The first step in any tagging process is to look up the token in a dictionary. The dictionary created for the proposed system consists of 230,000 words and is used to assign each word its correct tag. The dictionary is partitioned into tables, one for each tag type (class), such as noun, verb, etc., based on each P.O.S. category. The system searches for the word in the tables and, if there are alternatives, selects the correct tag depending on the tags of the previous and next words in the sentence.
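A dictionary-lookup tagger with context-based disambiguation might look like the sketch below. Everything in it is an assumption made for illustration: the `LEXICON` entries, the `PREFERRED_AFTER` rule table, and the default tag for unknown words are hypothetical, and the sketch consults only the previous tag, whereas the system described above also uses the next word.

```python
# Hypothetical lookup dictionary: word -> possible tags (first entry is the default).
LEXICON = {
    "system": ["noun"],
    "ranks": ["noun", "verb"],
    "candidate": ["adj", "noun"],
    "words": ["noun"],
}

# Hypothetical context rule: tag of the previous word -> preferred tag for this word.
PREFERRED_AFTER = {"adj": "noun", "noun": "verb", "verb": "adj"}


def tag(tokens):
    """Assign one tag per token, using the previous tag to resolve ambiguity."""
    tags = []
    for token in tokens:
        options = LEXICON.get(token, ["noun"])  # assumption: unknown words are nouns
        choice = options[0]
        if len(options) > 1 and tags:
            preferred = PREFERRED_AFTER.get(tags[-1])
            if preferred in options:
                choice = preferred
        tags.append(choice)
    return list(zip(tokens, tags))


print(tag(["system", "ranks", "candidate", "words"]))
```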

4.3. Stemming

Removing suffixes by automatic means is an operation that is especially useful in keyword extraction and information retrieval.

The proposed system employs the Porter stemming algorithm [5] with some improvements to its stemming rules.

Terms with a common stem will usually have similar meanings, for example:

(CONNECT, CONNECTED, CONNECTING, CONNECTION, CONNECTIONS)

Frequently, the performance of a keyword extraction system will be improved if term groups such as these are conflated into a single term. This may be done by removal of the various suffixes -ED, -ING, -ION, -IONS to leave the single term CONNECT. In addition, the suffix stripping process will reduce the number of terms in the system, and hence reduce the size and complexity of the data in the system, which is always advantageous.
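The conflation of the CONNECT group can be illustrated with a toy suffix stripper. This is not the Porter algorithm, which applies several ordered rule steps with conditions on the remaining stem; the `SUFFIXES` tuple and the minimum stem length of three letters are assumptions chosen only to reproduce the example above.

```python
# Suffixes from the example, longest first so "-ions" is tried before "-ion".
SUFFIXES = ("ions", "ion", "ing", "ed")


def strip_suffix(word):
    """Remove one known suffix if at least three letters of stem remain."""
    word = word.lower()
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


terms = ["CONNECT", "CONNECTED", "CONNECTING", "CONNECTION", "CONNECTIONS"]
print({strip_suffix(term) for term in terms})
```

All five terms collapse to the single stem `connect`, so they count as one candidate term in later stages.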

5. Keyphrase Features

The system uses the following features to identify relevant words or phrases (keywords):

- Term frequency.
- Inverse document frequency.
- Existence in the document title and font type.
- Part of speech approach.

Figure 1. System architecture.

Table 1. P.O.S. Patterns.

5.1. Inverse Document Frequency (IDF)

Terms that occur in only a few documents are often more valuable than ones that occur in many. In other words, it is important to know in how many documents of the collection a certain word exists, since a word that is common in a document but also common in most other documents is less useful for differentiating that document from others [10]. IDF measures the information content of a word.

The inverse document frequency is calculated with the following formula [8]:

$$\mathrm{Idf}_i = tf \cdot \log\left(\frac{N}{n_i}\right) \qquad (1)$$

where $tf$ is the term frequency of term $i$, $N$ denotes the number of documents in the collection, and $n_i$ is the number of documents in which term $i$ occurs.
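Equation (1) can be evaluated directly, as in the short sketch below. The function name `idf_weight` and the sample counts are hypothetical, and the natural logarithm is an assumption, since the base of the logarithm is not stated.

```python
import math


def idf_weight(tf, n_docs, n_docs_with_term):
    """Weight of a term: tf * log(N / n_i), following equation (1)."""
    return tf * math.log(n_docs / n_docs_with_term)


# A term found in every document carries no weight, however frequent it is.
print(idf_weight(tf=3, n_docs=100, n_docs_with_term=100))
# With the same tf, a rarer term scores higher than a widespread one.
print(idf_weight(tf=2, n_docs=100, n_docs_with_term=10))
print(idf_weight(tf=2, n_docs=100, n_docs_with_term=50))
```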

5.2. Existence in the Document Title and Font

Type

Existence in the document title and font type are further features that raise the score of candidate keywords. The proposed system gives more weight to words that appear in the document title because of their importance and indication of relevance. Capital letters and font type can also signal the importance of a word, so the system takes them into account.

5.3. Part of Speech Approach

After examining the keywords that were assigned manually by the authors of articles in the field of computer science, we noted that those keywords fall into one of the patterns displayed in Table 1. The proposed system improves this approach by discovering a new set of patterns (21 rules) that are frequently used in computer science. This linguistic approach extracts the phrases that match any of these patterns as candidate keywords. These patterns are the most frequent keyword patterns found in our experiments.

5.4. Keyphrase Weight Calculation

The proposed system computes the weight for each candidate keyphrase using all the features mentioned earlier. The weight represents the strength of the keyphrase: the higher the weight, the more likely the candidate is to be a good keyword (keyphrase). The extracted keyphrases are the input to the next stage of text summarization.

The range of scores depends on the input text. The system selects the N keywords with the highest values.

| No. | POS pattern |
|---|---|
| 1 | <adj> <noun> |
| 2 | <noun> <noun> |
| 3 | <noun> |
| 4 | <noun> <noun> <noun> |
| 5 | <adj> <adj> <noun> |
| 6 | <adj> |
| 7 | <adj> <adj> <noun> <noun> |
| 8 | <noun> <verb> |
| 9 | <noun> <noun> <noun> <noun> |
| 10 | <noun> <verb> <noun> |
| 11 | <noun> <adj> <noun> |
| 12 | <prep> <adj> <noun> |
| 13 | <adj> <adj> <adj> <noun pl> |
| 14 | <noun> <adj> <noun pl> |
| 15 | <adj> <adj> <adj> <noun> |
| 16 | <noun pl> <noun> |
| 17 | <adj> <propern> |
| 18 | <adj> <noun> <verb> |
| 19 | <adj> <adj> |
| 20 | <adj> <noun> <noun> |
| 21 | <noun> <noun> <verb> |
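Matching tagged text against P.O.S. patterns can be sketched as a sliding window over the tag sequence. The sketch below uses only the first five patterns of Table 1 to stay short; the `PATTERNS` set, the `candidate_phrases` function, and the sample input are hypothetical and not the system's implementation.

```python
# First five patterns from Table 1, written as tuples of tags.
PATTERNS = {
    ("adj", "noun"),
    ("noun", "noun"),
    ("noun",),
    ("noun", "noun", "noun"),
    ("adj", "adj", "noun"),
}


def candidate_phrases(tagged):
    """Return every contiguous phrase whose tag sequence matches a pattern.

    tagged is a list of (word, tag) pairs for one sentence.
    """
    max_len = max(len(pattern) for pattern in PATTERNS)
    found = []
    for start in range(len(tagged)):
        for length in range(1, max_len + 1):
            window = tagged[start:start + length]
            if len(window) < length:
                break  # ran past the end of the sentence
            if tuple(tag for _, tag in window) in PATTERNS:
                found.append(" ".join(word for word, _ in window))
    return found


sentence = [("artificial", "adj"), ("neural", "adj"), ("network", "noun")]
print(candidate_phrases(sentence))
```

Overlapping matches are all kept on purpose, so that the weighting stage can decide between a short candidate such as "network" and a longer one such as "artificial neural network".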

5.5. Classification

The proposed system tries to improve its efficiency by categorizing the document, that is, by assigning the document to one or more predefined categories and finding its similarity to other existing documents in the training set based on their contents.

The proposed system applies classical supervised machine learning for document classification. It relies on the candidate keywords extracted up to this step and assigns the current document to one of the predefined classes. This process has two benefits: the first is document classification, and the second is feeding this result back to filter the extracted keywords, which increases the accuracy of the system by discarding candidate keywords that are irrelevant to the field of the processed document, since the proposed system is domain specific. The classification method that the proposed system implements is instance-based learning. It first computes the similarity between a new document and the training documents, calculates the similarity score for each category, and then finds the category with the highest score.

The documents are classified according to equations (2) and (3), based on the probability of document membership in each class:

$$P(C_k) = \frac{\text{count of word } i \text{ in class } C_k \text{ documents}}{\text{count of words in class } C_k \text{ documents}} \qquad (2)$$

$$\text{Doc. Class} = \max_k P(C_k) \qquad (3)$$

First, the system is trained with example documents; second, it is evaluated and tested with the test documents. Algorithm (1) is the classification algorithm. The corpus we used covers general computer science, with the categories database, image processing, and AI. The training set contains 90 documents, and the system was tested on 20 documents.


Algorithm (1): Text classification
Input: candidate keywords table, Doc;
Output: document class;
Begin
  For k = 1 to 3 {number of classes = 3}
    PC = 0
    For i = 1 to n
      PC = PC + count(Wi) / count(W) {counts taken over class Ck documents}
    Next
    prob(Ck) = PC
  Next
  Class(Doc) = C1
  For k = 2 to 3
    If prob(Ck) > prob(Class(Doc)) then
      Class(Doc) = Ck
  Next
End.
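A runnable reading of Algorithm (1) is sketched below, under stated assumptions: the per-keyword ratios are summed for each class, as in the inner loop of the algorithm, and the class with the largest total is chosen. The `TRAINING` word counts, the class names as dictionary keys, and the function names are hypothetical illustration data, not the paper's corpus.

```python
from collections import Counter

# Hypothetical per-class word counts gathered from training documents.
TRAINING = {
    "database": Counter({"query": 8, "table": 6, "image": 1, "index": 5}),
    "image processing": Counter({"image": 9, "pixel": 7, "filter": 4}),
    "AI": Counter({"neural": 6, "network": 5, "learning": 9}),
}


def class_score(keywords, class_word_counts):
    """Sum count(w_i) / count(all words) over the candidate keywords for one class."""
    total = sum(class_word_counts.values())
    if total == 0:
        return 0.0
    return sum(class_word_counts[word] for word in keywords) / total


def classify(keywords, training_counts):
    """Return the best-scoring class and the score of every class."""
    scores = {
        name: class_score(keywords, counts)
        for name, counts in training_counts.items()
    }
    return max(scores, key=scores.get), scores


label, scores = classify(["neural", "network", "image"], TRAINING)
print(label, scores)
```

A `Counter` returns zero for words it has never seen, so keywords absent from a class simply contribute nothing to that class's score.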

6. Sentences Selection Features

- Sentence position in the document and in the paragraph.
- Keyphrase existence.
- Existence of indicated words.
- Sentence length.
- Sentence similarity to the document class.

6.1. Existence of Headings Words

Sentences occurring under certain headings are positively

relevant; and topic sentences tend to occur very early or

very late in a document and its paragraphs.

6.2. Existence of Indicated Words

By indicated words, we mean words whose presence signals information that helps to extract important statements. The following is a list of these kinds of information:

Purpose: information indicating whether the author's principal intent is to offer original research findings, to survey or evaluate the work performed by others, or to present a speculative or theoretical discussion.

Methods: information indicating the methods used in conducting the research. Such statements may refer to experimental procedures or mathematical techniques.

Conclusions or findings, generalizations or implications: information indicating the significance of the research and its bearing on broader technical problems or theory, such as recommendations or suggestions.

6.3. Sentence Length Cut-off feature

Short sentences tend not to be included in summaries.

Given a threshold, the feature is true for all sentences

longer than the threshold and false otherwise.
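Several of the sentence features in this section can be expressed as boolean tests and combined into a score, as in the rough sketch below. The feature names, the `min_words` threshold, the equal weights, and the rule that only the first and last sentences count as well positioned are all assumptions made for illustration; the similarity to the document class is left out.

```python
import re


def sentence_features(sentence, index, total, keyphrases, cue_words, min_words=5):
    """Compute boolean features for the sentence at position index out of total."""
    words = re.findall(r"[a-z]+", sentence.lower())
    text = " ".join(words)
    return {
        "position": index == 0 or index == total - 1,  # very early or very late
        "keyphrase": any(phrase in text for phrase in keyphrases),
        "cue": any(cue in words for cue in cue_words),
        "length": len(words) > min_words,  # length cut-off feature
    }


def score(features, weights):
    """Add up the weights of the features that are true."""
    return sum(weights[name] for name, value in features.items() if value)


WEIGHTS = {"position": 1, "keyphrase": 1, "cue": 1, "length": 1}  # hypothetical
features = sentence_features(
    "The purpose of this paper is a neural network summarizer.",
    index=0, total=10,
    keyphrases=["neural network"], cue_words=["purpose", "method"],
)
print(features, score(features, WEIGHTS))
```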

7. Post Processing

The system filters the generated summary to reduce the number of sentences and to give a more compressed summary. The system first removes redundant sentences; second, it removes any sentence whose similarity to another sentence exceeds 65%. This is necessary because authors often repeat an idea using the same or similar sentences in both the introduction and conclusion sections. The similarity value is calculated as the vector similarity between two sentences represented as vectors. That is, the more words two sentences have in common, the more similar they are. If the similarity value of two sentences is greater than a threshold, we eliminate the one whose feature-based rank is lower than that of the other. For the threshold value, we used 65% in the current implementation.
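The redundancy filter can be sketched as follows. The sketch assumes cosine similarity over word-count vectors, which is one common reading of "vector similarity" but is not confirmed by the text; the function names and sample sentences are hypothetical, and only the 0.65 threshold comes from the description above.

```python
import math
from collections import Counter


def cosine(a, b):
    """Cosine similarity between the word-count vectors of two sentences."""
    va, vb = Counter(a.lower().split()), Counter(b.lower().split())
    dot = sum(va[word] * vb[word] for word in va)
    norm = math.sqrt(sum(v * v for v in va.values())) * math.sqrt(
        sum(v * v for v in vb.values())
    )
    return dot / norm if norm else 0.0


def filter_similar(ranked, threshold=0.65):
    """Keep the higher-ranked sentence of any pair more similar than threshold.

    ranked is a list of (sentence, rank_score) pairs.
    """
    kept = []
    for sentence, rank in sorted(ranked, key=lambda pair: pair[1], reverse=True):
        if all(cosine(sentence, other) <= threshold for other, _ in kept):
            kept.append((sentence, rank))
    return kept


summary = [
    ("the system extracts keywords from text", 0.9),
    ("the system extracts keywords from the text", 0.7),
    ("results are evaluated with precision", 0.5),
]
print(filter_similar(summary))
```

Visiting sentences in descending rank order means that when two sentences are near duplicates, the lower-ranked one is always the one discarded.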

The system implements a filter that replaces an incomplete keyphrase with a complete one selected from the input text, in a suitable form such as a scientific term. This filter depends on the KFIDF measurement given in equation (4) [1]. The filter selects the term that is more common and used in scientific language (e.g. neural → neural network, neural network → artificial neural network). The KFIDF is computed for each keyword; a keyword that is more common and frequently used in the class gains a higher KFIDF value. Returning to the example above, if the candidate keyword is "neural", the filter finds phrases within the system near the word "neural" and selects the one with the highest KFIDF value. For example, if the keyword is "neural" and the phrases found are "neural network" and "neural weights", then by applying the KFIDF measurement the first phrase will have the greater value, depending on the trained documents existing in the class.

$$\mathrm{KFIDF}(w, cat) = docs(w, cat) \times \log\left(\frac{n \times |cats|}{cats(word)} + 1\right) \qquad (4)$$

docs(w, cat) = number of documents in the category cat containing the word w

n = smoothing factor

|cats| = total number of categories

cats(word) = the number of categories in which the word occurs
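The KFIDF filter can be illustrated with a few lines of code, assuming the definition from [1], docs(w, cat) × log(n × |cats| / cats(word) + 1). The document counts below are invented for the "neural network" versus "neural weights" example, the natural logarithm is an assumption, and the parameter names are hypothetical.

```python
import math


def kfidf(docs_w_cat, n_categories, cats_with_word, n=1.0):
    """KFIDF(w, cat) = docs(w, cat) * log(n * |cats| / cats(word) + 1)."""
    return docs_w_cat * math.log(n * n_categories / cats_with_word + 1)


# Hypothetical counts for two phrases found near the keyword "neural" in class AI.
candidates = {
    "neural network": kfidf(docs_w_cat=12, n_categories=3, cats_with_word=1),
    "neural weights": kfidf(docs_w_cat=3, n_categories=3, cats_with_word=1),
}
best = max(candidates, key=candidates.get)
print(best, candidates)
```

With both phrases confined to one category, the phrase that appears in more training documents of that category receives the larger value and replaces the incomplete keyword.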

8. Measurements of Evaluation

The abstract written by the author is used as the standard against which the algorithm-derived abstract is compared. The results are evaluated using Precision and Recall measurements.

Precision (P) and Recall (R) are the standard metrics for retrieval effectiveness in information retrieval. They are calculated as follows:

$$P = \frac{tp}{tp + fp} \qquad (5)$$

$$R = \frac{tp}{tp + fn} \qquad (6)$$

where tp = sentences in the algorithm-derived list also found in the author list; fp = sentences in the algorithm-derived list not found in the author list; fn = sentences in the author list not found in the algorithm-derived list. They stand for true positive, false positive, and false negative, respectively. In other words, in the case of sentence extraction, the proportion of automatically selected sentences that are also manually assigned sentences is called precision. Recall is the proportion of manually assigned sentences found by the automatic method [11, 12].
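Precision and recall for sentence extraction can be computed from two sets of sentence identifiers, as in this small sketch. The function name and the sample identifiers are hypothetical; the zero returned for an empty list is a convention chosen here to avoid division by zero.

```python
def precision_recall(automatic, manual):
    """Return (precision, recall) for automatically vs. manually selected sentences."""
    automatic, manual = set(automatic), set(manual)
    tp = len(automatic & manual)   # selected by both
    fp = len(automatic - manual)   # selected only by the algorithm
    fn = len(manual - automatic)   # selected only by the author
    precision = tp / (tp + fp) if automatic else 0.0
    recall = tp / (tp + fn) if manual else 0.0
    return precision, recall


# Four sentences chosen automatically, three chosen manually, three in common.
print(precision_recall(automatic=[1, 2, 3, 4], manual=[2, 3, 4]))
```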

9. Results

Documents were selected from the test set, and the sentences selected for the summary are presented in Table 2 below:

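Table 2. Experiment results.

| Text # | Automatic selected | Manual selected | Matched | Precision |
|---|---|---|---|---|
| 1 | 4 | 3 | 3 | 75% |
| 2 | 9 | 8 | 6 | 67% |
| 3 | 8 | 8 | 6 | 75% |
| 4 | 10 | 8 | 6 | 60% |
| 5 | 10 | 10 | 9 | 90% |
| 6 | 14 | 10 | 9 | 64% |
| 7 | 13 | 12 | 10 | 77% |
| 8 | 24 | 22 | 19 | 79% |
| 9 | 30 | 26 | 22 | 73% |
| 10 | 31 | 21 | 13 | 42% |
| Overall Precision | | | | 70% |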

10. Conclusion

The work presented here depends on the keyphrases extracted by the system and on many other features extracted from the document to produce the text summary. This gives the advantage of finding the most relevant sentences to add to the summary text. The system gave good results in comparison with manual summary extraction. The system can give a highly compressed summary of high quality. The main applications of this work are Web search engines, text compression, and word processors.

References

[1] Feiyu Xu, et al.; A Domain Adaptive Approach to Automatic Acquisition of Domain Relevant Terms and their Relations with Bootstrapping; In Proceedings of the 3rd International Conference on Language Resources and Evaluation (LREC'02), May 29-31, Las Palmas, Canary Islands, Spain, 2002.

[2] Hongyan Jing; Sentence Reduction for Automatic Text Summarization; Proceedings of the Sixth Conference on Applied Natural Language Processing, Seattle, Washington, pp. 310-315, 2000.

[3] Kai Ishikawa, et al.; Trainable Automatic Text Summarization Using Segmentation of Sentence; Multimedia Research Laboratories, NEC Corporation, 4-1-1 Miyazaki, Miyamae-ku, Kawasaki-shi, Kanagawa 216-8555, 2003.

[4] M. Hearst; Untangling Text Data Mining; In Proceedings of ACL'99: the 37th Annual Meeting of the Association for Computational Linguistics, 1999.

[5] M. F. Porter; An Algorithm for Suffix Stripping; originally published in Program, 14, no. 3, pp. 130-137, July 1980.

[6] Massih Amini; Two Learning Models for Text Summarization; Canada, Colloquium Series, 2007-2008 Series.

[7] Nguyen Minh Le, et al.; A Sentence Reduction Using Syntax Control; Proceedings of the Sixth International Workshop on Information Retrieval with Asian Languages, Volume 11, 2003.

[8] Otterbacher, et al.; Revisions that Improve Cohesion in Multi-document Summaries; In ACL Workshop on Text Summarization, Philadelphia, 2002.

[9] Sholom M. Weiss, Nitin Indurkhya, Tong Zhang, Fred J. Damerau; Text Mining: Predictive Methods for Analyzing Unstructured Information; Springer, USA, 2005.

[10] Susanne Viestan; Three Methods for Keyword Extraction; MSc., Department of Linguistics, Uppsala University, 2000.

[11] U. Y. Nahm and R. J. Mooney; Text Mining with Information Extraction; In AAAI 2002 Spring Symposium on Mining Answers from Texts and Knowledge Bases, 2002.

[12] William B. Cavnar and John M. Trenkle; N-Gram-Based Text Categorization; In Proc. of 3rd Annual Symposium on Document Analysis and Information Retrieval, pp. 161-169, 1994.

Rafeeq Al-Hashemi obtained his Ph.D.

in Computer Science from the

University of Technology. He is

currently a professor of Computer

Science at Al-Hussein Bin Talal

University in Jordan. His research

interests include Text mining, Data

mining, and image compression. Dr.

Rafeeq is also the Chairman of Computer Science in the

University.
