NetWorker Cloud Enablement - SRG

Welcome to NetWorker Cloud Enablement.

Copyright © 2017 Dell Inc. or its subsidiaries. All Rights Reserved. Dell, EMC, and other trademarks are trademarks

of Dell Inc. or its subsidiaries. Other trademarks may be the property of their respective owners. Published in the

USA.

THE INFORMATION IN THIS PUBLICATION IS PROVIDED “AS IS.” DELL EMC MAKES NO REPRESENTATIONS OR WARRANTIES OF ANY KIND WITH RESPECT TO

THE INFORMATION IN THIS PUBLICATION, AND SPECIFICALLY DISCLAIMS IMPLIED WARRANTIES OF MERCHANTABILITY OR FITNESS FOR A PARTICULAR

PURPOSE.

Use, copying, and distribution of any DELL EMC software described in this publication requires an applicable software license. The trademarks, logos, and service marks

(collectively "Trademarks") appearing in this publication are the property of DELL EMC Corporation and other parties. Nothing contained in this publication should be construed

as granting any license or right to use any Trademark without the prior written permission of the party that owns the Trademark.

AccessAnywhere Access Logix, AdvantEdge, AlphaStor, AppSync ApplicationXtender, ArchiveXtender, Atmos, Authentica, Authentic Problems, Automated Resource Manager,

AutoStart, AutoSwap, AVALONidm, Avamar, Aveksa, Bus-Tech, Captiva, Catalog Solution, C-Clip, Celerra, Celerra Replicator, Centera, CenterStage, CentraStar, EMC

CertTracker. CIO Connect, ClaimPack, ClaimsEditor, Claralert ,CLARiiON, ClientPak, CloudArray, Codebook Correlation Technology, Common Information Model, Compuset,

Compute Anywhere, Configuration Intelligence, Configuresoft, Connectrix, Constellation Computing, CoprHD, EMC ControlCenter, CopyCross, CopyPoint, CX, DataBridge ,

Data Protection Suite. Data Protection Advisor, DBClassify, DD Boost, Dantz, DatabaseXtender, Data Domain, Direct Matrix Architecture, DiskXtender, DiskXtender 2000, DLS

ECO, Document Sciences, Documentum, DR Anywhere, DSSD, ECS, elnput, E-Lab, Elastic Cloud Storage, EmailXaminer, EmailXtender , EMC Centera, EMC ControlCenter,

EMC LifeLine, EMCTV, Enginuity, EPFM. eRoom, Event Explorer, FAST, FarPoint, FirstPass, FLARE, FormWare, Geosynchrony, Global File Virtualization, Graphic

Visualization, Greenplum, HighRoad, HomeBase, Illuminator , InfoArchive, InfoMover, Infoscape, Infra, InputAccel, InputAccel Express, Invista, Ionix, Isilon, ISIS,Kazeon, EMC

LifeLine, Mainframe Appliance for Storage, Mainframe Data Library, Max Retriever, MCx, MediaStor , Metro, MetroPoint, MirrorView, Mozy, Multi-Band Deduplication,

Navisphere, Netstorage, NetWitness, NetWorker, EMC OnCourse, OnRack, OpenScale, Petrocloud, PixTools, Powerlink, PowerPath, PowerSnap, ProSphere,

ProtectEverywhere, ProtectPoint, EMC Proven, EMC Proven Professional, QuickScan, RAPIDPath, EMC RecoverPoint, Rainfinity, RepliCare, RepliStor, ResourcePak,

Retrospect, RSA, the RSA logo, SafeLine, SAN Advisor, SAN Copy, SAN Manager, ScaleIO Smarts, Silver Trail, EMC Snap, SnapImage, SnapSure, SnapView, SourceOne,

SRDF, EMC Storage Administrator, StorageScope, SupportMate, SymmAPI, SymmEnabler, Symmetrix, Symmetrix DMX, Symmetrix VMAX, TimeFinder, TwinStrata, UltraFlex,

UltraPoint, UltraScale, Unisphere, Universal Data Consistency, Vblock, VCE. Velocity, Viewlets, ViPR, Virtual Matrix, Virtual Matrix Architecture, Virtual Provisioning, Virtualize

Everything, Compromise Nothing, Virtuent, VMAX, VMAXe, VNX, VNXe, Voyence, VPLEX, VSAM-Assist, VSAM I/O PLUS, VSET, VSPEX, Watch4net, WebXtender, xPression,

xPresso, Xtrem, XtremCache, XtremSF, XtremSW, XtremIO, YottaYotta, Zero-Friction Enterprise Storage.

Revision Date: 03/2017

Revision Number: MR_1WN-NWCLD.9.1.0

Copyright © 2017 Dell Inc.. NetWorker Cloud Enablement 1

This course covers Dell EMC NetWorker and the ability to enable backups to the cloud.

This module focuses on the EMC CloudBoost appliance and how it integrates with NetWorker for backup

and recovery.

This lesson covers a CloudBoost overview, the components of CloudBoost, and the supported cloud

platforms.

The CloudBoost appliance is a platform that delivers cloud-based extensibility for current Dell EMC data

protection products and third-party data protection solutions. CloudBoost is not a standalone product. It

enables new EMC storage products to utilize both private and public cloud storage solutions securely and

efficiently. CloudBoost is available as either a physical appliance or a VMware virtual appliance. It is

managed and configured via the Internet utilizing the Cloud Portal interface.

The Cloud Portal is a Web-based interface to configure and manage the CloudBoost appliance. A newly

installed CloudBoost appliance is registered in the Cloud Portal where additional configuration steps are

taken such as enabling site cache and choosing a Cloud Profile.

The Cloud Profile is unique to each cloud storage provider. The provider requires specific authentication

content such as an access URL and secret access key which must be gathered directly from the provider.

The CloudBoost appliance may be monitored from the Cloud Portal. This is where CloudBoost alerts,

configuration settings, software versions, and storage use history can be viewed.

There are several different private and public cloud object stores that the CloudBoost appliance supports.

The supported private clouds are EMC ATMOS, EMC Elastic Cloud Storage (ECS), and Generic

OpenStack Swift.

The supported public clouds are Amazon Web Services (AWS), AT&T Synaptic Storage, Google Cloud

Storage, Virtustream Storage Cloud, and Microsoft Azure Storage.

A single cloud provider can have multiple CloudBoost appliances accessing it, but a CloudBoost appliance

may only have a single cloud profile configured for one cloud provider.

Site cache is an optional, firewall-friendly, large persistent data cache for the objects most recently written

to, or read from, the cloud. It allows backups to complete quickly over the LAN while trickling data more

slowly to the cloud over the WAN.

Site cache should be deployed when the following conditions are met:

The cloud object store is not LAN accessible.

The connectivity to the cloud object store has low bandwidth and high latency.

There are no streaming workloads or continuous backups running.
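
The three conditions above can be read as a simple all-must-hold check. The following is a minimal sketch, not part of any Dell EMC tool; the `SiteLink` class and its field names are hypothetical and exist only to illustrate the decision.

```python
from dataclasses import dataclass


@dataclass
class SiteLink:
    """Hypothetical description of a site's path to its cloud object store."""
    object_store_on_lan: bool
    low_bandwidth_high_latency: bool
    streaming_or_continuous_backups: bool


def should_deploy_site_cache(link: SiteLink) -> bool:
    """Return True only when all three conditions from the text are met."""
    return (
        not link.object_store_on_lan
        and link.low_bandwidth_high_latency
        and not link.streaming_or_continuous_backups
    )


# A remote office reaching a public cloud over a slow WAN, with no streaming workloads.
remote_office = SiteLink(
    object_store_on_lan=False,
    low_bandwidth_high_latency=True,
    streaming_or_continuous_backups=False,
)
print(should_deploy_site_cache(remote_office))
```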

The CloudBoost appliance acts as an intermediate agent for data being backed up to the cloud. Backup

solutions, such as NetWorker or Avamar, are configured to use CloudBoost as the storage target for data.

Once the data is written to CloudBoost it will be moved by the CloudBoost appliance from its local storage

to the targeted cloud storage. There are two scenarios for the data being backed up by CloudBoost.

First, CloudBoost is used to provide cloud storage for backup data.

Second, CloudBoost is used to provide cloud storage for a secondary offsite copy of backup data while the

primary copy of the backup data is stored onsite.

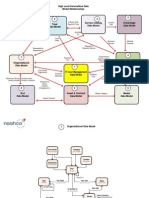

In a NetWorker deployment with a CloudBoost appliance, CloudBoost is added as a storage node and as

a CloudBoost device to the NetWorker server. NetWorker clients can fetch their configurations from the

NetWorker server and can back up to the cloud directly or via an external storage node. A CloudBoost

agent library is available as part of the NetWorker client as well as the storage node software. There are

three different paths backup data may take to reach the cloud target from the client.

Client Direct transfers data to the cloud object store directly. The CloudBoost agent library is installed on

the client. This is the optimal data path, but is limited to x64 Linux only.

An external storage node is used for clients that do not support Client Direct. The CloudBoost agent library is part

of the storage node installation.

The CloudBoost appliance also contains an embedded storage node. However, this is the least preferred

method.

The CloudBoost library will convert the data into objects and store it on the cloud object store configured

as a target. The metadata for these cloud objects is recorded on the CloudBoost appliance in the

metadata database.
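
The order of preference among the three data paths can be sketched as a small selection function. This is an illustration only: the function name, the path labels, and the treatment of `x86_64` as a synonym for x64 are assumptions, not NetWorker settings.

```python
def choose_data_path(os_name: str, arch: str, has_external_storage_node: bool) -> str:
    """Pick the most preferred backup data path available to a client.

    Preference order follows the text: Client Direct first (x64 Linux only),
    then an external storage node, then the appliance's embedded storage node.
    """
    is_x64_linux = os_name.lower() == "linux" and arch.lower() in ("x64", "x86_64")
    if is_x64_linux:
        return "client-direct"
    if has_external_storage_node:
        return "external-storage-node"
    return "embedded-storage-node"


print(choose_data_path("Linux", "x64", has_external_storage_node=False))
print(choose_data_path("Windows", "x64", has_external_storage_node=True))
print(choose_data_path("Windows", "x64", has_external_storage_node=False))
```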

The NetWorker server maintains the responsibility of sending backup requests to the clients and

monitoring the job status. It receives file level data from the clients and maintains data location information

sent from the storage nodes. The communication to and from the NetWorker server is over RPC. The

CloudBoost agent library, which is embedded in the client and storage node software, registers the check key

and address of each data chunk with the CloudBoost appliance. For protection, the cloud storage keeps the

configuration and metadata from both the NetWorker server and the CloudBoost appliance, which can

optionally be encrypted. Communication among the CloudBoost libraries, appliance, and cloud storage is

over HTTPS.

This lesson covers three different solutions for NetWorker backups with a CloudBoost appliance.

The first solution option is to back up applications and data workloads in an existing on-premises

infrastructure and use cloud object storage for long term retention. In some instances, cloud storage could

replace tape for offsite compliance and disaster recovery.

CloudBoost’s optional site cache would eliminate the impact of long distance connectivity where high

latency, low bandwidth and network reliability may be an issue.

The second solution option is to back up all applications and data workloads. This includes both short term

backups necessary for operational recovery and long term backups for compliance.

As with the replication solution, CloudBoost’s optional site cache would assist sites where WAN latency is

a problem as well as improve recovery time objectives.

The third solution option is designed for situations where no on-premises infrastructure is available and

workloads are running entirely in the cloud. NetWorker Virtual Edition would be deployed in the cloud

environment along with the CloudBoost virtual appliance. Cloud protected storage could be used for short

term and long term backups.

Unlike the previous solutions, site cache is not available when the CloudBoost appliance is deployed

within the cloud.

This lesson covers how to present CloudBoost storage, include CloudBoost in NetWorker workflows, and

recover CloudBoost data in NMC.

To back up using CloudBoost, a CloudBoost device must be created in NetWorker for an appliance

that has already been deployed and configured in the Cloud Portal with an FQDN, cloud profile, and site

cache option specified.

First, connect to the CloudBoost appliance directly to enable the ‘remotebackup’ user account and specify

a password.

Log in to the appliance with the ‘admin’ account.

Type the command ‘remote-mount-password enable password’ where password is a new

password for the ‘remotebackup’ user account.

Next, configure a device using the New Device Wizard in NetWorker. The CloudBoost appliance will show

under Devices > CloudBoost Appliances as well as Devices > Storage Nodes when the wizard is

finished. However, the storage node to select is dependent on the types of clients to be backed up. Linux

clients do not require a storage node. Enable NetWorker’s Client Direct option to bypass the storage node.

When backing up Windows clients, configure a Linux server as an external storage node to offload

resource intensive activities from the CloudBoost appliance.

Log in to the NMC GUI as an administrator and under the Devices window launch the Device Configuration

Wizard.

Choose the CloudBoost device type and review the preconfiguration checklist.

Choose the CloudBoost storage option: either embedded or external.

Choose to use an existing CloudBoost appliance or create a new one.

Enter the FQDN of the CloudBoost appliance.

Enter the ‘remotebackup’ user and password specified earlier.

Choose a configuration method to select the CloudBoost file system folder to serve as a target device.

Browse and Select is recommended.

Create a new folder under the /mnt/magfs/base directory to serve as the target data device.
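
The last step requires the device folder to sit beneath `/mnt/magfs/base`. A minimal sketch of that path rule follows; the function name and the example folder name `nw_device01` are hypothetical, and the check only inspects the path string without contacting an appliance.

```python
from pathlib import PurePosixPath

# Base directory named in the wizard steps above.
BASE = PurePosixPath("/mnt/magfs/base")


def is_valid_device_folder(path: str) -> bool:
    """Return True when `path` is a folder strictly below /mnt/magfs/base."""
    candidate = PurePosixPath(path)
    if ".." in candidate.parts:
        # Reject paths that could climb back out of the base directory.
        return False
    return BASE in candidate.parents


print(is_valid_device_folder("/mnt/magfs/base/nw_device01"))
print(is_valid_device_folder("/mnt/magfs/base"))
```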

Next, configure the media pool for backups or backup clones.

Enable the option to configure media pools for devices and ensure the device which was just created is

selected.

Choose the pool type: Backup or Backup Clone.

Choose either a new pool or an existing pool. Be aware that the pool must contain only CloudBoost devices.

Review the configuration settings and verify the configuration was successful. The CloudBoost pool may

now be selected as part of a workflow for direct backups and clones.
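
The pool rule above (only CloudBoost devices in the pool) can be expressed as a one-line check. This is a sketch under assumptions: the function name and the device-type label strings are illustrative, not values returned by NetWorker.

```python
from typing import Iterable


def pool_contains_only(device_types: Iterable[str], required_type: str = "CloudBoost") -> bool:
    """Return True when the pool is non-empty and every device has the required type."""
    types = list(device_types)
    return len(types) > 0 and all(t == required_type for t in types)


print(pool_contains_only(["CloudBoost", "CloudBoost"]))
print(pool_contains_only(["CloudBoost", "Data Domain"]))
```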

To offload backup activities from the CloudBoost appliance, configure an external Linux storage node.

Install the NetWorker storage node, client, and extended client software on a Linux host.

During the creation of the CloudBoost device enter the external storage node in the CloudBoost Storage

configuration option, instead of the default embedded storage.

In the monitoring tab of the NMC, start the workflow containing the policy that was created for the

CloudBoost device. To monitor the upload of backup data to the cloud storage target, open the Cloud

Portal interface and select the CloudBoost appliance and then the Overview tab. The storage use history

is at the bottom of the page.

Restoring data from the cloud can be achieved by running the recovery configuration wizard from the

NetWorker Management Console. The recovery can be configured to restore the data to the original path,

a new destination path, or a new destination host.

To specify a recovery from CloudBoost, create a new recovery configuration and select the saveset

Recover tab and then Query to view the instances available from CloudBoost. Configure the desired

recovery file path, and save the recovery configuration for reuse.

Recovery can be verified by viewing the contents of the recovery destination as well as the recover logs

found on the NetWorker server at \nsr\logs\recover.

To review these topics further, please refer to this list of supporting documents found on the Dell EMC

support site.

This module covered the CloudBoost appliance, the types of situations available for cloud backups, and

the integration of CloudBoost with NetWorker.

This module focuses on the role Cloud Tier plays in backup and recovery and how to integrate Cloud Tier

with NetWorker.

This lesson covers Data Domain Cloud Tier, supported platforms, components of Cloud Tier, and the

difference between CloudBoost and Cloud Tier.

The Data Domain Cloud Tier enables the movement of data from the active tier of a Data Domain system

to low-cost, high-capacity object storage in the public, private, or hybrid cloud for long term data retention.

Only unique, deduplicated data is sent from the Data Domain system to the cloud or retrieved from the

cloud. This ensures that the data being sent to the cloud occupies as little space as possible.

Cloud tiering provides a scalable solution for data storage. With the Data Domain Cloud Tier, users can

store up to two times the maximum active tier capacity in the cloud for long term retention of data. With

cloud tiering policies, data is in the right place at the right time. Data is moved to the cloud tier

automatically based on schedules using policies established by the age of the data.

When data is moved from the active to the cloud tier, it is deduplicated and stored in object storage in the

native Data Domain deduplicated format. This results in a lower total cost of ownership over time for long

term, cloud storage. The cloud tier supports encryption of data at rest by default and the Data Domain

retention lock feature, ensuring the ability to satisfy regulatory and compliance policies.

The Data Domain Cloud Tier is managed by a single Data Domain namespace. There is no separate

cloud gateway or virtual appliance required. Data movement is supported by the native Data Domain

policy management framework.

With DD OS 6.0, supported cloud storage includes Dell EMC Elastic Cloud Storage, Virtustream, Amazon

Web Services, and Microsoft Azure. Additional storage for metadata is required to support the cloud tier.

Metadata is used by deduplication, cleaning, and replication operations.

The Data Domain Cloud Tier is supported on physical Data Domain systems with expanded memory

configurations. Data Domain Cloud Tier can be used with DDVE 3.0 in 16, 64, and 96 TB options.

To support the Cloud Tier, additional metadata storage is required. The amount of required metadata

storage is based on the Data Domain platform.

A Data Domain system can run either the Cloud Tier or Extended Retention but not both on the same

system.

The Cloud Tier is also supported in an HA configuration. Both nodes must be running DD OS 6.0 or higher

and they must be HA-enabled.

With DD OS 6.0, up to two cloud units are supported on each Data Domain system. Each cloud unit has

the maximum capacity of the system’s active tier. The active tier does not have to be at maximum capacity

to scale the cloud tier to maximum capacity. Each cloud unit maps to one cloud provider, and the two units

can use different providers. Metadata shelves store metadata for both cloud units. The number of metadata

shelves needed depends on the cloud unit physical capacity.

This example shows a DD9500 system with an active tier of 864 TB and two cloud units. Each cloud unit

has a capacity equal to that of the active tier, for a combined maximum cloud tier capacity of about 1.7 PB. Data

stored on the active tier provides local access to data and can be used for operational recoveries. The

cloud tier provides long term retention for data stored in the cloud.
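
The DD9500 figures above work out as follows: two cloud units of 864 TB each give 1,728 TB, or about 1.7 PB, of cloud tier capacity. The sketch below reproduces that arithmetic; the function name is hypothetical and decimal units (1 PB = 1,000 TB) are assumed.

```python
MAX_CLOUD_UNITS = 2  # limit stated for DD OS 6.0 in the text


def max_cloud_tier_tb(active_tier_tb: float, cloud_units: int = MAX_CLOUD_UNITS) -> float:
    """Each cloud unit can hold up to the active tier's maximum capacity."""
    if not 0 <= cloud_units <= MAX_CLOUD_UNITS:
        raise ValueError("DD OS 6.0 supports at most two cloud units per system")
    return active_tier_tb * cloud_units


cloud_tb = max_cloud_tier_tb(864)
print(f"{cloud_tb:.0f} TB, about {cloud_tb / 1000:.1f} PB")
```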

In a Cloud Tier environment with NetWorker, the NetWorker server maintains the responsibility of sending

backup requests to the clients and monitoring the job status. A Data Domain device and a Cloud Tier

device must be created in NMC. Backups are sent to the Data Domain system and an application based

policy clones the data from block storage to object storage in the Data Domain’s MTree or storage unit.

The backup data can then be pushed to the cloud provider based on an age policy controlled by the Data

Domain system. The workflow within NMC contains both a backup action and a clone action to the

corresponding media pools.

After seeing both CloudBoost and Cloud Tier overviews it is important to discuss how they are different.

Both solutions push data to cloud object storage. However, the reason for deploying each solution

depends on the use case.

In CloudBoost 2.1, the client backup data is pushed directly to cloud object storage. It does not have to be

backed up to block storage first. The backup application, such as NetWorker, uses CloudBoost to enable

the connection between the client and the cloud provider as well as perform deduplication and encryption.

Both primary copies (backup to the cloud) and secondary copies (replicate to the cloud) of backup data

are pushed off site to a cloud provider directly.

In DD OS 6.0, client backup data is saved to the Data Domain’s active tier and the policy manager clones

the save sets to the Cloud Tier. Once it has reached a certain age the Data Domain age-based policy

pushes the data to cloud object storage. This method provides block storage for new backup data and

cloud object storage for long term retention. Due to its age, only infrequently used backup data is stored

with the cloud provider, meaning it is less likely to need recovery yet still necessary to fulfill compliance

requirements.

This lesson covers how to utilize Cloud Tier in NetWorker as well as steps to recover Cloud Tier data.

A Data Domain system uses data movement policies to move data. During NetWorker device creation,

NetWorker creates an app-based policy for the Data Domain storage unit, or MTree. There is one app-

based policy per MTree which associates the Data Domain storage unit with the cloud unit. The app-

based policy is managed by NetWorker as a clone policy action in the workflow where data is marked as

eligible for movement to the cloud and cloned to the Cloud Tier media pool.

Two different device types must be created in NetWorker: Data Domain and DD Cloud Tier. These

devices require that DD Boost and Cloud Tier be licensed and enabled on the Data Domain system. The two

types of devices must also be created within the same Data Domain MTree. A message about the app-

based policy creation will be displayed prior to reviewing the configuration settings.

Two media pools will be required as well. The Data Domain device pool type must be ‘Backup’ and the

Cloud Tier device pool type must be ‘Clone’.

The NetWorker storage node chosen to manage the devices must be running the same version as the

NetWorker server software, version 9.1.

Finally, a Data Domain system configured for Cloud Tier contains a CA certificate to communicate with

the cloud provider. The Cloud Tier device configuration will offer to pull, or import, the CA into NetWorker.

The certificate must be trusted and the name of the cloud unit created on the Data Domain system needs

to be specified in NetWorker.
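
The device and pool requirements above lend themselves to a pre-flight check. The following is a minimal sketch; the `Device` class, its fields, and the example names are hypothetical and do not correspond to NetWorker resource attributes.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Device:
    """Hypothetical summary of a NetWorker device and the pool it belongs to."""
    name: str
    device_type: str  # "Data Domain" or "DD Cloud Tier"
    mtree: str
    pool_type: str    # "Backup" or "Clone"


def check_cloud_tier_pair(dd: Device, cloud_tier: Device) -> List[str]:
    """Return a list of rule violations; an empty list means the pair looks valid."""
    problems = []
    if dd.device_type != "Data Domain":
        problems.append(f"{dd.name}: expected a Data Domain device")
    if cloud_tier.device_type != "DD Cloud Tier":
        problems.append(f"{cloud_tier.name}: expected a DD Cloud Tier device")
    if dd.mtree != cloud_tier.mtree:
        problems.append("both devices must be in the same Data Domain MTree")
    if dd.pool_type != "Backup":
        problems.append(f"{dd.name}: pool type must be Backup")
    if cloud_tier.pool_type != "Clone":
        problems.append(f"{cloud_tier.name}: pool type must be Clone")
    return problems


dd_dev = Device("dd_dev01", "Data Domain", "/data/col1/nw_su", "Backup")
ct_dev = Device("ct_dev01", "DD Cloud Tier", "/data/col1/nw_su", "Clone")
print(check_cloud_tier_pair(dd_dev, ct_dev))
```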

Direct backups to the cloud provider or Cloud Tier are not permitted. Only cloned copies of the NetWorker

save sets go to the Cloud Tier. The backup policy action in NetWorker copies the backup data to Data

Domain devices locally first.

The clone policy action in the NetWorker protection workflow performs the data movement from the Data

Domain devices to the Cloud Tier devices. The clone action performs a copy so the backup data still

remains on the Data Domain devices. The option to delete source save sets after the clone operation

completes is available. This is equivalent to staging. However, a NetWorker staging policy can also be

configured for the Data Domain devices and Cloud Tier destination pool based on high and low water

marks. Once the cloned or staged save sets are in the Cloud Tier, movement to the cloud object storage is

performed based on the Data Domain age-based policy.

A Data Domain age-based data movement policy is created by the Data Domain administrator. Its

schedule determines how often data is moved from the Cloud Tier to the cloud provider. A

schedule can be created to perform movement daily, weekly, or monthly in either the Data Domain

System Manager or Data Domain CLI. A manual start of the data movement is also possible.
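
The idea behind an age-based policy can be sketched as a simple date comparison: on each scheduled run, data older than a threshold becomes eligible for movement. The function name and the 14-day threshold below are assumptions for illustration; the actual threshold is whatever the Data Domain administrator configures.

```python
from datetime import datetime, timedelta


def eligible_for_movement(last_modified: datetime, now: datetime, age_threshold_days: int) -> bool:
    """Return True when the data is at least `age_threshold_days` old."""
    return now - last_modified >= timedelta(days=age_threshold_days)


run_time = datetime(2017, 3, 31)
print(eligible_for_movement(datetime(2017, 3, 1), run_time, age_threshold_days=14))
print(eligible_for_movement(datetime(2017, 3, 25), run_time, age_threshold_days=14))
```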

The data recovery procedure for data saved to the cloud provider or Cloud Tier is the same as for non-cloud

object storage. The Cloud Tier device and a Data Domain device must be present in the same MTree. The

data recovery process clones the data from the Cloud Tier to a Data Domain device and then recovers the

data from the Data Domain device. The clone data is removed from the Data Domain device after seven

days. The savesets are recalled from the cloud provider if they are not found locally on either tier of the

Data Domain.

Many types of recoveries are supported. For example, disaster recoveries, block based backups, file level

restores of block based backups, VMware block based backups, and VMware image level recoveries.

However, VMware file level restores from the Cloud Tier are not supported. Instead, clone the data to a

Data Domain device first.
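
Two rules from this recovery description are easy to capture in code: the recalled clone stays on the Data Domain device for seven days, and VMware file level restores need a manual clone first. The sketch below assumes hypothetical function names and recovery-type labels.

```python
from datetime import datetime, timedelta

# Retention of the recalled clone on the Data Domain device, as stated in the text.
RECALLED_CLONE_RETENTION = timedelta(days=7)


def recalled_clone_removal_time(recalled_at: datetime) -> datetime:
    """Return when the clone recalled for recovery is removed from the Data Domain device."""
    return recalled_at + RECALLED_CLONE_RETENTION


def needs_manual_clone_first(recovery_type: str) -> bool:
    """VMware file level restores cannot run directly from the Cloud Tier."""
    return recovery_type == "vmware-file-level"


print(recalled_clone_removal_time(datetime(2017, 3, 1, 9, 0)))
print(needs_manual_clone_first("vmware-file-level"))
print(needs_manual_clone_first("vmware-image-level"))
```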

This lesson covers the integration of NetWorker and Cloud Tier.

Before starting the integration of Cloud Tier in NetWorker, ensure the following Data Domain steps have

been performed in either Data Domain System Manager or Data Domain CLI. The Data Domain Cloud

Tier Implementation and Management course (MR-1WP-DDCTIM) covers these topics in detail.

Start the integration of Cloud Tier by creating a Data Domain device. This can be for a new system or an

existing system. Plan to include this device in the same MTree or storage unit as the Cloud Tier device

which will be created later. The DD Boost credentials must be specified next. Continue with the

configuration wizard to create a folder for the device. Then, configure a media pool of type Backup. Be

sure to leave the option to Label and Mount device after creation enabled. Finalize the configuration

wizard and ensure a Data Domain system, Data Domain device, and a mounted volume are present in

NMC.

The next step is to create the DD Cloud Tier device. Once again, ensure the Cloud Tier is licensed,

enabled, and devices have been added to the Cloud Tier on the Data Domain system. Select the Data

Domain system and enter the DD Boost credentials. Select the option to use Secure Multi-Tenancy to

use only DD Boost devices in secure storage units. The storage unit is then restricted to one owner

according to the DD Boost credentials. Dell EMC recommends using Browse and Select to choose a

folder for the NetWorker devices.

Create a new folder for the Cloud Tier device and select it. Configure a clone media pool for the Cloud Tier

device. The pool must contain only Cloud Tier devices. Create a new pool or select an existing one.

Finally, specify the Data Domain Management Parameters. Enter the Data Domain system host name

and admin credentials. The default communication port is 3009. Pull the CA Certificate from the Data

Domain used to communicate with the cloud provider. Then, enter the name of the Cloud Unit specified on

the Data Domain system. A message regarding the app-based policy appears. This ensures the clone

action can perform the data movement from the Data Domain system’s Active Tier to the Cloud Tier.

Finalize the configuration wizard and ensure a DD Cloud Tier device and a mounted volume are present.

The app-based policy ensures that NetWorker’s clone action can issue the data movement policy to the

Data Domain system. Use the Policy Action Wizard to configure the clone movement. The source

storage node contains the save set data to clone. The destination storage node is where to store

the cloned save sets. Both storage nodes must belong to the same Data Domain MTree.

Select the media pool which contains the DD Cloud Tier devices. The retention time determines when to

mark the save sets as recyclable during the expiration server maintenance task.

The Delete source savesets after clone completes option is equivalent to staging. Data is moved to the

destination volume and deleted from the source volume.

NetWorker Staging to DD Cloud Tier devices is also supported. However, staging from Cloud Tier devices

is not supported. As with cloning, the Data Domain device and Cloud Tier device must reside in the same

MTree. Create the staging policy by choosing the source devices and the destination Cloud Tier pool.

The configuration group box specifies the criteria for the staging policy to start. For example, the high

water mark signals when to perform the operation based on the amount of used disk space on the file

system partition on the source device. The low water mark signals when the save sets stop moving from

the source device.
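
The interaction of the two water marks can be sketched as a small simulation: staging starts once usage reaches the high water mark and moves save sets until usage drops to the low water mark. The function name, the 80 and 60 percent defaults, and the oldest-first ordering are assumptions for illustration, not NetWorker defaults.

```python
from typing import List, Tuple


def stage_save_sets(
    used_pct: float,
    save_sets: List[Tuple[str, float]],
    high_water_pct: float = 80.0,
    low_water_pct: float = 60.0,
) -> List[str]:
    """Return the names of save sets moved off the source device.

    `save_sets` is a list of (name, size as a percentage of the file system),
    assumed to be ordered oldest first.
    """
    if used_pct < high_water_pct:
        return []  # High water mark not reached, so staging does not start.
    moved = []
    for name, size_pct in save_sets:
        if used_pct <= low_water_pct:
            break  # Low water mark reached, so staging stops.
        moved.append(name)
        used_pct -= size_pct
    return moved


candidates = [("ss1", 10.0), ("ss2", 10.0), ("ss3", 10.0), ("ss4", 10.0)]
print(stage_save_sets(85.0, candidates))
print(stage_save_sets(70.0, candidates))
```

In this sketch a device at 85 percent moves three save sets before usage falls below the low water mark, while a device at 70 percent never triggers staging.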

The status of the Cloud Tier save sets can be monitored by using the mminfo command and specifying

the clone flag option. A ‘T’ flag, or in-transit flag, will display for any save set that is on the Cloud Tier

device but has not yet moved to the cloud provider.

The status may also be checked from NMC. Under the Media window select Save Sets. Under the View

menu select Choose Table Columns and ensure the Clone Flags column is selected. A ‘T’ flag will be

displayed similar to the output of the mminfo command.
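
Once the clone flags have been collected, from mminfo or from NMC, picking out the in-transit save sets is a simple filter on the ‘T’ flag. The sketch below assumes the rows have already been parsed into (save set ID, clone flags) pairs; the function name and the sample IDs and flag strings are made up for illustration and are not real mminfo output.

```python
from typing import List, Tuple


def in_transit_save_sets(rows: List[Tuple[str, str]]) -> List[str]:
    """Return the IDs of save sets whose clone flags include 'T' (in transit)."""
    return [ssid for ssid, clone_flags in rows if "T" in clone_flags]


# Hypothetical sample rows: (save set ID, clone flags).
sample_rows = [
    ("1000000001", "T"),
    ("1000000002", ""),
    ("1000000003", "T"),
]
print(in_transit_save_sets(sample_rows))
```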

To review these topics further, please refer to this list of supporting documents found on the Dell EMC

support site.

This module covered the Data Domain Cloud Tier, the use of Cloud Tier in NetWorker, and the integration

of Cloud Tier with NetWorker.

This module focuses on the Dell EMC Data Protection Extension for NetWorker support in VMware

vRealize.

This lesson covers an overview of the Dell EMC Data Protection Extension for VMware vRealize

Automation Suite.

The Dell EMC vRealize Data Protection Extension (DPE) is a plug-in for the VMware vRealize Automation

Suite. The VMware administrator installs the plug-in in vRealize Orchestrator (vRO), which is the

workflow engine packaged with vRealize Automation. vRealize Automation (vRA) automates business

processes for provisioning, destroying and managing virtual machines. The vRA portal provides access for

the end-users to consume cloud and IT virtualization offerings. DPE brings the VMware infrastructure and

the backup administrator’s data protection components together during virtual machine self-provisioning.

Please note: If you are familiar with previous versions of the vRealize Suite, vRealize Automation was

previously known as vCloud Automation Center, or vCAC, and vRealize Orchestrator was previously

known as vCenter Orchestrator, or vCO.

Earlier versions of the Data Protection Extension supported Avamar; new in DPE version 4.0 is

support for NetWorker 9.1. New and existing virtual machines can be protected by NetWorker protection

policies, and the many different restore operations that NetWorker supports can be performed, all through

the vRA portal.

In order to utilize DPE version 4.0, a VMware virtual environment must consist of vRealize Orchestrator

version 7.1 or above, and vRealize Automation version 7.1 or above.
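The version prerequisite above can be expressed as a small check. This is a minimal sketch, assuming the installed versions are supplied by hand as dotted strings; the function names and the input dictionary are hypothetical and nothing here queries a real vRealize system.

```python
# Minimum versions stated for DPE 4.0.
MINIMUM_VERSIONS = {
    "vRealize Orchestrator": (7, 1),
    "vRealize Automation": (7, 1),
}


def parse_version(text):
    """Turn a dotted version string such as '7.1.0' into a tuple of ints."""
    return tuple(int(part) for part in text.split("."))


def unmet_requirements(installed):
    """Return a list of messages for products that are missing or too old.

    `installed` maps a product name to its version string (hypothetical input).
    """
    problems = []
    for product, minimum in MINIMUM_VERSIONS.items():
        version = installed.get(product)
        if version is None:
            problems.append(f"{product} is not present")
        elif parse_version(version) < minimum:
            required = ".".join(str(n) for n in minimum)
            problems.append(f"{product} {version} is below {required}")
    return problems


print(unmet_requirements({
    "vRealize Orchestrator": "7.0.1",
    "vRealize Automation": "7.2",
}))
```

Comparing tuples of integers rather than raw strings avoids ordering mistakes such as treating "7.10" as older than "7.9".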

The DPE plug-in is customer installable. The software and supporting documentation are available to the

VMware administrator on the Dell EMC support website. vRA enforces the specifications of newly

deployed virtual machines using blueprints. By installing the Dell EMC DPE plug-in, end users can select

a data protection policy from the vRA service catalog as new virtual machines are provisioned during the

standard vRA workflow. Different levels of protection can be chosen depending on the requested blueprint

and the defined policies and protection groups which are configured on the NetWorker server.

vRA can be deployed as a multi-tenant environment to isolate different groups using shared cloud

resources. Multiple protection systems are supported in order to use different combinations of NetWorker

and Avamar systems per tenant.

Some additional features are available to end-users as well. End-users have the ability to modify existing

VMs. Policies can be added and removed as protection needs change. Protection policies can also be

retired when the VM is destroyed. A final backup is performed automatically prior to destroying a virtual

machine. The protection status of the VMs can be verified by viewing the policies and recent backups in

the vRA portal. Backups can be initiated manually, or on-demand, and different restore options such as

‘revert recovery’ and ‘vm recovery’ are also available. Web-based file level recovery is also possible

through the vRA portal.

After DPE is configured to interoperate with the NetWorker server, all the existing NetWorker tools remain

available for use. NMC, REST API, and CLI tools are still available to the backup administrators on the

NetWorker server. Should the backup administrator use these tools directly, vRA operations will not be

affected and will update based on changes made in NetWorker.

Before starting the installation of the Data Protection Extension plug-in in vRealize, ensure that the following

VMware products and at least one of the data protection products have been deployed in the environment.

The vRealize Data Protection Extension package can be downloaded from the Dell EMC support website.

It can also be ordered at no cost through the EMC DirectXpress (DXP) or ChannelXpress (CXP) ordering

process.

Within the DPE package is the .vmoapp file which is a compressed vRealize Orchestrator install file used

by the vRealize deployment mechanism. It contains some additional file types:

• Open source licensing and license agreement files.

• A .dar file which is the orchestrator plug-in format containing the java code and library files.

Other files included in the download are:

• The vRealize Orchestrator .package file which contains the workflow actions and workflows.

• A FLR webapp rpm which is only required by Avamar systems. NetWorker already contains the FLR

webapp software.

• A vRO package upgrade script to clean up old actions and workflows left over in Orchestrator when

upgrading the plug-in.

A free license file must be obtained from Dell EMC and activated through the licensing website.

To install the Data Protection Extension, log in to the vRealize Orchestrator Control Center and scroll down

to the Plug-ins section and click Manage Plug-ins. Browse for the .vmoapp file and click Install. Once

the installation is complete, a message will appear requiring a restart of the Orchestrator server service.

Back at the Home screen locate the Manage section and click Startup Options. The current status of the

service will be RUNNING. Click Restart and watch the log file viewer to monitor the restart until the status

is RUNNING again. The plug-in will then appear on the Manage Plug-ins page.

Then, to apply the license file, log in as root to the Linux system where vRealize Orchestrator is installed

and use secure FTP to upload the file to the /var/lib/vco/app-server/conf/plugins/edplicense directory.
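To make the upload target concrete, the sketch below builds the `put` command you might type at an sftp prompt once connected to the Orchestrator host. The license file name is a hypothetical placeholder, and the helper assumes a POSIX-style local path with no spaces; only the destination directory comes from the text above.

```python
import posixpath

# Destination directory named in the course text.
LICENSE_DIR = "/var/lib/vco/app-server/conf/plugins/edplicense"


def sftp_put_command(local_file):
    """Build the sftp 'put' command that places local_file in LICENSE_DIR."""
    remote_path = posixpath.join(LICENSE_DIR, posixpath.basename(local_file))
    return f"put {local_file} {remote_path}"


# "dpe_license.lic" is a made-up file name used only for illustration.
print(sftp_put_command("dpe_license.lic"))
```

The script only prints the command; it does not open a connection or transfer anything.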

The next step is to leave the vRealize Orchestrator Control Center and open the vRealize Orchestrator

client, which is the GUI tool to manage the running Orchestrator instance. This is where the NetWorker

data protection is configured for use in vRealize Automation as catalog items. In the Orchestrator client,

select the Workflows tab and browse to Library > EMC > Data Protection > vRA > Installation. Right-

click the Install default setup for tenant workflow and select Start workflow.

Enter the information about the vRealize Automation Center.

Specify the catalog service for which you add data protection services.

Choose the entitlement for tenant administrators.

Select the entitlement for tenant users.

Provide the data protection type and system information. This is where all the NetWorker information is

entered.

Click Submit and monitor the progress. When it completes, view the NetWorker data protection endpoint

by selecting the Inventory tab, and clicking EMC Data Protection. If the endpoint does not immediately

display, right-click EMC Data Protection and select Reload.

Optionally, you can diagnose potential configuration issues between DPE, vRealize Automation, vRealize

Orchestrator, vCenter, and NetWorker. Right-click Check EMC data protection configuration in the left

pane of the Workflows tab, and select Start workflow.

This lesson covers vRealize Automation and the protection provided by the Data Protection Extension for

NetWorker.

Prior to “As A Service” environments, the backup administrator would work alone to provide service level

agreements for new and existing applications and filesystems. The end-users had no access to the

backup policies. Now, multiple roles must interact and work together to create SLAs for the end-users, or

consumers. The backup administrator creates the policies in NetWorker, which include schedules,

retention periods, and different types of backup and clone actions. The VMware administrator makes the

policies available to the end-users. As a vRA tenant admin, the VMware administrator sets up data

protection in the service catalog to make the NetWorker protection groups available to consume. The

policy groups are published to vRA as either new catalog services or added to existing catalog services.

Through the vRA portal, the end-user has access to the NetWorker protection groups and can add and

remove them from their virtual machines as needed. The end-user deploys VMs and chooses from the

data protection policies created by the backup administrator, and made available in the service catalog by

the VMware administrator. The end-user has the option to add, remove, view, and destroy data protection

features.

The end-user can choose a policy related to a predefined NetWorker protection group without requiring

any knowledge of the underlying software or policy actions. The DPE plug-in associates the protection

policy with the VM being provisioned.

The backup administrator will see a new VM client become associated with the protection policy in NMC.

Should the end-user choose to remove the protection policy from the VM, the backup administrator will notice

that the VM client is no longer associated with that policy in NetWorker.

An end-user can see a VM’s protection status by selecting the option to View protection status. The

result displayed to the end-user is the NetWorker policy where the VM client belongs.

Should the vRA administrator, or an end-user with proper entitlements, choose the option to destroy a VM

with data protection, the backup administrator will see the VM was removed from the protection group

once a full backup is performed automatically. The VMware administrator will also notice the VM is no

longer in vSphere’s host inventory.
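The add, remove, view, and destroy behaviour described in this lesson can be summarized as a small in-memory model. This is a conceptual sketch only: the class, its methods, and the policy names are hypothetical and do not correspond to any DPE, vRA, or NetWorker API. It also simplifies the destroy step to a single logged backup followed by removal from every group.

```python
class ProtectionGroupModel:
    """Toy model of VM membership in protection groups (illustrative only)."""

    def __init__(self):
        self.members = {}     # policy name -> set of VM names
        self.backup_log = []  # (vm, reason) tuples

    def add_protection(self, vm, policy):
        self.members.setdefault(policy, set()).add(vm)

    def remove_protection(self, vm, policy):
        self.members.get(policy, set()).discard(vm)

    def policies_for(self, vm):
        """What 'View protection status' would report for this VM."""
        return sorted(p for p, vms in self.members.items() if vm in vms)

    def destroy_vm(self, vm):
        # A final full backup is taken first, then the VM leaves its groups.
        policies = self.policies_for(vm)
        if policies:
            self.backup_log.append((vm, "final full backup before destroy"))
        for policy in policies:
            self.members[policy].discard(vm)


model = ProtectionGroupModel()
model.add_protection("vm01", "Gold")
model.add_protection("vm01", "Silver")
model.remove_protection("vm01", "Silver")
print(model.policies_for("vm01"))  # ['Gold']
model.destroy_vm("vm01")
print(model.policies_for("vm01"))  # []
print(model.backup_log)
```

The ordering in `destroy_vm` mirrors the text: the backup is recorded before the membership is cleared, so the last recoverable copy exists before the VM disappears from the group.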

When the protection is enabled for the virtual machines, policies will run automatically according to the

defined schedule. End-users can perform on demand backups from the vRealize Automation portal by

selecting the Run data protection action. The end-user has a choice of which policy to run based on the

available Dell EMC protection policies that have already been applied to the VMs. When the backup

completes, the status can be viewed from the action menu.

VMware administrators have the ability to follow the progress of the backup workflow in the vRO portal as

well as in VMware vSphere.

Backup administrators can view the status of the backup by using NMC’s log viewer and Monitoring

window.

End-users can perform their own data restores of the virtual machines. The Restore data option restores

the VM back to its original location. Once the restore data request has been selected from the action

menu, the end-user can follow the progress using the Requests tab.

Similar to the Run data protection action, both VMware administrators and backup administrators can

follow the progress using their respective tools.

The Restore to new option restores a virtual machine to a location that is different from the location of the

original machine. A new virtual machine is created in vCenter and then imported into vRealize Automation.

A vRA administrator, or an end-user with proper entitlements, can also perform an Advanced restore to

new action. The initial restore options are the same; however, advanced options for vSphere are also available,

such as the datastore and resource pool in which to locate the new VM.

An end-user can use vRA to initiate a NetWorker File Level Restore. A web based restore UI is available

for backups and cloned backups that reside on a Data Domain device. The end-user selects the File level

restore action for the virtual machine and chooses the backup from which to restore the files.

A URL is constructed by vRA for the end-user to use the web based application. Once the application is

launched, the end-user must log in with their user credentials. The user account must be part of the

NetWorker VMware FLR Users group. After gaining access, the end-user follows the FLR workflow to

browse and select files to restore.

To review these topics further, please refer to this list of supporting documents found on the Dell EMC

support site.

This module covered the vRealize Data Protection Extension for NetWorker and an overview of its

features.

This course covered the integration of NetWorker with the CloudBoost appliance, Data Domain Cloud

Tier, and VMware vRealize Automation Suite for public, private, and hybrid cloud data protection.

This concludes the training. Proceed to the course assessment on the next slide.
