International Journal of Computer Science and Business Informatics

IJCSBI.ORG

Comparison of Swarm Intelligence Techniques

Prof. S. A. Thakare

Assistant Professor

JDIET, Yavatmal (MS), India.

ABSTRACT

Swarm intelligence is a computational intelligence technique for solving complex real-world problems. It involves the study of the collective behavior of decentralized, self-organized systems, natural or artificial. Swarm Intelligence (SI) is an innovative distributed intelligent paradigm for solving optimization problems that originally took its inspiration from biological examples of swarming, flocking and herding phenomena in vertebrates. In this paper, we present an extensive analysis of the most successful optimization techniques inspired by Swarm Intelligence (SI): Ant Colony Optimization (ACO) and Particle Swarm Optimization (PSO). The analysis considers these algorithms together with fitness sharing, aiming to investigate whether it improves performance and whether it can be implemented in evolutionary algorithms.

1. INTRODUCTION

Swarm intelligence (SI) is “the emergent collective intelligence of groups of simple agents” (Bonabeau et al., 1999). It gives rise to complex and often intelligent behavior through simple, unsupervised interactions (no centralized control) among a large number of autonomous swarm members. This results in the emergence of very complex forms of social behavior that fulfill a number of optimization objectives and other tasks. Swarms are found among social animals such as ants, bees, wasps and fish. In this paper we consider ant colonies and bird flocks for our study.

Ants possess the following characteristics:

(1) Scalability: The ants can change their group size by local and

distributed agent interactions. This is an important characteristic by

which the group is scaled to the desired level.

(2) Fault tolerance: Each ant follows a simple rule. Because the ants do not rely on a centralized control mechanism, the colony degrades gracefully and scalably when individual members fail.

(3) Adaptation: Ants constantly search for new paths by roaming around their nest. Once they find food, their nest members follow the shortest path to it. While nest members follow the shortest path, some members of the colony keep searching for another, shorter path. To accomplish this, they change, die or reproduce for the colony.

(4) Speed: To let other ants know about a food source, ants move faster on their way back to the nest. Other ants find more pheromone on the path and follow it to the food source. Changes are thus propagated very quickly to the other nest mates, which then follow the path to the food source.

ISSN: 1694-2108 | Vol. 1, No. 1. MAY 2013

(5) Modularity: Ants follow the simple rule of taking the path with the higher pheromone concentration. They do not interact directly and act independently to accomplish the task.

(6) Autonomy: There is no centralized control and hence no supervisor is needed. Ants work for the colony and always strive to find food sources around it.

(7) Parallelism: Ants work independently, and the search for food sources is carried out by all ants in parallel. This parallelism is what allows them to change their path when a new food source is found near the colony.

These characteristics of social insects such as ants resemble the characteristics of Mobile Ad Hoc Networks. This allows the food-searching behavior of ants to be applied to routing packets in Mobile Ad Hoc Networks.

2. SWARM INTELLIGENCE (SI) MODELS

Swarm intelligence models are referred to as computational models inspired

by natural swarm systems. To date, several swarm intelligence models

based on different natural swarm systems have been proposed in the

literature, and successfully applied in many real-life applications. Examples

of swarm intelligence models are: Ant Colony Optimization, Particle

Swarm Optimization, Artificial Bee Colony, Bacterial Foraging, Cat Swarm

Optimization, Artificial Immune System, and Glowworm Swarm

Optimization. In this paper, we will primarily focus on two of the most

popular swarm intelligence models, namely, Ant Colony Optimization and

Particle Swarm Optimization.

2.1 Ant Colony Optimization (ACO) Model

The ant colony optimization meta-heuristic is a particular class of ant

algorithms. Ant algorithms are multi-agent systems, which consist of agents

with the behavior of individual ants [1]. The ant colony optimization

algorithm (ACO) is a probabilistic technique for solving computational

problems which can be reduced to finding better paths through graphs. In

the real world, ants (initially) wander randomly, and upon finding food

return to their colony while laying down pheromone trails. If other ants find

such a path, they are likely not to keep travelling at random, but to instead

follow the trail; returning and reinforcing it if they eventually find food.

Over time, however, the pheromone trail starts to evaporate, thus reducing

its attractive strength. The more time it takes for an ant to travel down the

path and back again, the more time the pheromones have to evaporate. A

short path, by comparison, gets marched over faster, and thus the

pheromone density remains high as it is laid on the path as fast as it can evaporate. Pheromone evaporation also has the advantage of avoiding convergence to a locally optimal solution. If there were no evaporation at all, the paths chosen by the first ants would tend to be excessively attractive to the following ones. In that case, the exploration of the solution space would be constrained [3]. Thus, when one ant finds a good (i.e., short) path from the colony to a food source, other ants are more likely to follow that path, and positive feedback eventually leads to all the ants following a single path. The idea of the ant colony algorithm is to mimic this behavior with "simulated ants" walking around the graph representing the problem to solve.

The original idea comes from observing the exploitation of food resources among ants, in which ants with individually limited cognitive abilities are collectively able to find the shortest path between a food source and the nest [4, 5, 7].

1. The first ant finds the food source (F) via any route (a), then returns to the nest (N), leaving behind a trail of pheromone (b).
2. Ants indiscriminately follow the four possible routes, but the strengthening of the trail makes the shortest route more attractive.
3. Ants take the shortest route; long portions of the other routes lose their trail pheromones.

In a series of experiments on an ant colony with a choice between two paths of unequal length leading to a food source, biologists observed that ants tended to use the shorter route. A model explaining this behavior is as follows:

1. An ant (called "blitz") runs more or less at random around the colony;
2. If it discovers a food source, it returns more or less directly to the nest, leaving a trail of pheromone along its path;
3. These pheromones are attractive, so nearby ants will be inclined to follow the track more or less directly;
4. Returning to the colony, these ants will strengthen the route;
5. If two routes are possible to reach the same food source, the shorter one will, in the same amount of time, be traveled by more ants than the longer one;
6. The short route will be increasingly reinforced, and will therefore become more attractive;
7. The longer route will eventually disappear because pheromones are volatile;
8. Eventually, all the ants have determined and therefore "chosen" the shortest route.

Ants use the environment as a medium of communication. They exchange information indirectly by depositing pheromones, which detail the status of their "work". The information exchanged has a local scope: only an ant located where the pheromones were left has a notion of them. This system is called "stigmergy" and occurs in many social animal societies (it has been studied in the case of the construction of pillars in the nests of termites). The mechanism for solving a problem too complex to be addressed by single ants is a good example of a self-organized system. This system is based on positive feedback (the deposit of pheromone attracts other ants that will strengthen it themselves) and negative feedback (dissipation of the route by evaporation prevents the system from thrashing). Theoretically, if the quantity of pheromone remained the same over time on all edges, no route would be chosen. However, because of feedback, a slight variation on an edge will be amplified and thus allow the choice of an edge. The algorithm will move from an unstable state in which no edge is stronger than another, to a stable state where the route is composed of the strongest edges.

Figure 1. Behavior of real ant movements
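The two-route model described above can be illustrated with a small simulation. The following Python sketch is a toy under stated assumptions, not the experiment reported in the literature: the function name `simulate_two_routes`, the route lengths, the deposit of 1/length per trip (an assumed stand-in for shorter routes being completed more often in the same time) and the evaporation rate are all hypothetical choices made for illustration.

```python
import random


def simulate_two_routes(short_len=1.0, long_len=2.0, n_ants=20,
                        n_rounds=200, evaporation=0.1, seed=0):
    """Toy model of ants choosing between two routes to one food source.

    Each round, every ant picks a route with probability proportional to
    its pheromone level. A trip deposits 1/length units of pheromone
    (an assumed rule), and all trails then evaporate by a fixed fraction.
    Returns the final pheromone levels as (short, long).
    """
    rng = random.Random(seed)
    pheromone = {"short": 1.0, "long": 1.0}   # equal start: no route is preferred
    length = {"short": short_len, "long": long_len}

    for _ in range(n_rounds):
        deposits = {"short": 0.0, "long": 0.0}
        total = pheromone["short"] + pheromone["long"]
        p_short = pheromone["short"] / total
        for _ in range(n_ants):
            route = "short" if rng.random() < p_short else "long"
            deposits[route] += 1.0 / length[route]   # positive feedback
        for route in pheromone:
            # negative feedback: evaporate, then add this round's deposits
            pheromone[route] = ((1.0 - evaporation) * pheromone[route]
                                + deposits[route])

    return pheromone["short"], pheromone["long"]


if __name__ == "__main__":
    short, long_ = simulate_two_routes()
    print(f"short route: {short:.2f}, long route: {long_:.2f}")
```

Both routes start with the same pheromone level, which corresponds to the unstable state in which no edge is stronger than another. The small advantage of the short route is then amplified round after round, while evaporation removes the trail on the route that falls out of use.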

2.1.1 Ant Colony Optimization meta-heuristic Algorithm

Let $G = (V, E)$ be a connected graph with $n = |V|$ nodes. The simple ant colony optimization meta-heuristic can be used to find the shortest path between a source node $v_s$ and a destination node $v_d$ on the graph $G$ [7]. The path length is given by the number of nodes on the path. Each edge $e(i, j) \in E$ of the graph connecting the nodes $v_i$ and $v_j$ has a variable $\varphi_{i,j}$ (artificial pheromone), which is modified by the ants when they visit the node. The pheromone concentration $\varphi_{i,j}$ is an indication of the usage of this edge. An ant located in node $v_i$ uses the pheromone $\varphi_{i,j}$ of node $v_j \in N_i$ to compute the probability of node $v_j$ as next hop, where $N_i$ is the set of one-step neighbors of node $v_i$:

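$$
p_{i,j} =
\begin{cases}
\dfrac{\varphi_{i,j}}{\sum_{k \in N_i} \varphi_{i,k}} & \text{if } j \in N_i \\
0 & \text{if } j \notin N_i
\end{cases}
$$

The transition probabilities $p_{i,j}$ of a node $v_i$ fulfill the constraint:

$$
\sum_{j \in N_i} p_{i,j} = 1, \quad i \in [1, N]
$$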
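The next-hop rule can be sketched in a few lines of Python. This is a minimal illustration, assuming the pheromone values are stored in a dictionary keyed by edge and are strictly positive; the names `transition_probabilities` and `choose_next_hop` and the example graph are hypothetical and not taken from the paper.

```python
import random


def transition_probabilities(pheromone, i, neighbors):
    """Return {j: p_ij} for an ant located at node i.

    pheromone: dict mapping an edge (i, j) to its pheromone value.
    neighbors: dict mapping a node to the list of its one-step neighbors N_i.
    Nodes outside N_i are absent from the result, which corresponds to p_ij = 0.
    """
    total = sum(pheromone[(i, k)] for k in neighbors[i])
    return {j: pheromone[(i, j)] / total for j in neighbors[i]}


def choose_next_hop(pheromone, i, neighbors, rng=random):
    """Sample the next hop of an ant at node i according to p_ij."""
    probs = transition_probabilities(pheromone, i, neighbors)
    nodes = list(probs)
    return rng.choices(nodes, weights=[probs[j] for j in nodes], k=1)[0]


if __name__ == "__main__":
    # Hypothetical node "a" with three neighbors; values are illustrative only.
    neighbors = {"a": ["b", "c", "d"]}
    pheromone = {("a", "b"): 1.0, ("a", "c"): 2.0, ("a", "d"): 1.0}
    print(transition_probabilities(pheromone, "a", neighbors))
    print(choose_next_hop(pheromone, "a", neighbors, random.Random(1)))
```

With these example values the edge to `c` carries half of the total pheromone leaving `a`, so it is chosen with probability 0.5, and the three probabilities sum to 1 as the constraint requires.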

During the route finding process, ants deposit pheromone on the edges. In the simple ant colony optimization meta-heuristic algorithm, the ants deposit a constant amount $\Delta\varphi$ of pheromone. An ant changes the amount of pheromone of the edge $e(v_i, v_j)$ when moving from node $v_i$ to node $v_j$ as follows:

$$
\varphi_{i,j} := \varphi_{i,j} + \Delta\varphi \tag{1}
$$

Like real pheromone, the artificial pheromone concentration decreases with time to inhibit a fast convergence of pheromone on the edges. In the simple ant colony optimization meta-heuristic, this happens exponentially:

$$
\varphi_{i,j} := (1 - q) \cdot \varphi_{i,j}, \quad q \in (0, 1] \tag{2}
$$
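Equations (1) and (2) translate directly into two small update functions. The sketch below assumes the same edge-keyed dictionary as a pheromone store; the function names `deposit` and `evaporate` and the numeric values are hypothetical, chosen only to make the arithmetic easy to follow.

```python
def deposit(pheromone, i, j, delta=1.0):
    """Equation (1): an ant moving from i to j adds a constant amount."""
    pheromone[(i, j)] += delta


def evaporate(pheromone, q=0.1):
    """Equation (2): every edge loses the fraction q of its pheromone."""
    if not 0.0 < q <= 1.0:
        raise ValueError("q must lie in (0, 1]")
    for edge in pheromone:
        pheromone[edge] *= (1.0 - q)


if __name__ == "__main__":
    pheromone = {("a", "b"): 1.0, ("a", "c"): 1.0}
    deposit(pheromone, "a", "b", delta=0.5)   # edge (a, b) is used once
    for _ in range(3):                        # three time steps without traffic
        evaporate(pheromone, q=0.5)
    print(pheromone)
```

Applying equation (2) for $t$ steps to an unused edge leaves $(1 - q)^t \, \varphi_{i,j}$, which is the exponential decay mentioned in the text. In the example, the unused edge drops from 1.0 to $0.5^3 = 0.125$, while the edge that received one deposit ends at $1.5 \cdot 0.5^3 = 0.1875$.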

2.2 Particle Swarm Optimization (PSO) Model

The second example of a successful swarm intelligence model is Particle Swarm Optimization (PSO), which was introduced by Russell Eberhart, an electrical engineer, and James Kennedy, a social psychologist, in 1995. PSO was originally used to solve non-linear continuous optimization problems, but more recently it has been used in many practical, real-life application problems. For example, PSO has been successfully applied to track dynamic systems [9], evolve the weights and structure of neural networks, analyze human tremor [11], register 3D-to-3D biomedical images [12], control reactive power and voltage [13], and even learn to play games [14] and compose music [15]. PSO draws inspiration from the sociological behaviour associated with bird flocking. It is a natural observation that birds can fly in large groups over long distances without colliding, making an effort to maintain an optimum distance between themselves and their neighbours. This section presents some details about birds in nature and overviews their capabilities, as well as their sociological flocking behaviour.

2.2.1 Birds in Nature

Vision is considered the most important sense for flock organization. The eyes of most birds are on both sides of their heads, allowing them to see objects on each side at the same time. The larger size of birds' eyes relative to other animal groups is one reason why birds have one of the most highly developed senses of vision in the animal kingdom. As a result of the large size of their eyes, as well as the way their heads and eyes are arranged, most species of birds have a wide field of view. For example, pigeons can see 300 degrees without turning their heads, and American woodcocks have a full 360-degree field of view. Birds are generally attracted by food; they have impressive abilities in flocking synchronously for food searching and long-distance migration. Birds also have efficient social interaction that enables them to: (i) fly without collision even while often changing direction suddenly, (ii) scatter and quickly regroup when reacting to external threats, and (iii) avoid predators.

Birds Flocking Behaviour

The emergence of flocking and schooling in groups of interacting agents (such as birds, fish, penguins, etc.) has intrigued scientists from diverse disciplines, including animal behaviour, physics, social psychology, social science, and computer science, for many decades. Bird flocking can be defined as the social collective motion behaviour of a large number of interacting birds with a common group objective. The shared motion direction of the swarm usually emerges from the local interactions among birds (particles). Such interactions are based on the nearest-neighbour principle, where birds follow certain flocking rules to adjust their motion (i.e., position and velocity) based only on their nearest neighbours, without any central coordination. In 1986, bird flocking behaviour was first simulated on a computer by Craig Reynolds. Reynolds' pioneering work proposed three simple flocking rules to implement a simulated flocking behaviour of birds: (i) flock centering (flock members attempt to stay close to nearby flockmates by flying in a direction that keeps them closer to the centroid of the nearby flockmates), (ii) collision avoidance (flock members avoid collisions with nearby flockmates based on their relative position), and (iii) velocity matching (flock members attempt to match velocity with nearby flockmates).
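The three rules can be combined into a single update step. The following Python sketch is one possible reading of them in two dimensions, not Reynolds' original implementation: the function name `flock_step`, the neighbourhood radius, the minimum distance and the three weights are assumed values chosen for illustration.

```python
import math


def flock_step(positions, velocities, radius=5.0, min_dist=1.0,
               w_center=0.01, w_avoid=0.05, w_match=0.05, dt=1.0):
    """Advance a 2-D flock by one step using the three flocking rules.

    positions, velocities: lists of (x, y) tuples, one per bird.
    Only flockmates closer than `radius` influence a bird.
    Returns (new_positions, new_velocities).
    """
    new_velocities = []
    for i, (px, py) in enumerate(positions):
        vx, vy = velocities[i]
        near = [j for j, (qx, qy) in enumerate(positions)
                if j != i and math.hypot(qx - px, qy - py) < radius]
        if near:
            n = len(near)
            # (i) flock centering: steer toward the centroid of nearby flockmates
            cx = sum(positions[j][0] for j in near) / n
            cy = sum(positions[j][1] for j in near) / n
            vx += w_center * (cx - px)
            vy += w_center * (cy - py)
            # (ii) collision avoidance: steer away from flockmates that are too close
            for j in near:
                dx, dy = px - positions[j][0], py - positions[j][1]
                if math.hypot(dx, dy) < min_dist:
                    vx += w_avoid * dx
                    vy += w_avoid * dy
            # (iii) velocity matching: steer toward the mean velocity of nearby flockmates
            mvx = sum(velocities[j][0] for j in near) / n
            mvy = sum(velocities[j][1] for j in near) / n
            vx += w_match * (mvx - velocities[i][0])
            vy += w_match * (mvy - velocities[i][1])
        new_velocities.append((vx, vy))
    new_positions = [(px + dt * vx, py + dt * vy)
                     for (px, py), (vx, vy) in zip(positions, new_velocities)]
    return new_positions, new_velocities
```

Each bird reads only the positions and velocities of its nearby flockmates, so there is no central coordination in the update. A bird with no flockmates within `radius` simply keeps its current velocity.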

Although the underlying rules of flocking behaviour can be considered simple, the flocking is visually complex, with an overall motion that looks fluid yet is made up of discrete birds. Note that the collision avoidance rule serves to establish the minimum required separation distance, whereas the velocity matching rule helps to maintain that separation distance during flocking; the two rules thus complement each other. Together they ensure that members of a simulated flock are free to fly without running into one another, no matter how many they are. The three flocking rules of Reynolds are generally known in the literature as the cohesion, separation, and alignment rules. For example, according to animal cognition and animal behaviour research, individual animals in nature are frequently observed to be attracted towards other individuals to avoid being isolated and to align themselves with their neighbours. Reynolds' rules have also been compared to the evaluation, comparison, and imitation principles of the Adaptive Culture Model in Social Cognitive Theory.

2.2.2 The Original PSO Algorithm

The original PSO was designed as a global version of the algorithm: each particle compares its fitness to that of the entire swarm population and adjusts its velocity towards the swarm's global best particle. There are, however, more recent local (topological) versions of PSO, in which the comparison is performed locally within a predetermined neighbourhood topology. Unlike the original version of ACO, the original PSO is designed to optimize real-valued continuous problems, but the PSO algorithm has also been extended to optimize binary or discrete problems. The original version of the PSO algorithm is essentially described by the following two simple velocity and position update equations, shown in (3) and (4) respectively.

$$
v_{id}(t+1) = v_{id}(t) + c_1 R_1 \left( p_{id}(t) - x_{id}(t) \right) + c_2 R_2 \left( p_{gd}(t) - x_{id}(t) \right) \tag{3}
$$

$$
x_{id}(t+1) = x_{id}(t) + v_{id}(t+1) \tag{4}
$$

Where:

$v_{id}$ represents the rate of position change (velocity) of the $i$th particle in the $d$th dimension, and $t$ denotes the iteration counter.

$x_{id}$ represents the position of the $i$th particle in the $d$th dimension. Note that $x_i$ refers to the $i$th particle itself, or to the vector of its positions in all dimensions of the problem space. The $n$-dimensional problem space has as many dimensions as the fitness function to be optimized has variables.

$p_{id}$ represents the historically best position of the $i$th particle in the $d$th dimension (that is, the position giving the best fitness value ever attained by $x_i$).

$p_{gd}$ represents the position of the swarm's global best particle ($x_g$) in the $d$th dimension (that is, the position giving the best fitness value attained by any particle in the entire swarm).

$R_1$ and $R_2$ are two $n$-dimensional vectors of random numbers uniformly selected in the range [0.0, 1.0], which introduce useful randomness into the search strategy. Note that each dimension has its own random number, $r$, because PSO operates on each dimension independently.

$c_1$ and $c_2$ are positive constant weighting parameters, also called the cognitive and social parameters, respectively, which control the relative importance of the particle's private experience versus the swarm's social experience (in other words, they control the movement of each particle towards its individual versus the global best position). A single weighting parameter, $c$, called the acceleration constant or the learning factor, was used in the original version of PSO, and was typically set to 2 in some applications (i.e., $c_1 = c_2 = c = 2$). To better control the search ability, recent versions of PSO use different weighting parameters, which generally fall in the range [0, 4] with $c_1 + c_2 = 4$ in some typical applications. The values of $c_1$ and $c_2$ can remarkably affect the search ability of PSO by biasing the new position of $x_i$ toward its historically best position (its own private experience, $p_i$) or the globally best position (the swarm's overall social experience, $p_g$):

(1) High values of $c_1$ and $c_2$ can provide new positions in relatively distant regions of the search space, which often leads to better global exploration, but may cause the particles to diverge.

(2) Small values of $c_1$ and $c_2$ limit the movement of the particles, which generally leads to a more refined local search around the best positions achieved.

(3) When $c_1 > c_2$, the search behaviour is biased towards the particles' historically best experiences.

(4) When $c_1 < c_2$, the search behaviour is biased towards the swarm's globally best experience.
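Equations (3) and (4), together with the parameters just described, are enough to write a compact global-best PSO. The Python sketch below is a minimal illustration rather than a reference implementation: the function name `pso`, the search bounds, the swarm size and the seed are hypothetical, and the velocity clamp `v_max` is an added assumption that does not appear in equations (3) and (4) but keeps the particles from diverging when $c_1 = c_2 = 2$.

```python
import random


def pso(fitness, dim, n_particles=20, n_iters=100, c1=2.0, c2=2.0,
        lower=-5.0, upper=5.0, v_max=1.0, seed=0):
    """Minimise `fitness` with a global-best PSO based on equations (3) and (4).

    Returns (best_position, best_fitness).
    """
    rng = random.Random(seed)
    x = [[rng.uniform(lower, upper) for _ in range(dim)]
         for _ in range(n_particles)]
    v = [[0.0] * dim for _ in range(n_particles)]
    p = [xi[:] for xi in x]                    # personal best positions p_i
    p_fit = [fitness(xi) for xi in x]
    g = min(range(n_particles), key=p_fit.__getitem__)   # index of global best

    for _ in range(n_iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()   # fresh numbers per dimension
                v[i][d] = (v[i][d]
                           + c1 * r1 * (p[i][d] - x[i][d])
                           + c2 * r2 * (p[g][d] - x[i][d]))   # equation (3)
                v[i][d] = max(-v_max, min(v_max, v[i][d]))    # assumed clamp
                x[i][d] += v[i][d]                            # equation (4)
            f = fitness(x[i])
            if f < p_fit[i]:                   # new personal best for particle i
                p_fit[i] = f
                p[i] = x[i][:]
                if f < p_fit[g]:               # new global best (g <- i)
                    g = i
    return p[g], p_fit[g]


if __name__ == "__main__":
    sphere = lambda pos: sum(t * t for t in pos)   # simple test function
    best_pos, best_fit = pso(sphere, dim=2)
    print(best_pos, best_fit)
```

In this sketch the global best index is refreshed as soon as any particle improves on it, so later particles in the same iteration already see the new best. Updating it once per iteration instead is an equally valid design choice.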

The velocity update equation (3) has three main terms.

(i) The first term, $v_{id}(t)$, is sometimes referred to as inertia, momentum or habit. It ensures that the velocity of each particle does not change abruptly, because the previous velocity of the particle is taken into account. That is why particles generally tend to continue in the direction they have been flying, unless there is a major difference between the particle's current position on the one hand and the particle's historically best position or the swarm's globally best position on the other (which means the particle has started to move in the wrong direction).

This term plays a particularly important role for the swarm's globally best particle, $x_g$. If a particle $x_i$ discovers a new position with a better fitness value than that of the swarm's globally best particle, it becomes the global best (i.e., $g \leftarrow i$). In this case, its historically best position, $p_i$, coincides with both the swarm's global best position, $p_g$, and its own position vector, $x_i$, in the next iteration (i.e., $p_i = x_i = p_g$). The last two terms in equation (3) therefore vanish, since in this special case $p_{id}(t) - x_{id}(t) = p_{gd}(t) - x_{id}(t) = 0$. Without an inertial movement, the global best particle could not change its velocity (and thus its position), so it would stay at the same position for several iterations, until another particle discovered a new best position. When the previous velocity term is included in the velocity update equation (3), the global best particle instead continues to explore the search space using the inertial movement of its previous velocity.

(ii) The second term, $(p_{id}(t) - x_{id}(t))$, is the cognitive part of the equation, which implements a linear attraction towards the historically best position found so far by each particle. This term represents the private-thinking or self-learning component of each particle's flying experience, and is often referred to as local memory, self-knowledge, nostalgia or remembrance.

(iii) The third term, $(p_{gd}(t) - x_{id}(t))$, is the social part of the equation, which implements a linear attraction towards the globally best position ever found by any particle. This term represents the experience-sharing or group-learning component of the overall swarm's flying experience, and is often referred to as cooperation, social knowledge, group knowledge or shared information.
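The special case of the global best particle can be checked numerically. The sketch below evaluates a one-dimensional form of equation (3) with and without the previous-velocity term; the function name `velocity_update` and the `use_inertia` switch are hypothetical, and the variant without inertia exists here only to show what the first term contributes.

```python
import random


def velocity_update(v, x, p_best, g_best, c1=2.0, c2=2.0,
                    use_inertia=True, rng=random):
    """One-dimensional form of equation (3).

    With use_inertia=False the previous velocity v is dropped
    (a hypothetical variant, not part of the original algorithm).
    """
    r1, r2 = rng.random(), rng.random()
    cognitive = c1 * r1 * (p_best - x)   # pull toward the particle's own best
    social = c2 * r2 * (g_best - x)      # pull toward the swarm's best
    return (v if use_inertia else 0.0) + cognitive + social


if __name__ == "__main__":
    # The global best particle: x = p_best = g_best, so both attractions are zero.
    x = p_best = g_best = 3.0
    print(velocity_update(0.5, x, p_best, g_best, use_inertia=True))   # 0.5
    print(velocity_update(0.5, x, p_best, g_best, use_inertia=False))  # 0.0
```

Whatever random numbers are drawn, both attraction terms are multiplied by zero, so the new velocity equals the previous one when inertia is kept and is exactly zero when it is dropped.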

3. COMPARISON BETWEEN THE TWO SI MODELS

Although both models are similar in principle in their inspirational origin (the intelligence of swarms) and are based on nature-inspired properties, they are fundamentally different in the following aspects.

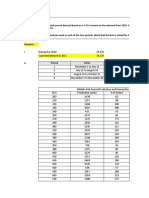

Table 1: Comparison of ACO and PSO

| Criteria | ACO | PSO |
| --- | --- | --- |
| Communication Mechanism | ACO uses an indirect communication mechanism among ants, called stigmergy, which means interaction through the environment. | The communication among particles in PSO is rather direct, without altering the environment. |
| Problem Types | ACO was originally used to solve combinatorial (discrete) optimization problems, but it was later modified to adapt to continuous problems. | PSO was originally used to solve continuous problems, but it was later modified to adapt to binary/discrete optimization problems. |
| Problem Representation | ACO's solution space is typically represented as a weighted graph, called the construction graph. | PSO's solution space is typically represented as a set of n-dimensional points. |
| Algorithm Applicability | ACO is commonly more applicable to problems where source and destination are predefined and specific. | PSO is commonly more applicable to problems where previous and next particle positions at each point are clear and uniquely defined. |
| Algorithm Objective | ACO's objective is generally searching for an optimal path in the construction graph. | PSO's objective is generally finding the location of an optimal point in a Cartesian coordinate system. |
| Examples of Algorithm Applications | Sequential ordering, scheduling, assembly line balancing, probabilistic TSP, DNA sequencing, 2D-HP protein folding, and protein–ligand docking. | Track dynamic systems, evolve NN weights, analyze human tremor, register 3D-to-3D biomedical images, control reactive power and voltage, and even play games. |

4. CONCLUSION

ACO and PSO can be analyzed further with a view to future enhancement, so that new research can focus on producing better solutions by improving their effectiveness and reducing their limitations. More possibilities for dynamically determining the best destination through ACO can be developed, and we plan to endow PSO with fitness sharing in order to investigate whether this helps to improve performance. In future work, the velocity of each individual should be updated using the best element found over all iterations rather than that of the current iteration only.

REFERENCES

[1] R. Kumar, M. K. Tiwari and R. Shankar, "Scheduling of flexible manufacturing systems: an ant colony optimization approach", Proceedings Instn Mech Engrs, Vol. 217, Part B: J Engineering Manufacture, 2003, pp. 1443-1453.

[2] Kuan Yew Wong, Phen Chiak See, "A new minimum pheromone threshold strategy (MPTS) for Max-min ant system", Applied Soft Computing, Vol. 9, 2009, pp. 882-888.

[3] David C Mathew, "Improved Lower Limits for Pheromone Trails in ACO", G Rudolf et al. (Eds), LNCS 5199, pp. 508-517, Springer Verlag, 2008.

[4] Laalaoui Y, Drias H, Bouridah A and Ahmed R B, "Ant Colony system with stagnation avoidance for the scheduling of real time tasks", Computational Intelligence in Scheduling, IEEE Symposium, 2009, pp. 1-6.

[5] E Priya Darshini, "Implementation of ACO algorithm for EDGE detection and Sorting Salesman problem", International Journal of Engineering Science and Technology, Vol. 2, pp. 2304-2315, 2010.

[6] Alaa Alijanaby, KU Ruhana Kumahamud, Norita Md Norwawi, "Interacted Multiple Ant Colonies optimization Framework: an experimental study of the evaluation and the exploration techniques to control the search stagnation", International Journal of Advancements in Computing Technology, Vol. 2, No. 1, March 2010, pp. 78-85.

[7] Raka Jovanovic and Milan Tuba, "An ant colony optimization algorithm with improved pheromone correction strategy for the minimum weight vertex cover problem", Elsevier, Applied Soft Computing, pp. 5360-5366, 2011.

[8] Zar Ch Su Su Hlaing, May Aye Lhine, "An Ant Colony Optimisation Algorithm for solving Traveling Salesman Problem", International Conference on Information Communication and Management (IPCSIT), Vol. 6, pp. 54-59, 2011.

[9] D. Karaboga, "An Idea Based On Honey Bee Swarm for Numerical Optimization", Technical Report-TR06, Erciyes University, Engineering Faculty, Computer Engineering Department, 2005.

[10] M. Bakhouya and J. Gaber, "An Immune Inspired-based Optimization Algorithm: Application to the Traveling Salesman Problem", Advanced Modeling and Optimization, Vol. 9, No. 1, pp. 105-116, 2007.

[11] K. N. Krishnanand and D. Ghose, "Glowworm Swarm Optimization for searching higher dimensional spaces", in: C. P. Lim, L. C. Jain, and S. Dehuri (eds.), Innovations in Swarm Intelligence, Springer, Heidelberg, 2009.

[12] M. P. Wachowiak, R. Smolíková, Y. Zheng, J. M. Zurada, and A. S. Elmaghraby, "An approach to multimodal biomedical image registration utilizing particle swarm optimization", IEEE Transactions on Evolutionary Computation, 2004.

[13] L. Messerschmidt, A. P. Engelbrecht, "Learning to play games using a PSO-based competitive learning approach", IEEE Transactions on Evolutionary Computation, 2004.

[14] T. Blackwell and P. J. Bentley, "Improvised music with swarms", in: David B. Fogel, Mohamed A. El-Sharkawi, Xin Yao, Garry Greenwood, Hitoshi Iba, Paul Marrow, and Mark Shackleton (eds.), Proceedings of the 2002 Congress on Evolutionary Computation CEC 2002, pp. 1462–1467, IEEE Press, 2002.


- Cat Hunting Optimization Algorithm: A Novel Optimization AlgorithmDocument23 paginiCat Hunting Optimization Algorithm: A Novel Optimization AlgorithmGogyÎncă nu există evaluări

- Reid Et Al PNAS-2012Document5 paginiReid Et Al PNAS-2012domraorÎncă nu există evaluări

- Data Mining With An Ant Colony Optimization Algorithm: Rafael S. Parpinelli, Heitor S. Lopes, and Alex A. FreitasDocument29 paginiData Mining With An Ant Colony Optimization Algorithm: Rafael S. Parpinelli, Heitor S. Lopes, and Alex A. FreitasPaulo SuchojÎncă nu există evaluări

- Application of ACODocument7 paginiApplication of ACOfunky_zorroÎncă nu există evaluări

- Ants' Navigation in An Unfamiliar Environment Is Influenced by Their Experience of A Familiar RouteDocument7 paginiAnts' Navigation in An Unfamiliar Environment Is Influenced by Their Experience of A Familiar RoutePablo EspinozaÎncă nu există evaluări

- PIIS0960982218301635Document3 paginiPIIS0960982218301635d2cc8hbcjcÎncă nu există evaluări

- Kids Ant FactsDocument3 paginiKids Ant Factsaditya patelÎncă nu există evaluări

- Springer Behavioral Ecology and SociobiologyDocument12 paginiSpringer Behavioral Ecology and SociobiologyNam LeÎncă nu există evaluări

- Analytical ReasoningDocument7 paginiAnalytical ReasoningAbcdÎncă nu există evaluări

- Swarm Intelligence PDFDocument7 paginiSwarm Intelligence PDFGanesh BishtÎncă nu există evaluări

- Simulating An Ant Colony PDFDocument17 paginiSimulating An Ant Colony PDFანგელოზი აბელისÎncă nu există evaluări

- The Secret Lives of Ants Exploring the Microcosm of a Tiny KingdomDe la EverandThe Secret Lives of Ants Exploring the Microcosm of a Tiny KingdomÎncă nu există evaluări

- Script - 10 Insect Behaviour200228080802023232Document8 paginiScript - 10 Insect Behaviour200228080802023232muhammedshahzeb272Încă nu există evaluări

- AnimalcomparisonlabsDocument3 paginiAnimalcomparisonlabsapi-250430638Încă nu există evaluări

- Mirjalili2016 Article DragonflyAlgorithmANewMeta-heu 2Document21 paginiMirjalili2016 Article DragonflyAlgorithmANewMeta-heu 2ZaighamAbbasÎncă nu există evaluări

- Optimal Design of PID Controller Using Bacterial Foraging Algorithm For AVR SystemDocument6 paginiOptimal Design of PID Controller Using Bacterial Foraging Algorithm For AVR SystemMajid RostamiÎncă nu există evaluări

- Appendix: Figure Questions Concept Check 1.3Document2 paginiAppendix: Figure Questions Concept Check 1.3Bahan Tag AjaÎncă nu există evaluări

- Swarm Intelligence Swarm IntelligenceDocument28 paginiSwarm Intelligence Swarm IntelligenceBanda E WaliÎncă nu există evaluări

- Week5 (AI Inspiration)Document31 paginiWeek5 (AI Inspiration)Karim Abd El-GayedÎncă nu există evaluări
