A Comparison of SIFT and Harris Corner Features for Correspondence Points Matching

My-Ha Le, Byung-Seok Woo, Kang-Hyun Jo

Graduate School of Electrical Engineering, University of Ulsan, Ulsan, Korea

lemyha@islab.ulsan.ac.kr, bswoo@islab.ulsan.ac.kr, acejo@ulsan.ac.kr

Abstract: This paper presents a comparative study of two competing features for the task of finding correspondence points in consecutive image frames. One is the Harris corner feature and the other is the SIFT feature. First, consecutive frames are extracted from video data and both kinds of features are computed. Second, correspondence points are matched. The matching results for SIFT keypoints and Harris keypoints are then compared and discussed. The comparison is based on an implementation run on outdoor video data captured under different conditions of intensity and viewing angle.

Keywords: SIFT; Harris corner; correspondence points matching

I. INTRODUCTION

3D object reconstruction is an important process in applications such as virtual environments, robot navigation and object recognition. Important progress has been made during the last few years in 3D reconstruction from multiple images and from image sequences obtained by cameras. In this technology, correspondence point matching is the most important step for finding the fundamental matrix, the essential matrix and the camera matrix.

Many kinds of features have been considered in recent research, including Harris, SIFT, PCA-SIFT, SURF, etc. [1], [2]. In this paper, we consider two of these features, Harris corners and SIFT, and compare their results. Harris corner features and SIFT features are computed, and correspondence points are then matched. The two kinds of features are compared in terms of correctly matched points. Once the descriptors of the SIFT keypoints have been found, we measure the distance between the two sets of points by Euclidean distance. For Harris corner points, correspondences are obtained by a correlation method. The results of these methods are useful for 3D object reconstruction [3], especially for our research field: building reconstruction in outdoor scenes for an autonomous mobile robot.

This paper is organized into 6 sections. The next section describes SIFT feature computation, and section 3 describes Harris corner feature computation. We explain the matching problem and its discussion in section 4, and the conclusion is given in section 5. The paper finishes with the acknowledgement in section 6.

II. SIFT

SIFT [4] was first presented by David G. Lowe in 1999 and is fully described in [5]. Experiments with his algorithm show that it is highly invariant and robust for feature matching under scaling, rotation and affine transformation. Based on those conclusions, we use SIFT feature points to find corresponding points between two images of a sequence. The SIFT algorithm consists of four main steps: scale-space extrema detection, accurate keypoint localization, orientation assignment and keypoint descriptor computation.

A. Scale space extrema detection

First, we build an image pyramid by repeatedly smoothing the image with a Gaussian mask. The DoG (Difference of Gaussian) pyramid of the image is obtained by subtracting adjacent smoothed images. Each pixel of the current scale is compared with its neighbors in a 3x3 region at the current, upper and lower scales, i.e. 26 pixels, to determine whether it is the maximum or minimum among them. Such points are considered keypoint candidates. The equations below describe the Gaussian function, the scale space and the DoG.

$$ G(x, y, \sigma) = \frac{1}{2\pi\sigma^{2}} e^{-(x^{2} + y^{2}) / 2\sigma^{2}} \tag{1} $$

$$ L(x, y, \sigma) = G(x, y, \sigma) * I(x, y) \tag{2} $$

where $*$ is the convolution operation in $x$ and $y$.

$$ D(x, y, \sigma) = \big(G(x, y, k\sigma) - G(x, y, \sigma)\big) * I(x, y) = L(x, y, k\sigma) - L(x, y, \sigma) \tag{3} $$

B. Accurate keypoint localization

In its initial form, the algorithm simply places the keypoint at the location of the central sample point. However, this is not the exact location of the extremum.

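The scale-space construction and extrema detection of Section II.A (Equations (1) to (3) and the 26-neighbor comparison) can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' implementation: the function names, the zero padding at the image border, the kernel truncation at 3σ, the values σ0 = 1.6 and k = √2, and the small contrast floor `min_abs` are all hypothetical choices made for the example.

```python
import math

def gaussian_kernel(sigma):
    """1-D sampled Gaussian, truncated at 3*sigma (assumption), sum normalized to 1."""
    radius = max(1, int(math.ceil(3 * sigma)))
    weights = [math.exp(-(i * i) / (2.0 * sigma * sigma))
               for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]

def blur(image, sigma):
    """L = G * I, Eq. (2), as two separable 1-D passes.
    Pixels outside the image are treated as zero (assumption)."""
    kernel = gaussian_kernel(sigma)
    radius = len(kernel) // 2

    def convolve_rows(img):
        out = []
        for row in img:
            n = len(row)
            out.append([sum(kv * row[x + j - radius]
                            for j, kv in enumerate(kernel)
                            if 0 <= x + j - radius < n)
                        for x in range(n)])
        return out

    def transpose(img):
        return [list(col) for col in zip(*img)]

    # Blur along rows, then along columns via a transpose.
    return transpose(convolve_rows(transpose(convolve_rows(image))))

def dog_stack(image, sigma0=1.6, k=math.sqrt(2.0), levels=4):
    """D = L(k*sigma) - L(sigma), Eq. (3), for consecutive scales."""
    blurred = [blur(image, sigma0 * k ** i) for i in range(levels)]
    return [[[hi_v - lo_v for lo_v, hi_v in zip(lo_row, hi_row)]
             for lo_row, hi_row in zip(lo, hi)]
            for lo, hi in zip(blurred, blurred[1:])]

def find_extrema(dog, min_abs=1e-3):
    """Return (layer, y, x) for samples strictly above or strictly below
    all 26 neighbors in the surrounding 3x3x3 block."""
    found = []
    for s in range(1, len(dog) - 1):
        for y in range(1, len(dog[s]) - 1):
            for x in range(1, len(dog[s][0]) - 1):
                value = dog[s][y][x]
                if abs(value) < min_abs:      # skip numerically flat regions
                    continue
                neighbors = [dog[s + ds][y + dy][x + dx]
                             for ds in (-1, 0, 1)
                             for dy in (-1, 0, 1)
                             for dx in (-1, 0, 1)
                             if (ds, dy, dx) != (0, 0, 0)]
                if value > max(neighbors) or value < min(neighbors):
                    found.append((s, y, x))
    return found

# Synthetic test image: one bright Gaussian blob centered at (20, 20).
size, center, blob_sigma = 41, 20, 2.7
image = [[math.exp(-((x - center) ** 2 + (y - center) ** 2) / (2 * blob_sigma ** 2))
          for x in range(size)] for y in range(size)]
keypoints = find_extrema(dog_stack(image))
```

For this synthetic blob, the blob width was chosen so that the DoG response at the center is most negative in the middle layer, so the center pixel is reported as a candidate there. A real pipeline would repeat this per octave on downsampled images, which the sketch leaves out.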

Fig. 1. Keypoint detection. (a) Pyramid images, (b) DoG (Difference of Gaussian), (c) keypoint candidate detection.

To reach sub-pixel accuracy, a 3D quadratic function is fitted to the local sample points to determine the true location of the extremum. Lowe used the Taylor expansion of the scale-space function, shifted so that the origin is at the sample point:

$$ D(\mathbf{x}) = D + \frac{\partial D^{T}}{\partial \mathbf{x}} \mathbf{x} + \frac{1}{2} \mathbf{x}^{T} \frac{\partial^{2} D}{\partial \mathbf{x}^{2}} \mathbf{x} \tag{4} $$

where $D$ and its derivatives are evaluated at the sample point and $\mathbf{x} = (x, y, \sigma)^{T}$ is the offset from this point. The location of the extremum, $\hat{\mathbf{x}}$, is determined by taking the derivative of this function with respect to $\mathbf{x}$ and setting it to zero, giving

$$ \hat{\mathbf{x}} = -\left(\frac{\partial^{2} D}{\partial \mathbf{x}^{2}}\right)^{-1} \frac{\partial D}{\partial \mathbf{x}} \tag{5} $$

The next stage attempts to eliminate some unstable points from the candidate list of keypoints by finding those that have low contrast or are poorly localized on an edge. To find low-contrast points, we compare the value of $D(\hat{\mathbf{x}})$ with a threshold. Substituting equation (5) into equation (4), we have:

$$ D(\hat{\mathbf{x}}) = D + \frac{1}{2} \frac{\partial D^{T}}{\partial \mathbf{x}} \hat{\mathbf{x}} \tag{6} $$

If the magnitude of $D(\hat{\mathbf{x}})$ is below a threshold, the point is excluded.

To eliminate poorly localized extrema, we use the fact that in these cases the difference of Gaussian function has a large principal curvature across the edge but a small curvature in the perpendicular direction. A 2x2 Hessian matrix, $H$, computed at the location and scale of the keypoint is used to find the curvatures. With the formulas below, the ratio of principal curvatures can be checked efficiently.

$$ H = \begin{bmatrix} D_{xx} & D_{xy} \\ D_{xy} & D_{yy} \end{bmatrix} \tag{7} $$

$$ \frac{(D_{xx} + D_{yy})^{2}}{D_{xx} D_{yy} - (D_{xy})^{2}} < \frac{(r + 1)^{2}}{r} \tag{8} $$

Here $r$ is the largest allowed ratio between the two principal curvatures. If inequality (8) fails, the keypoint is removed from the candidate list.

C. Keypoint orientation assignment

Each keypoint is assigned a consistent orientation based on local image properties, so that the descriptor is rotation invariant. This step is described by the two equations below:

$$ m(x, y) = \sqrt{\big(L(x+1, y) - L(x-1, y)\big)^{2} + \big(L(x, y+1) - L(x, y-1)\big)^{2}} \tag{9} $$

$$ \theta(x, y) = \tan^{-1}\left(\frac{L(x, y+1) - L(x, y-1)}{L(x+1, y) - L(x-1, y)}\right) \tag{10} $$

These two equations give the gradient magnitude and orientation at pixel $(x, y)$ of the smoothed image $L$ at the keypoint's scale. In the actual calculation, a gradient histogram is formed from the gradient orientations of sample points within a region around the keypoint. The orientation histogram has 36 bins covering the 360 degree range of orientations, so each bin of 10 degrees represents one direction and there are 36 directions in all. Each sample added to the histogram is weighted by its gradient magnitude and by a Gaussian-weighted circular window with $\sigma$ equal to 1.5 times the scale of the keypoint. The highest peak in the histogram is detected, and any other local peak within 80% of the highest peak is also used to create a keypoint with that orientation as its dominant direction. One keypoint location can therefore have more than one orientation.

D. Keypoint descriptor

The location, scale and orientation assigned in the previous steps define a repeatable local 2D coordinate system in which the image region is described, which makes the description invariant to these parameters.
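The descriptor relies on the orientation assigned in Section II.C. A minimal sketch of that assignment step is given here: Equations (9) and (10) are evaluated with central differences, and the results are accumulated into the 36-bin histogram with the 1.5σ Gaussian window and the 80% peak rule. Several details are assumptions made for the illustration and are not stated in the paper: the function name, the use of `atan2` to resolve the full 360 degree range, the window radius of three window sigmas, the rule that only strict local peaks are kept, and the image being a list of rows indexed as `L[y][x]` with y pointing down.

```python
import math

def dominant_orientations(L, x, y, scale, radius=None, num_bins=36, peak_ratio=0.8):
    """Return the histogram bin indices (10 degrees per bin when num_bins=36)
    of the dominant gradient orientations around the keypoint (x, y)."""
    sigma_w = 1.5 * scale                    # Gaussian window: 1.5 x keypoint scale
    if radius is None:
        radius = int(round(3 * sigma_w))     # window extent (assumption)
    bin_width = 360.0 / num_bins
    hist = [0.0] * num_bins
    height, width = len(L), len(L[0])

    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            px, py = x + dx, y + dy
            # Central differences need one valid neighbor on each side.
            if not (1 <= px < width - 1 and 1 <= py < height - 1):
                continue
            gx = L[py][px + 1] - L[py][px - 1]
            gy = L[py + 1][px] - L[py - 1][px]
            magnitude = math.hypot(gx, gy)                      # Eq. (9)
            theta = math.degrees(math.atan2(gy, gx)) % 360.0    # Eq. (10)
            weight = math.exp(-(dx * dx + dy * dy) / (2.0 * sigma_w * sigma_w))
            hist[int(theta // bin_width) % num_bins] += weight * magnitude

    peak = max(hist)
    if peak == 0.0:
        return []                            # flat region: no orientation

    orientations = []
    for b, value in enumerate(hist):
        left = hist[b - 1]                   # index -1 wraps to the last bin
        right = hist[(b + 1) % num_bins]
        if value >= peak_ratio * peak and value > left and value > right:
            orientations.append(b)
    return orientations

# Two synthetic intensity ramps with known gradient directions.
ramp_x = [[float(c) for c in range(15)] for r in range(15)]        # gradient along +x
ramp_diag = [[float(c + r) for c in range(15)] for r in range(15)]  # gradient at 45 degrees
bins_x = dominant_orientations(ramp_x, 7, 7, 1.0)
bins_diag = dominant_orientations(ramp_diag, 7, 7, 1.0)
```

On the two ramps, every sample votes for the same direction, so a single bin is returned: bin 0 for the horizontal ramp and bin 4 (40 to 50 degrees) for the diagonal one. Because more than one bin can pass the 80% rule, the function returns a list, matching the remark that one keypoint can have several orientations.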

Fig. 2. Keypoint orientation assignment

In this step, the descriptor of each keypoint is computed. Each keypoint is described by a 16x16 region around it. The region is divided into a 4x4 grid of subregions, and in each subregion a histogram with 8 orientation bins is calculated. Accumulating the gradient magnitudes of each subregion into its orientation histogram yields a seed point. Each seed point is an 8-dimensional vector, so a descriptor contains 4x4x8 = 128 elements in total.

III. HARRIS CORNER FEATURE

The Harris corner detector [6] relies on a central principle: at a corner, the image intensity changes strongly in multiple directions. This can alternatively be formulated by examining the changes of intensity due to shifts in a local window. Around a corner point, the image intensity changes greatly when the window is shifted in an arbitrary direction. Following this intuition and through a clever decomposition, the Harris detector uses the second moment matrix as the basis of its corner decisions. Extracting corners gives prominence to the important information in the image. This is described by the equation below.

$$ E(u, v) = \sum_{x, y} w(x, y) \big[ I(x + u, y + v) - I(x, y) \big]^{2} \tag{11} $$

where

• E is the difference between the original and the moved window

• u is the window's displacement in the x direction

• v is the window's displacement in the y direction

• w(x, y) is the window at position (x, y). It acts like a mask, ensuring that only the desired window is used

• I is the intensity of the image at a position (x, y)

• I(x + u, y + v) is the intensity of the moved window

• I(x, y) is the intensity of the original window

We are looking for windows that produce a large E value. To do that, we need high values of the term inside the square brackets. We expand this term using the Taylor series:

$$ E(u, v) \approx \sum_{x, y} w(x, y) \big[ I(x, y) + u I_{x} + v I_{y} - I(x, y) \big]^{2} \tag{12} $$

We rewrite this equation in matrix form:

$$ E(u, v) \approx \begin{bmatrix} u & v \end{bmatrix} \left( \sum_{x, y} w(x, y) \begin{bmatrix} I_{x}^{2} & I_{x} I_{y} \\ I_{x} I_{y} & I_{y}^{2} \end{bmatrix} \right) \begin{bmatrix} u \\ v \end{bmatrix} \tag{13} $$

We then name the summed matrix M:

$$ M = \sum_{x, y} w(x, y) \begin{bmatrix} I_{x}^{2} & I_{x} I_{y} \\ I_{x} I_{y} & I_{y}^{2} \end{bmatrix} \tag{14} $$

A Harris corner can be defined as a local maximum of the following response:

$$ R = \mathrm{Det}(M) - k \, \mathrm{Trace}^{2}(M) \tag{15} $$

where

$$ \mathrm{Det}(M) = \lambda_{1} \lambda_{2} \tag{16} $$

$$ \mathrm{Trace}(M) = \lambda_{1} + \lambda_{2} \tag{17} $$

and $\lambda_{1}$, $\lambda_{2}$ are the eigenvalues of $M$ and $k$ is an empirical constant. According to the formulas above, all windows whose score R is greater than a certain threshold are corners.

Fig. 3. Harris corner detection results

IV. CORRESPONDENCE POINTS MATCHING FROM HARRIS AND SIFT FEATURES

A. Correspondence points matching

Correspondences between Harris corner features previously detected in two images can be found by looking for points that are maximally correlated with each other within windows surrounding each point.
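Correlation-based matching of corner points can be sketched as follows. The paper only states that a correlation method is used, so the specifics here are assumptions made for the illustration: normalized cross-correlation (NCC) as the similarity measure, a square window of half-size `half`, keeping only pairs that are each other's best candidate, and all function names. Points are assumed to lie at least `half` pixels away from the image border.

```python
import math

def patch(image, x, y, half):
    """Flattened (2*half+1) x (2*half+1) window centered on (x, y); image[row][col]."""
    return [image[y + dy][x + dx]
            for dy in range(-half, half + 1)
            for dx in range(-half, half + 1)]

def ncc(a, b):
    """Normalized cross-correlation of two equally sized windows, in [-1, 1]."""
    mean_a, mean_b = sum(a) / len(a), sum(b) / len(b)
    da = [v - mean_a for v in a]
    db = [v - mean_b for v in b]
    denom = math.sqrt(sum(v * v for v in da)) * math.sqrt(sum(v * v for v in db))
    if denom == 0.0:
        return 0.0          # flat window: correlation undefined, treated as 0
    return sum(p * q for p, q in zip(da, db)) / denom

def match_mutual(image1, points1, image2, points2, half=1):
    """Return index pairs (i, j) such that point i of image 1 and point j of
    image 2 are each other's most correlated candidate."""
    if not points1 or not points2:
        return []
    scores = [[ncc(patch(image1, x1, y1, half), patch(image2, x2, y2, half))
               for (x2, y2) in points2]
              for (x1, y1) in points1]
    matches = []
    for i, row in enumerate(scores):
        j = max(range(len(row)), key=row.__getitem__)                    # best j for i
        best_i = max(range(len(scores)), key=lambda r: scores[r][j])     # best i for j
        if best_i == i:
            matches.append((i, j))
    return matches

# Toy example: two 3x3 patterns that swap places between the two images.
A = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]   # diagonal pattern
B = [[0, 0, 1], [0, 1, 0], [1, 0, 0]]   # anti-diagonal pattern

def place(patterns):
    """Build a 5x9 image with 3x3 patterns centered at (2, 2) and (6, 2)."""
    img = [[0] * 9 for _ in range(5)]
    for cx, pattern in zip((2, 6), patterns):
        for dy in range(3):
            for dx in range(3):
                img[1 + dy][cx - 1 + dx] = pattern[dy][dx]
    return img

corners = [(2, 2), (6, 2)]
matches = match_mutual(place([A, B]), corners, place([B, A]), corners)
```

In the toy example the two patterns are uncorrelated with each other, so point 0 of the first image pairs with point 1 of the second and vice versa. The cost grows with the product of the two point counts, which is acceptable for a few hundred corners per frame.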

point. Only points that correlate most strongly with each other Summarizations of these features are showed in the table

in both directions are returned. The demonstration result is below.

showed below.

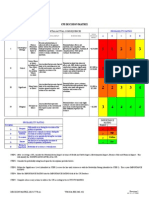

TABLE I. COMPARED PROPERTIES OF HARRIS AND SIFT FEATURES

Properties Harris corner SIFT features

features

Time consuming low high

Correctness low high

Robustness low high

V. CONCLUSIONS

This paper was implemented correspondence matching

Fig. 4. Matching points result from Harris features problem from Harris and SIFTS features. Based on the results

we can see that SIFT features are robust and correct for that

SIFT uses the Euclidean distance between two feature purpose. In future works, we improve matching of two

vectors as the similarity criteria of the two key points and uses methods by using RANSAC algorithm, calculate fundamental

the nearest neighbor algorithm to match each other. Suppose a matrix and reconstruct in 3D of outdoor objects.

feature point is selected in image 1, the nearest neighbor

feature point is defined as the key point with minimum metric VI. ACKNOWLEDGMENT

distance in image 2. By comparing the distance of the closest This research was supported by the MKE (The Ministry of

neighbor to that of the second-closest neighbor we can obtain Knowledge Economy), Korea, under the Human Resources

a more effective method to achieve more correct matches. Development Program for Convergence Robot Specialists

Given a threshold, if the ratio of the distance between the support program supervised by the NIPA(National IT Industry

closest neighbor and the second-closest neighbor is less than Promotion Agency) (NIPA-2010-C7000-1001-0007)

the threshold, then we have obtained a correct match.

REFERENCES

[1] Luo Juan and Oubong Gwun, “A Comparison of SIFT, PCA-SIFT and

SURF” International Journal of Image Processing, Volume 3, Issue 5,

2010

[2] Herbert Bay, Tinne Tuytelaars, Luc Van Gool, “SUFT: speeched up

robust features”, ECCV, Vol. 3951, pp. 404-417, 2006

[3] R. Hartley, A. Zisserman. “Multiple view geometry in computer vision”,

Cambridge University Press, 2000.

[4] D. Lowe, “Object recognition from local scale-invariant features”. Proc.

of the International Conference on Computer Vision, pp. 1150-1157

Fig. 5. Matching points result from SIFT features 1999

[5] D. Lowe, “Distinctive Image Features from Scale-Invariant Interest

B. Comparison Points”, International Journal of Computer Vision, Vol. 60, pp. 91-110,

Based on experiment results, we can see that 2004.

correspondence point from Harris features can be obtained [6] C. Harris, M. Stephens, “A combined corner and edge detector”,

Proceedings of the 4th Alvey Vision Conference, pp. 147-151,

with low time consuming but it is very difficult to get high Manchester, UK 1998.

correct point. Whereas from SIFT features we can get high

correctness and robustness correspondence points.
