
Memory Hierarchies (Part 2)

Uploaded by huyquy


Chapter 7


Outline of Lectures on Memory Systems

1. Memory Hierarchies
2. Cache Memory
3. Virtual Memory
4. The future

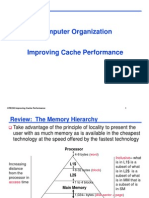

Review: The Memory Hierarchy

Take advantage of the principle of locality to present the user with as much memory as is available in the cheapest technology at the speed offered by the fastest technology

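[Figure: the memory hierarchy from the Processor through L1$, L2$, Main Memory, and Secondary Memory, showing increasing distance from the processor in access time and the (relative) size of the memory at each level]

Units of transfer between levels:

- Processor and L1$: 4-8 bytes (word)
- L1$ and L2$: 8-32 bytes (block)
- L2$ and Main Memory: 1 to 4 blocks
- Main Memory and Secondary Memory: 1,024+ bytes (disk sector = page)

Inclusive: what is in L1$ is a subset of what is in L2$, which is a subset of what is in MM, which is a subset of what is in SM.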


Review: Principle of Locality

- Temporal Locality: keep the most recently accessed data items closer to the processor
- Spatial Locality: move blocks consisting of contiguous words to the upper levels

[Figure: the processor exchanges data with the upper-level memory (holding Blk X), which exchanges blocks with the lower-level memory (holding Blk Y)]

- Hit: data appears in some block in the upper level (Blk X)
  - Hit Rate: the fraction of accesses found in the upper level
  - Hit Time: RAM access time + time to determine hit/miss
- Miss: data needs to be retrieved from a lower-level block (Blk Y)
  - Miss Rate = 1 - (Hit Rate)
  - Miss Penalty: time to replace a block in the upper level with a block from the lower level + time to deliver this block's word to the processor
  - Miss Types: Compulsory, Conflict, Capacity
- Hit Time << Miss Penalty

Measuring Cache Performance

Assuming cache hit costs are included as part of the normal CPU execution cycle, then

$$\text{CPU time} = IC \times CPI \times CC = IC \times (CPI_{ideal} + \text{Memory-stall cycles}) \times CC$$

where the term in parentheses is $CPI_{stall}$.

Memory-stall cycles come from cache misses (a sum of read-stalls and write-stalls):

$$\text{Read-stall cycles} = \frac{\text{reads}}{\text{program}} \times \text{read miss rate} \times \text{read miss penalty}$$

$$\text{Write-stall cycles} = \left(\frac{\text{writes}}{\text{program}} \times \text{write miss rate} \times \text{write miss penalty}\right) + \text{write buffer stalls}$$


Review: The Memory Wall

Logic vs DRAM speed gap continues to grow.

[Figure: log-scale plot (0.01 to 1000) of clocks per instruction for the core and clocks per DRAM access for memory, across VAX/1980, PPro/1996, and 2010+]

Impacts of Cache Performance

- Relative cache penalty increases as processor performance improves (faster clock rate and/or lower CPI)
  - The memory speed is unlikely to improve as fast as processor cycle time. When calculating $CPI_{stall}$, the cache miss penalty is measured in processor clock cycles needed to handle a miss
  - The lower the $CPI_{ideal}$, the more pronounced the impact of stalls
- A processor with a $CPI_{ideal}$ of 2, a 100 cycle miss penalty, 36% load/store instructions, and 2% I$ and 4% D$ miss rates:

$$\text{Memory-stall cycles} = 2\% \times 100 + 36\% \times 4\% \times 100 = 3.44$$

$$CPI_{stall} = 2 + 3.44 = 5.44$$

- What if the $CPI_{ideal}$ is reduced to 1? 0.5? 0.25?
- What if the processor clock rate is doubled (doubling the miss penalty)?
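The two "what if" questions in the CPI example can be explored with a small calculation. The sketch below simply re-evaluates the stall formula from that example; the function name `cpi_with_stalls` and its parameter names are illustrative choices, not part of the lecture.

```python
def cpi_with_stalls(cpi_ideal, miss_penalty, ls_frac=0.36, i_miss=0.02, d_miss=0.04):
    """Effective CPI = ideal CPI + memory-stall cycles per instruction."""
    # Every instruction is fetched through the I$; only loads/stores touch the D$.
    stall_cycles = i_miss * miss_penalty + ls_frac * d_miss * miss_penalty
    return cpi_ideal + stall_cycles


if __name__ == "__main__":
    # Lower the ideal CPI while keeping the 100-cycle miss penalty.
    for cpi_ideal in (2, 1, 0.5, 0.25):
        total = cpi_with_stalls(cpi_ideal, 100)
        share = (total - cpi_ideal) / total
        print(f"CPIideal={cpi_ideal}: CPIstall={total:.2f} ({share:.0%} of cycles are stalls)")

    # Doubling the clock rate doubles the miss penalty measured in cycles.
    print(f"CPIideal=2, 200-cycle penalty: CPIstall={cpi_with_stalls(2, 200):.2f}")
```

The stall term stays at 3.44 cycles per instruction regardless of the ideal CPI, so its share of the total grows as the ideal CPI shrinks.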


Reducing Cache Miss Rates: Method 1

1. Allow more flexible block placement

- In a direct mapped cache a memory block maps to exactly one cache block
- At the other extreme, could allow a memory block to be mapped to any cache block: a fully associative cache
- A compromise is to divide the cache into sets, each of which consists of n "ways" (n-way set associative). A memory block maps to a unique set (specified by the index field) and can be placed in any way of that set (so there are n choices)

(block address) modulo (# sets in the cache)
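As a rough illustration of the three placement policies, the sketch below lists the cache slots a memory block could occupy. The function name `candidate_slots` and the slot numbering (set s owns slots s*ways through s*ways + ways - 1) are assumptions made for this example only.

```python
def candidate_slots(block_address, num_blocks, ways):
    """Return the cache slots where a memory block may be placed."""
    num_sets = num_blocks // ways
    # (block address) modulo (# sets in the cache)
    set_index = block_address % num_sets
    # Hypothetical layout: the ways of one set occupy consecutive slots.
    return [set_index * ways + way for way in range(ways)]


if __name__ == "__main__":
    # An 8-block cache and memory block 12 under each policy.
    print(candidate_slots(12, 8, 1))  # direct mapped: one choice
    print(candidate_slots(12, 8, 2))  # 2-way set associative: two choices
    print(candidate_slots(12, 8, 8))  # fully associative: any slot
```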

Set Associative Cache Example

[Figure: a cache with two ways (0 and 1), each with two sets (0 and 1) and V, Tag, and Data fields per block, next to a main memory of 16 one-word blocks at addresses 0000xx through 1111xx]

- One word blocks
- Two low order bits define the byte in the word (32-b words)
- Q1: Is it there? Compare all the cache tags in the set to the high order 3 memory address bits to tell if the memory block is in the cache
- Q2: How do we find it? Use the next 1 low order memory address bit to determine which cache set (i.e., modulo the number of sets in the cache)
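The field split described in Q1 and Q2 can be sketched as bit operations on the 6-bit addresses of this example: 3 tag bits, 1 set-index bit, 2 byte-offset bits. The helper name `split_address` is hypothetical.

```python
def split_address(addr, index_bits=1, offset_bits=2):
    """Split an address into (tag, set index, byte offset)."""
    byte_offset = addr & ((1 << offset_bits) - 1)
    set_index = (addr >> offset_bits) & ((1 << index_bits) - 1)
    tag = addr >> (offset_bits + index_bits)
    return tag, set_index, byte_offset


if __name__ == "__main__":
    for addr in (0b011010, 0b110100):
        tag, set_index, byte_offset = split_address(addr)
        print(f"{addr:06b}: tag={tag:03b} set={set_index} byte={byte_offset}")
```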


Direct Mapped Cache

Consider the main memory word reference string 0 4 0 4 0 4 0 4. Start with an empty cache - all blocks initially marked as not valid.

- 0 miss: Mem(0) is loaded with tag 00
- 4 miss: Mem(4) is loaded with tag 01, replacing Mem(0)
- 0 miss: Mem(0) is loaded with tag 00, replacing Mem(4)
- 4 miss: Mem(4) is loaded with tag 01, replacing Mem(0)
- The remaining four references repeat the same pattern: 0 miss, 4 miss, 0 miss, 4 miss

8 requests, 8 misses. Ping pong effect due to conflict misses - two memory locations that map into the same cache block.

Two Way Set Associative Cache

Consider the main memory word reference string 0 4 0 4 0 4 0 4. Start with an empty cache - all blocks initially marked as not valid.

- 0 miss: Mem(0) is loaded with tag 000
- 4 miss: Mem(4) is loaded with tag 010 into the other way of the same set
- 0 hit
- 4 hit
- The remaining four references all hit

8 requests, 2 misses. Solves the ping pong effect in a direct mapped cache due to conflict misses, since now two memory locations that map into the same cache set can co-exist!
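The two traces can be reproduced with a tiny simulator. This is a minimal sketch, assuming a cache of four one-word blocks (consistent with the 2-bit and 3-bit tags in the traces) and LRU replacement within a set; `count_misses` is a name chosen for this example.

```python
from collections import OrderedDict


def count_misses(refs, num_blocks, ways):
    """Count misses for a list of word addresses, one-word blocks, LRU per set."""
    num_sets = num_blocks // ways
    # One OrderedDict per set; keys are tags, ordered least to most recently used.
    sets = [OrderedDict() for _ in range(num_sets)]
    misses = 0
    for block_addr in refs:
        index = block_addr % num_sets
        tag = block_addr // num_sets
        lines = sets[index]
        if tag in lines:
            lines.move_to_end(tag)          # hit: mark as most recently used
        else:
            misses += 1
            if len(lines) == ways:
                lines.popitem(last=False)   # set is full: evict the LRU block
            lines[tag] = True
    return misses


if __name__ == "__main__":
    refs = [0, 4, 0, 4, 0, 4, 0, 4]
    print("direct mapped:", count_misses(refs, num_blocks=4, ways=1), "misses")
    print("2-way set associative:", count_misses(refs, num_blocks=4, ways=2), "misses")
```

With `ways=1` words 0 and 4 share set 0 and keep evicting each other; with `ways=2` they share a set but occupy different ways.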


Four-Way Set Associative Cache

2^8 = 256 sets, each with four ways (each with one block)

[Figure: a 32-bit address (bits 31 to 0) split into a 22-bit tag, an 8-bit index, and a byte offset. The index selects one of entries 0 to 255 in each of the four ways, each entry holding V, Tag, and Data fields. The four tags are compared in parallel and a 4x1 select delivers the 32-bit Data together with the Hit signal.]
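The field widths in the four-way figure can be derived from the cache geometry. The sketch below assumes power-of-two sizes and a 4 KB capacity, which follows from 256 sets x 4 ways x one 4-byte word; `field_widths` is an illustrative helper, not something from the slides.

```python
def field_widths(cache_bytes, block_bytes, ways, addr_bits=32):
    """Return (tag bits, index bits, offset bits) for power-of-two geometries."""
    num_blocks = cache_bytes // block_bytes
    num_sets = num_blocks // ways
    # For a power of two n, (n - 1).bit_length() equals log2(n).
    offset_bits = (block_bytes - 1).bit_length()
    index_bits = (num_sets - 1).bit_length()
    tag_bits = addr_bits - index_bits - offset_bits
    return tag_bits, index_bits, offset_bits


if __name__ == "__main__":
    for ways in (1, 2, 4, 8, 1024):
        tag, index, offset = field_widths(4096, 4, ways)
        print(f"{ways:>4}-way: tag={tag} index={index} offset={offset}")
```

Running it for 1, 2, 4, and 8 ways shows one bit moving from the index to the tag each time the associativity doubles; 1024 ways is the fully associative case with no index bits.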


Range of Set Associative Caches

For a fixed size cache, each increase by a factor of two in associativity doubles the number of blocks per set (i.e., the number of ways) and halves the number of sets. This decreases the size of the index by 1 bit and increases the size of the tag by 1 bit.

Address fields:

- Tag: used for tag compare
- Index: selects the set
- Block offset: selects the word in the block
- Byte offset

The two extremes:

- Decreasing associativity: direct mapped (only one way), smaller tags
- Increasing associativity: fully associative (only one set), tag is all the bits except block and byte offset

Costs of Set Associative Caches

- When a miss occurs, which way's block do we pick for replacement?
  - Least Recently Used (LRU): the block replaced is the one that has been unused for the longest time
  - Must have hardware to keep track of when each way's block was used relative to the other blocks in the set
  - For 2-way set associative, takes one bit per set: set the bit when a block is referenced (and reset the other way's bit)
- N-way set associative cache costs
  - N comparators (delay and area)
  - MUX delay (set selection) before data is available
  - Data available after set selection (and Hit/Miss decision). In a direct mapped cache, the cache block is available before the Hit/Miss decision, so in a set associative cache it is not possible to just assume a hit and continue and recover later if it was a miss
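The one-bit-per-set LRU scheme for a 2-way cache can be modeled in a few lines. This is a sketch of one possible encoding, in which the bit records the most recently used way; the class and method names are hypothetical, and real hardware may encode the bit differently.

```python
class TwoWayLRU:
    """One bit of LRU state per set for a 2-way set associative cache."""

    def __init__(self, num_sets):
        # mru[s] is the way (0 or 1) of set s that was referenced most recently.
        self.mru = [0] * num_sets

    def touch(self, set_index, way):
        """Record a reference to the given way of the given set."""
        self.mru[set_index] = way

    def victim(self, set_index):
        """Return the way to replace: the one that was not used most recently."""
        return 1 - self.mru[set_index]


if __name__ == "__main__":
    lru = TwoWayLRU(num_sets=2)
    lru.touch(0, 0)
    print("replace way", lru.victim(0))
    lru.touch(0, 1)
    print("replace way", lru.victim(0))
```

Higher associativity needs more state than this, because the full recency order of more than two ways cannot be captured in one bit.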


Benefits of Set Associative Caches

The choice of direct mapped or set associative depends on the cost of a miss versus the cost of implementation.

[Figure: miss rate (0 to 12) versus associativity (1-way, 2-way, 4-way, 8-way) for cache sizes of 4KB, 8KB, 16KB, 32KB, 64KB, 128KB, 256KB, and 512KB. Data from Hennessy & Patterson, Computer Architecture, 2003]

Largest gains are in going from direct mapped to 2-way (20%+ reduction in miss rate).

Reducing Cache Miss Rates: Method 2

2. Use multiple levels of caches

- With advancing technology, we can have more than enough room on the die for bigger L1 caches or for a second level of caches: normally a unified L2 cache (i.e., it holds both instructions and data) and in some cases even a unified L3 cache
- For our example, $CPI_{ideal}$ of 2, 100 cycle miss penalty (to main memory), 36% load/stores, a 2% (4%) L1I$ (D$) miss rate, add a UL2$ that has a 25 cycle miss penalty and a 0.5% miss rate:

$$CPI_{stall} = 2 + 0.02 \times 25 + 0.36 \times 0.04 \times 25 + 0.005 \times 100 + 0.36 \times 0.005 \times 100 = 3.54$$

(as compared to 5.44 with no L2$)
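The two-level CPI figure for the L2 example can be checked numerically. The sketch below mirrors the formula term by term and assumes, as the formula does, that every L1 miss is charged 25 cycles and that 0.5% of all instruction fetches and data accesses go on to main memory at 100 cycles each; `cpi_two_level` and its parameter names are illustrative.

```python
def cpi_two_level(cpi_ideal=2, ls_frac=0.36, l1i_miss=0.02, l1d_miss=0.04,
                  l2_penalty=25, l2_miss=0.005, mem_penalty=100):
    """CPI including stalls for an L1 I$/D$ pair backed by a unified L2."""
    # L1 misses: instruction fetches plus the load/store fraction of data accesses.
    l1_stalls = (l1i_miss + ls_frac * l1d_miss) * l2_penalty
    # L2 misses: the same two access streams, at the main-memory penalty.
    l2_stalls = (1 + ls_frac) * l2_miss * mem_penalty
    return cpi_ideal + l1_stalls + l2_stalls


if __name__ == "__main__":
    print(f"CPI with L2: {cpi_two_level():.2f}")
```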


Multilevel Cache Design Considerations

- Design considerations for L1 and L2 caches are very different
  - Primary cache should focus on minimizing hit time in support of a shorter clock cycle
    - Smaller with smaller block sizes
  - Secondary cache(s) should focus on reducing miss rate to reduce the penalty of long main memory access times
    - Larger with larger block sizes
- The miss penalty of the L1 cache is significantly reduced by the presence of an L2 cache, so it can be smaller (i.e., faster) but have a higher miss rate
- For the L2 cache, hit time is less important than miss rate
  - The L2$ hit time determines L1$'s miss penalty
  - L2$ local miss rate >> the global miss rate

Key Cache Design Parameters

| | L1 typical | L2 typical |
|---|---|---|
| Total size (blocks) | 250 to 2000 | 4000 to 250,000 |
| Total size (KB) | 16 to 64 | 500 to 8000 |
| Block size (B) | 32 to 64 | 32 to 128 |
| Miss penalty (clocks) | 10 to 25 | 100 to 1000 |
| Miss rates (global for L2) | 2% to 5% | 0.1% to 2% |
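To illustrate why the L2$ local miss rate is much larger than the global miss rate: the global rate counts L2 misses against all accesses, while the local rate counts them only against the accesses that reach the L2 (the L1 misses), so global = L1 miss rate x L2 local miss rate. The numbers below (4% L1 miss rate, 0.5% global L2 miss rate) are illustrative assumptions, and `l2_local_miss_rate` is a hypothetical helper.

```python
def l2_local_miss_rate(l1_miss_rate, l2_global_miss_rate):
    """Local L2 miss rate = L2 misses divided by L2 accesses (i.e., L1 misses)."""
    # global L2 miss rate = L1 miss rate * local L2 miss rate
    return l2_global_miss_rate / l1_miss_rate


if __name__ == "__main__":
    local = l2_local_miss_rate(l1_miss_rate=0.04, l2_global_miss_rate=0.005)
    print(f"local L2 miss rate: {local:.1%}")
```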


Two Machines' Cache Parameters

| | Intel P4 | AMD Opteron |
|---|---|---|
| L1 organization | Split I$ and D$ | Split I$ and D$ |
| L1 cache size | 8KB for D$, 96KB for trace cache (~I$) | 64KB for each of I$ and D$ |
| L1 block size | 64 bytes | 64 bytes |
| L1 associativity | 4-way set assoc. | 2-way set assoc. |
| L1 replacement | ~LRU | LRU |
| L1 write policy | write-through | write-back |
| L2 organization | Unified | Unified |
| L2 cache size | 512KB | 1024KB (1MB) |
| L2 block size | 128 bytes | 64 bytes |
| L2 associativity | 8-way set assoc. | 16-way set assoc. |
| L2 replacement | ~LRU | ~LRU |
| L2 write policy | write-back | write-back |

Four Questions for the Memory Hierarchy

- Q1: Where can a block be placed in the upper level? (Block placement)
- Q2: How is a block found if it is in the upper level? (Block identification)
- Q3: Which block should be replaced on a miss? (Block replacement)
- Q4: What happens on a write? (Write strategy)


Q1&Q2: Where can a block be placed/found?

| | # of sets | Blocks per set |
|---|---|---|
| Direct mapped | # of blocks in cache | 1 |
| Set associative | (# of blocks in cache) / associativity | Associativity (typically 2 to 16) |
| Fully associative | 1 | # of blocks in cache |

| | Location method | # of comparisons |
|---|---|---|
| Direct mapped | Index | 1 |
| Set associative | Index the set; compare set's tags | Degree of associativity |
| Fully associative | Compare all blocks' tags | # of blocks |

Q3: Which block should be replaced on a miss?

- Easy for direct mapped: only one choice
- Set associative or fully associative
  - Random
  - LRU (Least Recently Used)
- For a 2-way set associative cache, random replacement has a miss rate about 1.1 times higher than LRU
- LRU is too costly to implement for high levels of associativity (> 4-way) since tracking the usage information is costly


Q4: What happens on a write?

- Write-through: the information is written to both the block in the cache and the block in the next lower level of the memory hierarchy
  - Write-through is always combined with a write buffer so write waits to lower level memory can be eliminated (as long as the write buffer doesn't fill)
- Write-back: the information is written only to the block in the cache. The modified cache block is written to the next lower level of memory only when it is replaced
  - Need a dirty bit to keep track of whether the block is clean or dirty
- Pros and cons of each?
  - Write-through: read misses don't result in writes (so are simpler and cheaper)
  - Write-back: repeated writes require only one write to lower level
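The difference in lower-level write traffic between the two policies can be seen in a toy model of a single cached block. This is a sketch for illustration only; the class `OneBlockCache` and its fields are hypothetical, and the write buffer is not modeled.

```python
class OneBlockCache:
    """Toy model of one cached block under write-through or write-back."""

    def __init__(self, write_back):
        self.write_back = write_back
        self.dirty = False            # only meaningful for write-back
        self.lower_level_writes = 0   # writes that reach the next memory level

    def write(self):
        if self.write_back:
            self.dirty = True               # update the cache only, mark dirty
        else:
            self.lower_level_writes += 1    # write-through: also update lower level

    def evict(self):
        if self.write_back and self.dirty:
            self.lower_level_writes += 1    # dirty block written back once
            self.dirty = False


if __name__ == "__main__":
    for name, write_back in (("write-through", False), ("write-back", True)):
        block = OneBlockCache(write_back)
        for _ in range(10):
            block.write()
        block.evict()
        print(f"{name}: {block.lower_level_writes} lower-level writes")
```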

Improving Cache Performance

0. Reduce the time to hit in the cache

- smaller cache
- direct mapped cache
- smaller blocks
- for writes
  - no write allocate: no "hit" on cache, just write to write buffer
  - write allocate: to avoid two cycles (first check for hit, then write), pipeline writes via a delayed write buffer to cache

1. Reduce the miss rate

- bigger cache
- more flexible placement (increase associativity)
- larger blocks (16 to 64 bytes typical)
- victim cache: small buffer holding most recently discarded blocks


Improving Cache Performance

2. Reduce the miss penalty

- smaller blocks
- use a write buffer to hold dirty blocks being replaced so you don't have to wait for the write to complete before reading
- check write buffer (and/or victim cache) on read miss: may get lucky
- for large blocks fetch critical word first
- use multiple cache levels: L2 cache not tied to CPU clock rate
- faster backing store/improved memory bandwidth
  - wider buses
  - memory interleaving, page mode DRAMs

Summary: The Cache Design Space

- Several interacting dimensions
  - cache size
  - block size
  - associativity
  - replacement policy
  - write-through vs write-back
  - write allocation
- The optimal choice is a compromise
  - depends on access characteristics
    - workload
    - use (I-cache, D-cache, TLB)
  - depends on technology / cost
- Simplicity often wins

[Figure: the design space drawn with Cache Size, Associativity, and Block Size axes, alongside a Good-to-Bad curve plotted against Factor A and Factor B ranging from Less to More]


Review: Handling Cache Misses

- Read misses (I$ and D$): stall the entire pipeline, fetch the block from the next level in the memory hierarchy, install it in the cache and send the requested word to the processor, then let the pipeline resume
- Write misses (D$ only)
  1. Stall the pipeline, fetch the block from the next level in the memory hierarchy, install it in the cache (which may involve having to evict a dirty block if using a write-back cache), write the word from the processor to the cache, then let the pipeline resume
  2. Write allocate: just write the word into the cache updating both the tag and data, no need to check for cache hit, no need to stall (normally used in write-back caches)
  3. No-write allocate: skip the cache write and just write the word to the write buffer (and eventually to the next memory level), no need to stall if the write buffer isn't full; must invalidate the cache block since it will be inconsistent (now holding stale data). No-write allocate is normally used in write-through caches with a write buffer
