Documente Academic

Documente Profesional

Documente Cultură

Dissertacao FilipeTMarques Infraestrutura de TI

Încărcat de

carlos_reis_19Drepturi de autor

Formate disponibile

Partajați acest document

Partajați sau inserați document

Vi se pare util acest document?

Este necorespunzător acest conținut?

Raportați acest documentDrepturi de autor:

Formate disponibile

Dissertacao FilipeTMarques Infraestrutura de TI

Încărcat de

carlos_reis_19Drepturi de autor:

Formate disponibile

Universidade Federal de Campina Grande

Centro de Engenharia Eltrica e Informtica

Coordenao de Ps-Graduao em Cincia da Computao

Dissertao de Mestrado

Projeto de Infra-estrutura de TI pela Perspectiva de

Negcio

Filipe Teixeira Marques

Campina Grande, Paraba, Brasil

Julho - 2006

Projeto de Infra-estrutura de TI pela Perspectiva de

Negcio

Filipe Teixeira Marques

Dissertao submetida Coordenao de Ps-Graduao em Cincia da

Computao da Universidade Federal de Campina Grande - Campus I

como parte dos requisitos necessrios para obteno do grau de Mestre

em Informtica.

rea de Concentrao: Cincia da Computao

Linha de Pesquisa: Redes de Computadores e Sistemas Distribudos

(Gesto da Tecnologia de Informao)

Jacques Philippe Sauv

(Orientador)

Campina Grande, Paraba, Brasil

c Filipe Teixeira Marques, Julho 2006

Resumo

Considerando o papel crucial que a Tecnologia da Informao (TI) desempenha ao apoiar

e melhorar as operaes de negcio, as empresas esto mais dependentes de seus servios e

infra-estrutura. Este fato implica em uma maior ateno para com os servios de TI, os quais

devem ser cuidadosamente projetados e mantidos para suportar as necessidades do negcio.

As abordagens convencionais tratam o projeto de infra-estruturas escolhendo a congurao

de menor custo que suporte ecientemente os servios de TI. No entanto, as necessidades do

negcio vo muito alm do custo da infra-estrutura e precisam ser capturadas e relacionadas

s questes tcnicas de TI. Esta dissertao apresenta uma investigao formal de tcnicas

de projeto de infra-estruturas de TI e acordos de nveis de servios onde as mtricas de

negcio so levadas em considerao - esta a principal diferena em relao s abordagens

existentes. A abordagem proposta baseada no conceito de Business-Driven IT Management

(BDIM), que estabelece uma relao formal entre a infra-estrutura de TI, os servios de TI e

o negcio propriamente dito.

i

Abstract

Since Information Technology (IT) plays a crucial role in leveraging the business opera-

tions, corporations are getting more dependent on their IT services and infrastructure. This

high level of criticality has signicantly raised the visibility of IT services and these must

be designed and provisioned ever more carefully to support business needs. The conven-

tional capacity planning approaches tries to solve this problem by choosing the most cost-

effective IT infrastructure conguration alternative. However, the needs of the business must

somehow be captured and linked to the IT world so that adequate service be designed and

deployed. This dissertation presents a formal investigation of techniques for designing IT in-

frastructure and Service Level Agreements, where business metrics are considered that is

the main difference from existing approaches. The proposed approach is based on Business-

Driven IT Management (BDIM). BDIM establishes a formal link between IT infrastructure,

IT services and the business itself.

ii

Agradecimentos

Em primeiro lugar agradeo a Deus.

A minha "verdadeira"famlia pelo imenso amor, apoio e incentivo recebido durante

todo esse perodo; sem isso no teria conseguido. Aos meus irmos Rodrigo (vov), Rafa

(hipopotamo) e Flvio pelo companheirismo.

Agradeo imensamente ao professor Jacques, um verdadeiro mestre, pela sua orientao.

Tambm, sou grato aos professores Anto Moura e Marcus Sampaio pelas inmeras dis-

cusses e ensinamentos. Em especial, agradeo tambm ao professor Dalton pelas palavras

certas em um momento crucial.

Aos poucos verdadeiros amigos que, mesmo diante das minhas ausncias semanais e no

momento de maior escurido da minha vida, mostraram a essncia da verdadeira amizade.

Ao resto do pessoal do grupo Bottom-Line (Rodrigo Rebouas e Rodrigo "Biscoito")

pelos incontveis debates, momentos de descontrao e consultorias diversas. Tambm sou

grato aos amigos(as) do mestrado pelo companheirismo, pelos momentos de descontraes

e apoio.

A Aninha e Lili, por terem sido to prestativas e carinhosas.

Agradeo aos amigos Flvio, Fbio (Tio Binho), Gustavo e Lauro, os quais foram meus

companheiros de casa em Campina Grande, por terem, literalmente, me "suportado"durante

todo esse tempo.

Agradeo a todos aqueles que apesar de j no fazerem parte da minha vida, tiveram

fundamentais importncia e inuncia na minha caminhada; em especial, meu eterno tio

Hendrik e meus avs Toinho e Teixeira.

Agradeo em especial a Elise Marianni. Apesar de tudo, deixar de reconhecer sua con-

tribuio seria extremamente injusto diante de seu apoio e incentivo.

iii

Contedo

1 Introduo 1

1.0.1 Gerncia de Servios de TI . . . . . . . . . . . . . . . . . . . . . . 2

1.0.2 BDIM: uma extenso Gerncia de Servios de TI . . . . . . . . . 4

1.1 Objetivos . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 6

1.2 Organizao da Dissertao . . . . . . . . . . . . . . . . . . . . . . . . . . 7

2 Projetando Infra-estruturas de TI e Acordos de Nveis de Servios 8

2.1 Abstrao da Infra-estrutura de TI . . . . . . . . . . . . . . . . . . . . . . 11

2.2 Sobre os problemas de projeto de infra-estruturas e SLAs . . . . . . . . . . 12

2.3 Sobre o modelo de custo da infra-estrutura de TI . . . . . . . . . . . . . . . 13

2.4 Sobre o modelo particular de impacto negativo . . . . . . . . . . . . . . . 14

2.5 Sobre o modelo de disponibilidade do servio de TI . . . . . . . . . . . . . 15

2.6 Sobre a probabilidade de desistncia . . . . . . . . . . . . . . . . . . . . . 15

3 Resultados da Pesquisa 17

3.1 Projeto timo de Infra-Estruturas de TI pela Perspectiva do Negcio . . . . 17

3.2 Estudo do Efeito de Picos de Demanda no Projeto timo de Infra-Estruturas

de TI pela Perspectiva do Negcio . . . . . . . . . . . . . . . . . . . . . . 19

3.3 Negociao tima de SLAs Internos pela Perspectiva do Negcio . . . . . 20

3.4 Negociao tima de SLAs Envolvendo Servios Terceirizados pela Per-

spectiva do Negcio . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 21

4 Discusso sobre a Validao dos Modelos 23

iv

CONTEDO v

5 Concluses e Trabalhos Futuros 27

5.1 Concluses . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 29

5.2 Trabalhos Futuros . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 31

A Optimal Design of e-Commerce Site Infrastructure from a Business

Perspective 43

A.1 Introduction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 44

A.2 Informal Problem Description . . . . . . . . . . . . . . . . . . . . . . . . 45

A.3 Problem Formalization . . . . . . . . . . . . . . . . . . . . . . . . . . . . 46

A.3.1 The Design Optimization Problem . . . . . . . . . . . . . . . . . . 46

A.3.2 Characterizing the Infrastructure . . . . . . . . . . . . . . . . . . . 47

A.3.3 The Response Time Performance Model . . . . . . . . . . . . . . . 49

A.3.4 The Business Impact Model . . . . . . . . . . . . . . . . . . . . . 54

A.4 An Example E-Commerce Site Design . . . . . . . . . . . . . . . . . . . . 55

A.5 Related Work . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 61

A.6 Conclusions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 63

B Business-Oriented Capacity Planning of IT Infrastructure to handle

Load Surges 65

B.1 Introduction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 66

B.2 Capacity Planning Using a Cost Perspective . . . . . . . . . . . . . . . . . 66

B.2.1 IT Infrastructure Abstraction . . . . . . . . . . . . . . . . . . . . . 66

B.2.2 Cost-Oriented Capacity Planning Formalization . . . . . . . . . . . 68

B.3 Adding a Business-Oriented View . . . . . . . . . . . . . . . . . . . . . . 69

B.3.1 Handling Load Surges: The Challenge . . . . . . . . . . . . . . . . 69

B.3.2 Loss Model Components . . . . . . . . . . . . . . . . . . . . . . . 70

B.4 Evaluating the Model: an Example . . . . . . . . . . . . . . . . . . . . . . 70

B.5 Conclusions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 74

C SLA Design from a Business Perspective 76

C.1 Introduction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 77

C.2 Gaining a Business Perspective on IT Operations . . . . . . . . . . . . . . 77

CONTEDO vi

C.2.1 Addressing IT Problems through Business Impact Management . . 78

C.2.2 SLA Design: An Optimization Problem . . . . . . . . . . . . . . . 78

C.3 Problem Formalization . . . . . . . . . . . . . . . . . . . . . . . . . . . . 79

C.3.1 The Entities and their Relationships . . . . . . . . . . . . . . . . . 79

C.3.2 The Cost Model . . . . . . . . . . . . . . . . . . . . . . . . . . . 80

C.3.3 Loss Considerations . . . . . . . . . . . . . . . . . . . . . . . . . 80

C.3.4 The SLA Design Problem . . . . . . . . . . . . . . . . . . . . . . 82

C.3.5 A Specic Loss Model . . . . . . . . . . . . . . . . . . . . . . . . 83

C.3.6 The Availability Model . . . . . . . . . . . . . . . . . . . . . . . . 84

C.3.7 The Response Time Performance Model . . . . . . . . . . . . . . . 84

C.4 A Numerical Example of SLA Design . . . . . . . . . . . . . . . . . . . . 86

C.5 Related Work . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 89

C.6 Conclusions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 90

D Business-Driven Design of Infrastructures for IT Services 92

D.1 Introduction: The Problem . . . . . . . . . . . . . . . . . . . . . . . . . . 92

D.2 A Method to Capture the Business Perspective in IT Infrastructure Design . 95

D.2.1 A Layered Model . . . . . . . . . . . . . . . . . . . . . . . . . . . 95

D.2.2 Optimization problems dealing with infrastructure design . . . . . . 98

D.3 Performance Models . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 99

D.3.1 Calculating infrastructure cost . . . . . . . . . . . . . . . . . . . . 100

D.3.2 Calculating business loss from a general business impact model . . 101

D.3.3 Calculating service availability . . . . . . . . . . . . . . . . . . . . 103

D.3.4 Calculating customer defection probability . . . . . . . . . . . . . 105

D.4 Application of the Method and Models . . . . . . . . . . . . . . . . . . . . 114

D.4.1 Behavior of the loss metric . . . . . . . . . . . . . . . . . . . . . . 114

D.4.2 Comparing business-oriented design with ad hoc design . . . . . . 117

D.4.3 The inuence of business process importance . . . . . . . . . . . . 120

D.4.4 Provisioning two services . . . . . . . . . . . . . . . . . . . . . . 121

D.4.5 The effect of varying load on optimal design . . . . . . . . . . . . 122

D.4.6 Designing infrastructure for load surges . . . . . . . . . . . . . . . 124

CONTEDO vii

D.5 Related Work . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 127

D.6 Conclusions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 131

E Business-Driven Service Level Agreement Negotiation and Service

Provisioning 134

E.1 Introduction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 135

E.2 Related Work . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 136

E.3 Conventional SLA Negotiation Approach . . . . . . . . . . . . . . . . . . 138

E.3.1 IT Infrastructure Basics . . . . . . . . . . . . . . . . . . . . . . . . 138

E.3.2 Dening and evaluating IT metrics . . . . . . . . . . . . . . . . . . 139

E.3.3 Dening and evaluating business metrics . . . . . . . . . . . . . . 141

E.3.4 Conventional SLA Negotiation Formalization . . . . . . . . . . . . 143

E.4 Business-Driven SLA Negotiation Approach . . . . . . . . . . . . . . . . . 144

E.4.1 Improving the Service Customer-Client Linkage . . . . . . . . . . 145

E.4.2 Business-Driven SLA Negotiation . . . . . . . . . . . . . . . . . . 147

E.5 Evaluating the Approach: an Example . . . . . . . . . . . . . . . . . . . . 148

E.6 Conclusions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 153

Lista de Figuras

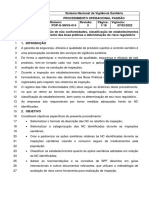

2.1 Esboo da Soluo Proposta . . . . . . . . . . . . . . . . . . . . . . . . . 9

2.2 Principais Entidades do Modelo . . . . . . . . . . . . . . . . . . . . . . . 12

A.1 Model entities . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 45

A.2 CBMG for the e-commerce site . . . . . . . . . . . . . . . . . . . . . . . . 51

A.3 E-commerce Business Loss . . . . . . . . . . . . . . . . . . . . . . . . . . 55

A.4 Sensitivity of total cost plus loss due to load . . . . . . . . . . . . . . . . . 59

A.5 Sensitivity of cost and loss due to load . . . . . . . . . . . . . . . . . . . . 61

A.6 Response time for various designs . . . . . . . . . . . . . . . . . . . . . . 62

B.1 Load States . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 67

B.2 Cost and BDIM Dimensions Comparison . . . . . . . . . . . . . . . . . . 72

B.3 Inuence of Surge Duration on Optimal Design . . . . . . . . . . . . . . . 73

B.4 Inuence of Surge Height on Optimal Design . . . . . . . . . . . . . . . . 74

B.5 Comparison of Three Design Alternatives . . . . . . . . . . . . . . . . . . 75

C.1 Entities and their relationships . . . . . . . . . . . . . . . . . . . . . . . . 81

C.2 Effect of Load on Loss . . . . . . . . . . . . . . . . . . . . . . . . . . . . 88

C.3 Sensitivity of Loss due to Redundancy . . . . . . . . . . . . . . . . . . . . 88

D.1 Model Layers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 95

D.2 Cost and Availability Model Entities . . . . . . . . . . . . . . . . . . . . . 100

D.3 Business Impact Model Crossing the IT-Business Gap . . . . . . . . . . . 102

D.4 CBMG for the e-commerce site . . . . . . . . . . . . . . . . . . . . . . . . 107

D.5 Customer behavior model . . . . . . . . . . . . . . . . . . . . . . . . . . . 108

D.6 Flow of a request between tiers and cache model . . . . . . . . . . . . . . 109

viii

LISTA DE FIGURAS ix

D.7 Sensitivity of Loss due to Redundancy . . . . . . . . . . . . . . . . . . . . 115

D.8 Effect of Load on Loss . . . . . . . . . . . . . . . . . . . . . . . . . . . . 117

D.9 Inuence of BP importance on optimal design . . . . . . . . . . . . . . . . 119

D.10 Inuence of load on optimal design . . . . . . . . . . . . . . . . . . . . . . 120

D.11 Variation of Optimal Design due to Different BPs Importance . . . . . . . . 121

D.12 Sensitivity of Total Cost Plus Loss due to Load . . . . . . . . . . . . . . . 122

D.13 Sensitivity of cost and loss due to load . . . . . . . . . . . . . . . . . . . . 123

D.14 Response time for various designs . . . . . . . . . . . . . . . . . . . . . . 124

D.15 Cost Dimension Comparison . . . . . . . . . . . . . . . . . . . . . . . . . 125

D.16 Cost and BIM Dimensions Comparison . . . . . . . . . . . . . . . . . . . 126

D.17 Inuence of Surge Duration on Optimal Design . . . . . . . . . . . . . . . 127

D.18 Inuence of Surge Height on Optimal Design . . . . . . . . . . . . . . . . 128

E.1 Conventional SLA Negotiation Approach . . . . . . . . . . . . . . . . . . 139

E.2 Business-Driven SLA Negotiation Approach . . . . . . . . . . . . . . . . . 145

E.3 Service Provider Cost Comparison . . . . . . . . . . . . . . . . . . . . . . 152

E.4 Service Provider Cost and Prot Comparison . . . . . . . . . . . . . . . . 152

E.5 Client Loss, Cost and Prot Comparison (Business-driven Approach) . . . 153

Lista de Tabelas

2.1 Denio formal do problema de projetar infra-estruturas de TI. . . . . . . 13

2.2 Denio formal do problema de projetar SLAs. . . . . . . . . . . . . . . . 13

A.1 Notational summary for problem denition . . . . . . . . . . . . . . . . . 47

A.2 Formal denition of design problem . . . . . . . . . . . . . . . . . . . . . 47

A.3 Notational summary for problem denition . . . . . . . . . . . . . . . . . 48

A.4 Notational summary for problem denition . . . . . . . . . . . . . . . . . 50

A.5 Transition probabilities in NRG CBMG . . . . . . . . . . . . . . . . . . . 53

A.6 Average number of visits to each state . . . . . . . . . . . . . . . . . . . . 56

A.7 Parameters for example site . . . . . . . . . . . . . . . . . . . . . . . . . . 57

A.8 Service demand in milliseconds in all tiers . . . . . . . . . . . . . . . . . . 57

A.9 Comparing infrastructure designs . . . . . . . . . . . . . . . . . . . . . . . 59

B.1 Cost-Oriented Capacity Planning Problem Formalization . . . . . . . . . . 69

B.2 Business-Oriented Capacity Planning Problem Formalization . . . . . . . . 70

B.3 Example scenario parameters . . . . . . . . . . . . . . . . . . . . . . . . . 71

C.1 Parameters for example . . . . . . . . . . . . . . . . . . . . . . . . . . . . 86

C.2 Comparing designs . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 89

D.1 Notational summary for main optimization problem . . . . . . . . . . . . . 99

D.2 Notational summary for infrastructure cost . . . . . . . . . . . . . . . . . . 100

D.3 Notational summary for business impact model . . . . . . . . . . . . . . . 102

D.4 Notational summary for availability model . . . . . . . . . . . . . . . . . . 104

D.5 Notational summary for response time analysis . . . . . . . . . . . . . . . 106

D.6 Transition probabilities in NRG CBMG . . . . . . . . . . . . . . . . . . . 114

x

LISTA DE TABELAS xi

D.7 Average number of visits to each state . . . . . . . . . . . . . . . . . . . . 115

D.8 Parameters for examples . . . . . . . . . . . . . . . . . . . . . . . . . . . 116

D.9 Service demand in milliseconds in all tiers . . . . . . . . . . . . . . . . . . 116

D.10 Comparing infrastructure designs . . . . . . . . . . . . . . . . . . . . . . . 119

E.1 Design Problem Associated with Conventional SLA Negotiation . . . . . . 144

E.2 Design Problem Associated with Business-Driven SLA Negotiation . . . . 148

E.3 Average Number of visits to each CBMG state during a customer session . 149

E.4 Parameters for example scenario . . . . . . . . . . . . . . . . . . . . . . . 150

E.5 Resource demand in milliseconds . . . . . . . . . . . . . . . . . . . . . . 150

Captulo 1

Introduo

Uma vez que a Tecnologia da Informao (TI) tem fundamental importncia no apoio s

operaes de negcio, as empresas esto cada vez mais dependentes de sua infra-estrutura e

seus servios de TI. Concomitantemente, com o passar do tempo, a TI teve que evoluir de

forma a tornar-se capaz de atender s novas regras do mercado. Neste sentido, as metodolo-

gias de gerncia de TI tambm tiveram que evoluir de forma a acompanhar a TI. Ao longo

desse processo, a gerncia de TI passou por diversos nveis de maturidade, iniciando com

a gerncia de infra-estrutura, Information Technology Infrastructure Management

[

Lew99a

]

(ITIM) em ingls, que constituda de vrios sub-nveis de escopo: gerncia de dispositivos,

de redes de computadores, de sistemas, de aplicaes e, nalmente, na gerncia integrada

abrangendo todos estes nveis. Em seguida a gerncia de TI evoluiu para a gerncia de

servios de TI, Information Technology Service Management

[

BKP02

]

(ITSM) em ingls,

medida que a TI propriamente dita alcanava o nvel de proporcionar vantagem competitiva.

Note que, enquanto ITIM objetiva a gerncia dos componentes de TI, ITSM busca a gerncia

dos servios de TI. Neste sentido houve diversas mudanas. Como exemplos, podemos citar

a mudana do foco dos componentes de TI para os clientes dos servios constituindo sua

principal mudana , a existncia de um catlogo descrevendo os servios de TI oferecidos,

a promessa de nveis de qualidade em torno de tais servios, entre outros. Um dos principais

objetivos da gerncia de servios de TI prover e suportar servios que atendam s neces-

sidades dos clientes destes. Para tal, ITSM composto de diversos processos, tais como:

gerncia de mudanas, gerncia de segurana, gerncia de nveis de servio, gerncia de

incidentes, gerncia de congurao, entre outros. Cada um desses processos engloba uma

1

2

ou mais atividades do departamento de TI ou do provedor de servios de TI, quando a TI

terceirizada. Neste momento, interessante denir bem os termos cliente e usurio acima

referidos e que sero amplamente usados ao longo desta dissertao. Cliente a entidade que

recebe, do departamento de TI, ou contrata, perante um provedor, servios de TI. J usurio

a pessoa que usar os servios de TI disponibilizados.

Dado que a infra-estrutura de TI um componente indispensvel no contexto corpora-

tivo atual, mant-la funcionando e atendendo s necessidades do negcio propriamente dito

crucial. Para tal, faz-se necessrio entender tais necessidades e relacion-las com a TI,

de forma que o negcio seja suportado por servios de TI. Tais servios esto, por sua vez,

apoiados em uma infra-estrutura que fornece qualidade apropriada. Contudo, tal tarefa no

trivialmente resolvida. Primeiramente, bastante comum centrar-se na ecincia da TI

propriamente dita

[

TT05b

]

, o que pode resultar em uma infra-estrutura de TI pouco ecaz

em relao ao negcio. Para melhor entendimento desse fato, faz-se necessrio conhecer um

pouco mais sobre a gerncia de servios de TI, o que apresentada na subseo seguinte.

Em segundo lugar, a prpria atividade de projeto de infra-estruturas de TI (Capacity Plan-

ning, em Ingls) uma atividade bastante complexa. Projetar uma infra-estrutura de TI para

atender a determinados requisitos de performance envolve a congurao de hardware e soft-

ware em diversas camadas (por exemplo, camada Web, camada de aplicao e camada de

dados), denio do nmero de servidores em modo ativo e em modo standby para prover

valores adequados de tempo de resposta e disponibilidade, respectivamente , caching, con-

sideraes sobre escala e carga submetida durante perodos normais de demanda, bem como

durante perodos com pico de demanda.

1.0.1 Gerncia de Servios de TI

Como relatado previamente, uma das principais contribuies trazidas pela gerncia de

servios de TI foi a mudana de foco gerencial dos recursos de TI para o cliente propria-

mente dito, pois esta no est mais focada apenas nos componentes de TI em si. Todavia,

as mtricas adotadas continuam sendo tcnicas, a exemplo de: disponibilidade, tempo de

resposta e vazo. Um problema da adoo de mtricas tcnicas est relacionado dicul-

dade de correlacion-las com objetivos de negcio, o que fundamental para que se projete

uma infra-estrutura com foco em sua eccia em relao aos objetivos de negcio; qual a

3

disponibilidade, ou tempo de resposta, que uma determinada infra-estrutura de TI deve ofer-

ecer para um dado servio? Qual a relao existente entre 99.99% de disponibilidade, ou

1,5 segundos de tempo de resposta, com um determinado objetivo de negcio? Alm de no

exprimirem claramente os objetivos de negcio, mtricas tcnicas dicultam a comunicao

entre o departamento de TI e o resto da empresa, a qual no est familiarizada com um jargo

tcnico, mas sim com um jargo de negcios.

A maneira padro atual de relacionar as necessidades do negcio e da TI atravs

de contratos conhecidos como Acordos de Nvel de Servio ou Service Level Agreements

(SLAs)

[

BTdZ99; LR99; SM00; Lew99a

]

, em ingls. A elaborao de SLAs fornece, entre

outras coisas, um consenso sobre os valores de nveis de servios requeridos pelo cliente e

os nveis de servios oferecidos pelo departamento de TI ou pelo provedor de servios de

TI, quando a TI terceirizada. Um SLA aplicvel tanto para especicao de servios

intra-empresa quanto para especicao inter-empresas, isto , envolvendo servios ter-

ceirizados. Tais valores de nveis de servios, quando expressos em um SLA, tomam a

forma de Service Level Objectives (SLOs), em ingls, e so limiares aplicados a mtricas

de desempenho, conhecidas como Service Level Indicators (SLIs) em ingls. "Disponibil-

idade"e "tempo mdio de resposta do servio"so exemplos de SLIs utilizados num SLA;

enquanto que, os valores 99.99% de disponibilidade ou 1,5 segundos de tempo de resposta

so exemplos de SLOs. Ainda, diversos outros parmetros podem ser denidos em um

SLA, tais como penalidades a serem pagas pelo provedor do servio quando este fal-

har em cumprir o nvel de servio prometido ; recompensas a serem pagas pelo cliente

do servio quando o provedor do servio superar as expectativas do nvel de servio acor-

dado ; perodo de vigncia do contrato e perodo de avaliao dos SLOs. Neste contexto,

SLAs so componentes de fundamental importncia para a gerncia de nveis de servios,

Service Level Management (SLM), conforme pode ser constatado nas seguintes metodolo-

gias: IT Infrastructure Library (ITIL)

[

Ele03; BKP02

]

, IT Service Management (ITSM) da

Hewlett Packard (HP)

[

HP

]

, Microsoft Operations Framework (MOF)

[

Mic

]

da Microsoft e

Control Objectives for Information and related Technology (COBIT)

[

Ins01

]

. Estas quatro

abordagens podem ser vistas como colees de boas prticas e recomendaes que abor-

dam diversos aspectos da gerncia de TI, em particular da gerncia de servios. Alm

da especicao de nveis de servio, SLM tambm aborda a negociao, implementao,

4

monitorao e otimizao dos servios de TI a serem oferecidos

[

BTdZ99; LR99; SM00;

Lew99a

]

. Neste sentido, SLM uma parte crucial da gerncia de servios de TI

[

BKP02

]

.

Com relao adoo de SLAs, vale a pena salientar que apesar de serem comumente ado-

tados para expressar as necessidades do negcio com relao aos servios de TI, a escolha

de seus parmetros (tcnicos), em especial SLOs, tambm no uma atividade trivial. Alm

disso, tal escolha tem sido feita de maneira ad-hoc, sem uma metodologia apropriada, re-

sultando em acordos difceis de serem cumpridos e que podem no atender s expectativas

do negcio, e, por conseqncia gerar possveis grandes perdas no negcio, como se con-

stata na referncia

[

TT05b

]

. Esta referncia consiste basicamente de um estudo com grandes

empresas americanas e europias que demonstrou uma enorme decincia na escolha dos

parmetros de SLAs. Dessa forma, tal estudo veio a reforar, baseado em dados de contratos

reais, a necessidade de mtodos e modelos mais maduros que resultem em SLAs adequada-

mente negociados.

1.0.2 BDIM: uma extenso Gerncia de Servios de TI

Recentemente, uma nova rea da gerncia de TI, chamada de Business-Driven Impact Man-

agement (BDIM)

[

Dub02; SMS

+

04; Pat02; Mas02; DCR04

]

estende a gerncia de servios

atravs da adoo de mtricas de negcio em adio s mtricas tcnicas convencionais.

Como apresentado na referncia

[

SMS

+

05

]

, uma mtrica de negcio pode ser vista como

uma quanticao de um atributo de um processo de negcio (business process (BP), em in-

gls) ou de uma unidade de negcio. Lucro, faturamento, nmero de funcionrios ou de BPs

afetados por um determinado evento ocorrendo na infra-estrutura e perda de vazo de BP so

exemplos de mtricas de negcios. Um BP representa uma seqncia de tarefas que deve ser

executada para a realizao de algum evento de negcio que resulte em agregao de valor

para a empresa ou para o cliente. Por exemplo, um processo de venda que constitui um

dos objetivos do negcio envolve diversas atividades, geralmente apoiadas por TI ou seus

servios, tais como vericar crdito do comprador, compra de matria-prima, disparar pro-

cesso de fabricao e realizar a entrega dos produtos. Nenhuma destas atividades (tarefas)

de forma isolada permite que realizemos o processo de venda precisamos de uma viso

"integrada"da TI para tal. Neste sentido, um processo de negcio provavelmente, mas no

necessariamente, est apoiado por TI para prover maior ecincia.

5

Contudo, como ser visto a seguir, BDIM vai alm da proposta de utilizao de uma

nova modalidade de mtricas para gerir a TI. O principal objetivo de BDIM melhorar e

ajudar o negcio propriamente dito

[

SMS

+

06

]

. Tal objetivo alcanado na medida em que

BDIM visa propr um conjunto de ferramentas, modelos e tcnicas que permitem mapear

eventos ocorridos na TI com o impacto quantitativo destes nos resultados do negcio. A

quanticao de tal impacto pode servir como fonte de informao de forma a propiciar a

tomada de decises que melhorem os resultados do negcio em si e tambm da TI, esta l-

tima como elemento de suporte s operaes de negcio isto a essncia de BDIM. Neste

sentido BDIM busca capturar o impacto negativo nos negcios, ou simplesmente perda, cau-

sado pelas falhas inerentes infra-estrutura de TI subjacente, assim como por degradaes

de desempenho dos servios de TI. Os Apndices A, B, C, D e E discutem BDIM mais pro-

fundamente. Tais apndices representam os resultados obtidos com o trabalho desenvolvido

ao longo do perodo do presente mestrado, resultando nesta dissertao. Tais resultados, por

sua vez, foram publicados como artigos cientcos em conferncias internacionais.

Neste momento interessante entender quo srio o problema de no considerar a per-

spectiva de negcio no projeto de infra-estruturas de TI ou de SLAs. A seguir, encontra-se

ilustrado tal fato com alguns nmeros obtidos dos Apndices A, B, C, D e E. Com isso,

objetiva-se antecipar alguns resultados gerais de forma a motivar o presente trabalho e ex-

plicitar a importncia do tema em questo. Como mostrado no apndice C, o custo de se

escolher erroneamente uma congurao de infra-estrutura de TI, ou valores inapropriados

de SLOs, pode ser bastante alto. Por exemplo, no cenrio descrito no referido apndice,

gasta-se desnecessariamente entre cerca de, 5830% (US$ 1.700.000,00, em valor absoluto) e

105% (US$30.500,00 em termos absolutos), dependendo da infra-estrutura escolhida. Esse

mesmo cenrio exemplo mostra que projetar infra-estruturas TI realizando overdesign tam-

bm produz possveis grandes perdas; aproximadamente 31% (US$ 9.000 percentualmente)

no referido cenrio. Tambm, projetar infra-estruturas capazes de lidar com picos de de-

manda (vide Apndice B), sem o devido entendimento da necessidade do negcio, pode ter

um custo bastante elevado; em mdia, o custo total (custo da infra-estrutura de TI mais perda

nanceira) 67% maior que a abordagem orientada aos negcios. Tal diferena pode chegar

a ser superior a 100% em alguns picos de demanda. Percebe-se, baseado nos exemplos

acima, que a perda potencial ao se desconsiderar a perspectiva dos negcios pode ser alta, e,

1.1 Objetivos 6

portanto, nos motiva a elaborar solues que levem em conta tal perspectiva.

A nova perspectiva aberta por BDIM permite reconsiderar as atividades de projeto de

infra-estruturas de TI e de SLAs atravs da considerao de mtricas de negcio. No to-

cante ao projeto de infra-estruturas de TI, tem-se o seguinte argumento informal: proje-

tar uma infra-estrutura de melhor qualidade, portanto mais complexa, implica em um alto

custo associado e em uma pequena possvel perda nanceira por impacto de falhas de TI ou

degradaes de performance. Por outro lado, projetar uma infra-estrutura de baixa qualidade

resulta em um baixo custo associado e em um provvel maior impacto negativo nos negcios,

originado a partir de falhas de TI ou degradaes de desempenho. Tem-se claramente um

tradeoff. Achar o ponto timo deste tradeoff signica achar a infra-estrutura tima segundo

a perspectiva dos negcios; pode-se, por exemplo, obter a infra-estrutura de TI com o menor

valor para perda ou para a soma da perda nanceira com o custo da infra-estrutura.

1.1 Objetivos

O objetivo geral deste trabalho apresentar uma investigao formal de tcnicas para (i)

projetar infra-estruturas de TI e (ii) escolher parmetros de SLAs segundo uma perspectiva

de negcio, de forma a otimizar mtricas de negcios. Como exemplo de tais mtricas,

pode-se citar: custo de provisionamento de infra-estrutura ou perda, oriunda da degradao

de performance nos servios especicados num SLA ou devido s falhas na infra-estrutura

de TI subjacente. A supracitada perspectiva de negcio capturada por meios de uma viso

BDIM permitir ponderar os custos relativos obteno de servios face s perdas oriundas

do impacto negativo das falhas da infra-estrutura nos negcios tradeoff acima apresentado.

Neste trabalho, considera-se apenas a infra-estrutura relacionada ao server farm, no

sendo considerados elementos como a rede ou os computadores pessoais dos usurios que

acessam os servios suportados pela infra-estrutura de TI a considerao de tais elementos

constitui um possvel trabalho futuro. Com relao ao projeto de infra-estruturas de TI,

apesar de estarem sendo consideradas infra-estruturas estticas, tambm so apresentados

estudos sobre o efeito de picos de demanda no projeto da infra-estrutura tima e uma breve

discusso sobre o uso de BDIM com provisionamento dinmico. No tocante escolha de

parmetros de SLA, foca-se na escolha de SLOs e aborda-se tanto servios que envolvem

1.2 Organizao da Dissertao 7

consumidor e provedor no mesmo domnio administrativo, quanto servios terceirizados.

De forma mais especca objetiva-se adicionalmente apresentar um modelo analtico us-

ado para representar uma congurao tpica da infra-estrutura de TI necessria para suportar

determinada qualidade de servio.

1.2 Organizao da Dissertao

Considerando a existncia de vrios artigos cientcos relatando a evoluo do trabalho em

questo, e que os temas neles discutidos constituem-se alvo de maior comparao com tra-

balhos da comunidade internacional do que da comunidade nacional, esta dissertao apre-

senta uma estrutura bastante enxuta em portugus e faz freqentes referncias aos apndices

que representam as contribuies trazidas gerncia de TI, especicamente s atividades de

projeto de infra-estruturas de TI e de Acordos de Nveis de Servio de TI, e que resultaram

nesta dissertao. Vale salientar que tais apndices esto escritos em ingls, pois represen-

tam artigos cientcos, que guram entre os trabalhos pioneiros de BDIM, publicados ou

submetidos a algum veculo de circulao internacional.

O restante da dissertao est organizado como descrito a seguir. No Captulo 2

descrever-se-, em linhas gerais, a soluo proposta pelo presente trabalho aos problemas

acima apresentados. Um guia objetivando facilitar a leitura dos apndices da dissertao

ser apresentado no Captulo 3. Tal guia apresentar um resumo e os principais resultados

de cada artigo cientco. O Captulo 4 discorer sobre aspectos relacionados validao do

trabalho desenvolvido, principalmente no que diz respeito validade e acurcia dos modelos

analticos propostos. Por ltimo, o Captulo 5 sumarizar a abordagem proposta, relatar

as concluses obtidas e discutir idias de possveis prximos passos para o trabalho em

questo.

Captulo 2

Projetando Infra-estruturas de TI e

Acordos de Nveis de Servios

Este captulo descreve rapidamente a soluo apresentada por esta dissertao para os prob-

lemas relacionados com as atividades de projeto de infra-estruturas de TI e de SLAs que

foram apresentados no captulo anterior. Para detalhes especcos sobre cada soluo, vide

os Apndices A, B, C, D e E.

Desconsiderar a perspectiva dos negcios quando projetando infra-estruturas de TI para

atender s necessidades do negcio pode gerar grande perda nanceira. Primeiramente,

preciso entender tais necessidades e relacion-las com a TI, para que seja possvel projetar

infra-estruturas de TI, ou especicar SLAs, que ofeream uma qualidade de servio apropri-

ada. Neste sentido, fundamental a adio da viso de negcio nestas atividades. Tal viso

de negcio signica entender qual a relao existente entre a TI e o negcio, ou seja, capturar

qual o impacto no negcio caso determinado evento na infra-estrutura de TI ocorra.

Este trabalho est baseado no argumento informal de que projetar uma infra-estrutura

de melhor qualidade, portanto mais complexa, implica em um maior custo associado e em

uma possivelmente menor perda nanceira por impacto de falhas de TI ou degradaes de

performance. Por outro lado, projetar uma infra-estrutura de menor qualidade resulta em um

menor custo associado e em um possvel maior impacto negativo nos negcios, originado a

partir de falhas de TI ou degradaes de desempenho. Observa-se claramente um tradeoff.

A abordagem proposta por este trabalho, e adotada nos Apndices A, B, C, D e E, consiste

em achar o ponto timo desse tradeoff, atravs de uma otimizao. Este ponto timo repre-

8

9

senta a infra-estrutura tima de TI segundo a perspectiva de negcio, e, portanto de acordo

com os objetivos gerais de negcio. Note que a abordagem aqui apresentada no captura os

requisitos especcos dos objetivos de negcio, mas apenas requisitos gerais no sentido de

minimizar o impacto nanceiro nos negcios. Aps obter a soluo tima, caso se deseje

especicar um SLA, pode-se calcular os valores dos SLOs a partir da infra-estrutura obtida.

Neste sentido, esta abordagem para especicao de SLAs substancialmente diferente das

abordagens atuais, onde tais valores so escolhidos sem um perfeito entendimento das ne-

cessidades do negcio. Alm disso, nas abordagens atuais os SLOs so escolhidos a priori,

isto , primeiro se dene os valores dos SLOs e depois projeta-se uma infra-estrutura de TI

que os suporte. Tem-se o processo inverso na abordagem proposta: primeiro se dene uma

infra-estrutura de TI adequada s necessidades do negcio para ento calcular os SLOs re-

sultantes. O que doravante chamar-se- de uma escolha a posteriori dos SLOs. A Figura 2.1

ilustra a abordagem proposta.

Figura 2.1: Esboo da Soluo Proposta

Para capturar o impacto negativo nos negcios oriundo de falhas associadas infra-

estrutura de TI, assim como de degradaes de desempenho dos servios de TI, preciso

criar uma relao de causa-efeito entre a TI e o negcio propriamente dito. No contexto

BDIM, tal relao causa-efeito obtida atravs de um modelo, chamado de modelo de im-

10

pacto. Logo, a tarefa desempenhada por tal modelo no trivial, sendo equivalente a rela-

cionar mtricas tcnicas em mtricas de negcio de forma a obter uma perspectiva de neg-

cio. Neste sentido, com o objetivo de estabelecer a referida relao causa-efeito, adota-se

um modelo em trs camadas (vide Figura 2.1), composto das seguintes camadas: de TI, de

processos de negcios e de negcios. Neste modelo a camada de processos de negcio (BPs)

tem um papel fundamental no cruzamento de mtricas entre a camada de TI e a camada de

negcios. A camada de BPs responsvel pelo mapeamento entre mtricas tcnicas tais

como disponibilidade e tempo mdio de resposta em mtricas de BP, tal como vazo de

transaes de negcio. Estas mtricas tcnicas so obtidas a partir da camada de TI. Na

seqncia, a camada de negcios, atravs de um submodelo chamado de modelo de receita,

relaciona mtricas de BP a mtricas de negcio, tal como faturamento perdido.

O modelo de impacto proposto e utilizado neste trabalho considera que a perda nan-

ceira, L(T), durante o intervalo de tempo T, tem duas fontes: indisponibilidade dos

servios de TI e desistncia de compra por parte dos consumidores nais, isto , aqueles que

acessam o BP suportado pelos servios de TI, a m de realizarem suas compras (comrcio

eletrnico ou e-commerce, em ingls). No modelo utilizado aqui, um consumidor nal de-

siste de comprar sempre que experimentar um tempo de resposta maior que um determinado

limiar, T

DEF

; o valor de 8 segundos comumente referido na literatura como um valor apro-

priado para T

DEF

[

MAD04

]

. Portanto, para capturar o impacto negativo, preciso calcular

a disponibilidade dos servios de TI, o que feito utilizando teoria da conabilidade con-

vencional

[

Tri82

]

, e obter a probabilidade, B(T

DEF

), de que o tempo de resposta seja maior

que o dado limiar. O clculo destes dois valores acima mencionados permite a derivao

do valor da perda nanceira, L(T), durante o intervalo de tempo T. Note que, alm de

obter L(T), preciso tambm obter C(T), o custo da infra-estrutura de TI durante o

perodo T, para que a abordagem proposta, minimizar o valor da soma da perda nanceira

com o custo da infra-estrutura, seja efetivamente aplicada. Observe que, o modelo espec-

co de impacto adotado neste trabalho depende essencialmente de BPs para estabelecer a

relao de causa-efeito entre a TI e o negcio propriamente dito. Este fato implica que tal

modelo em particular aplicvel apenas em um contexto no qual h uma forte, ou total,

dependncia na TI. Porm, este fato no constitui uma restrio do presente trabalho, pois a

abordagem proposta perfeitamente geral, de forma a permitir a utilizao de outros mod-

2.1 Abstrao da Infra-estrutura de TI 11

elos de impacto, aplicveis em cenrios diferentes do considerado. Como exemplo tpico

de cenrio que atende claramente ao requisito de forte dependncia na TI pode-se citar o

comrcio eletrnico (e-commerce). Tal cenrio ser usado na exposio das idias trazidas

pela abordagem proposta pela presente dissertao.

A seguir apresentado o modelo formal bsico de infra-estrutura que permite a obteno

das duas mtricas de interesse no processo de otimizao: custo da infra-estrutura de TI e

impacto negativo nos negcios (perda). Para derivar o impacto negativo necessrio obter

a disponibilidade dos servios de TI e a desistncia de compra por parte dos consumidores

nais. Comentrios gerais sobre a obteno de todas essas mtricas so feitos a partir do

prximo pargrafo. Vale salientar que diversas extenses foram acrescentadas a esse modelo

bsico. Tais extenses, trazidas nos artigos cientcos constantes nos apndices A, B, C, D

e E, sero explicitamente apresentadas no prximo captulo desta dissertao. No captulo

seguinte apresentar-se- de maneira formal e detalhada cada um destes artigos cientcos.

2.1 Abstrao da Infra-estrutura de TI

Conforme mostrado na Figura 2.2, considera-se que cada BP est apoiado em apenas um

servio de TI (IT service). Ainda, considera-se o caso de apenas um servio de TI. Apesar

da generalizao destas duas simplicaes ser uma tarefa relativamente fcil, complicaria

de forma desnecessria a apresentao do modelo. Um dado servio S baseia-se em um

conjunto RC de classes de recursos de TI (IT resource classes); tome um conjunto de servi-

dores de banco de dados ou de servidores de aplicao como exemplos. Uma determinada

classe de recursos, RC

j

, composta de um cluster de n

j

recursos de TI (IT resources) idn-

ticos. Como exemplos de recursos de TI, pode-se citar um servidor de banco de dados ou um

servidor web. Do total de servidores de RC

j

, m

j

recursos de TI esto congurados em modo

ativo, ou de balanceamento de carga, para lidar com a carga recebida oferecendo tempo de

resposta aceitvel, e n

j

m

j

recursos de TI esto congurados em modo standby (fail-over),

oferecendo uma melhor disponibilidade ao servio S.

Alm disso, um dado recurso de TI R

j

, pertencente classe de recursos RC

j

, formado

de um conjunto P

j

= {P

j,1

, . . . , P

j,k

, . . .} de componentes de TI (IT components) tome

hardware do servidor e sistema operacional como exemplos de componentes de TI que po-

2.2 Sobre os problemas de projeto de infra-estruturas e SLAs 12

dem fazer parte de R

j

. Caso um ou mais componente de TI falhem, o respectivo recurso

de TI tambm falha. O formalismo matemtico apresentado corresponde ao modelo formal

bsico para representar uma infra-estrutura de TI. Apesar de haver diversas extenses a este

modelo de infra-estrutura de TI, estes no so apresentadas aqui (vide apndices A, B, C, D

e E. O objetivo fornecer apenas o necessrio para um entendimento um pouco mais formal

da abordagem proposta.

Figura 2.2: Principais Entidades do Modelo

2.2 Sobre os problemas de projeto de infra-estruturas e

SLAs

Como j foi visto, projetar infra-estruturas de TI no uma atividade trivial. O problema de

projetar infra-estruturas de TI pode ser denido como mostrado na Tabela 2.1.

2.3 Sobre o modelo de custo da infra-estrutura de TI 13

Tabela 2.1: Denio formal do problema de projetar infra-estruturas de TI.

Achar: O nmero total de recursos, n

j

, e o nmero de recursos em

balanceamento de carga, m

j

, para cada classe de recursos RC

j

.

Minimizando: C(T) + L((T), o impacto naceiro nos negcios durante

o perodo T.

Sujeito a: n

j

m

j

e m

j

1.

Considerando que na presente abordagem primeiro se dene uma infra-estrutura de TI

adequada s necessidades do negcio para ento calcular os SLOs resultantes, pode-se es-

pecicar o problema de projetar SLAs conforme mostrado na Tabela 2.2.

Tabela 2.2: Denio formal do problema de projetar SLAs.

Achar: Os parmetros do SLA em especicao: A

MIN

, T e B

MAX

.

Minimizando: C(T) + L((T), o impacto naceiro nos negcios durante

o perodo T.

Variando: O nmero total de recursos, n

j

, e o nmero de recursos em

balanceamento de carga, m

j

, para cada classe de recursos RC

j

.

Sujeito a: n

j

m

j

e m

j

1.

Onde: A

MIN

o valor mnimo aceitvel para a disponibilidade do servio S;

T o valor mximo aceitvel para o tempo mdio de resposta do

servio S;

B

MAX

o valor mximo aceitvel para a probabilidade de desistncia.

2.3 Sobre o modelo de custo da infra-estrutura de TI

O custo da infra-estrutura, C(T), durante o perodo T, simplesmente a soma dos cus-

tos de todos os recursos de TI. Uma vez que os recursos de TI podem estar congurados

em modo ativo (balanceamento de carga) ou em modo standby (fail-over), eles devem ter

custos diferentes quando congurados em modos diferentes

[

JST03

]

. Recursos congurados

no modo ativo tm maiores custos devido a gastos com energia eltrica, licenas de soft-

ware, etc. Considerando que recursos de TI so compostos de componentes de TI, possvel

2.4 Sobre o modelo particular de impacto negativo 14

expressar suas taxas de custo em termos das taxas de custo dos componentes de TI que o

compem; c

active

j,k

e c

standby

j,k

representam as taxas de custo do componente P

j,k

quando con-

gurado nos modos ativo e em espera respectivamente. Tais valores de custo so expressos

em taxa de custo e representam custo por unidade de tempo, o que possibilita o clculo do

custo da infra-estrutura de TI para um determinado perodo. Para obter detalhes de como

calcular C(T), o custo da infra-estrutura consulte os Apndices A, B, C, D e E.

2.4 Sobre o modelo particular de impacto negativo

Como denido anteriormente, para que seja possvel aplicar o mtodo proposto, alm do

valor de o custo da infra-estrutura de TI preciso tambm estimar o valor do impacto neg-

ativo nos negcios, L(T), durante o perodo T. Dado que transaes por segundo

chegam ao BP BP gerador de faturamento e que est suportado por S, caso a infra-estrutura

de TI subjacente fosse perfeita, todas as transaes de entrada seriam transformadas em

faturamento. Um BP dito gerador de faturamento caso produza renda a cada transao

nalizada com sucesso. O faturamento mdio por transao do BP . No entanto, as im-

perfeies da TI leia-se indisponibilidade e desistncia dos consumidores nais reduzem

a vazo de BP para X transaes por segundo existindo, portanto uma perda na vazo de

BP de X transaes por segundo. A vazo de um BP representa a sua taxa de transaes

nalizadas com sucesso. Logo, o impacto negativo nos negcios L(T) = T X,

mas X = X

T

+ X

A

, onde X

T

= B(T

DEF

) A o impacto negativo

atribudo s desistncias por parte dos consumidores nais e X

A

= (1 A)

o impacto negativo atribudo indisponibilidade do servio S. Dessa forma, L(T) =

T (X

T

+X

A

) = T (B(T

DEF

) A+1A)). A seguir comentrios

sobre os modelos de disponibilidade e de performance que propiciam, respectivamente, o

clculo da disponibilidade do servio de TI e da probabilidade de desistncia de compra por

parte dos consumidores nais.

2.5 Sobre o modelo de disponibilidade do servio de TI 15

2.5 Sobre o modelo de disponibilidade do servio de TI

Para calcular a disponibilidade, A, do servio S preciso ter em mente que, devido imper-

feio inerente da infra-estrutura subjacente, cujos componentes falham, o servio S torna-se

indisponvel. A notao A uma referncia palavra availability que signica dispobilidade

em ingles. Lanando-se mo da teoria da conabilidade, a disponibilidade individual dos

componentes achada atravs de seus valores de Tempo Mdio entre Falhas (Mean Time

Between Failures MTBF, em ingls) e de Tempo Mdio para Reparo (Mean Time to Repair

MTTR, em ingls). Os valores de MTBF podem ser obtidos a partir de especicaes

de componentes ou por meio de registros (logs). Por outro lado, Os valores de MTTR de-

pendem do tipo de contrato de servio de manuteno desses componentes. Na seqncia,

partindo-se da disponibilidade individual dos componentes de TI e adotando uma abordagem

bottom-up obtm-se a disponibilidade Ado servio de TI. Para tal, sempre se valendo da teo-

ria da conabilidade e do modelo de infra-estrutura adotado, calcula-se a disponibilidade de

um recurso de TI, digamos A

R

j

. A partir dos valores de disponibilidade dos recursos de TI

deriva-se a disponibilidade das classes de recursos. Por m, tendo as disponibilidades das

classes de recursos, determina-se A. os detalhes especcos de como obter cada uma dessas

disponibilidades so apresentados nos apndices A, B, C, D e E.

2.6 Sobre a probabilidade de desistncia

Por m, resta mostrar como derivar B(T

DEF

), a probabilidade de que o tempo de

resposta seja maior que o dado limiar. Para calcular B(T

DEF

) preciso achar

ResponseTimeDistribution(x), a distribuio cumulativa de probabilidade do tempo de

resposta. Para tal m, preciso modelar a carga aplicada aos recursos de TI, os proces-

sos de chegada e de atendimento de transaes, etc. Tal modelo baseado em um modelo

aberto de las

[

Kle76a

]

. Modelos abertos de las so adequados quando h um nmero

grande de potenciais clientes, situao comum no comrcio eletrnico. No trabalho aqui

desenvolvido, assume-se que o processo de chegada caracterizado por uma distribuio de

Poisson, o que de fato uma boa aproximao para curtos espaos de tempo

[

MAR

+

03c

]

e que o tempo de servio caracterizado por uma distribuio exponencial. Uma vez que

2.6 Sobre a probabilidade de desistncia 16

uma dada transao vai usar recursos de possivelmente todas as classes de recursos, o seu

tempo de resposta a soma de |RC| variveis aleatrias cada classe de recursos acessada

tem sua prpria distribuio , o que pode ser resolvido atravs do produto de suas trans-

formadas de Laplace. Vale a pena salientar que, caso os efeitos de cache estejam sendo

considerados, o nmero de classes de recursos acessadas uma varivel aleatria. Portanto,

o tempo de resposta de uma transao no obtido atravs da simples soma |RC| variveis

aleatrias. Este caso um pouco mais delicado e tratado no apndice D. Obtida a dis-

tribuio ResponseTimeDistribution(x), a probabilidade de desistncia do consumidor

nal do servio B(T

DEF

)) = 1 ResponseTimeDistribution(T

DEF

). O clculo de

B(T

DEF

) apresentado detalhadamente nos apndices A, B, C, D e E.

Captulo 3

Resultados da Pesquisa

Este captulo apresenta um guia para facilitar a leitura dos apndices da dissertao. Cada

apndice constitui um artigo cientco publicado ou submetido a algum veculo de publi-

cao internacional. Sendo assim, estes artigos esto escritos em Ingls e destacam-se entre

os artigos pioneiros de BDIM. Em alguns destes artigos o autor desta dissertao atuou como

primeiro autor, enquanto que, nos demais artigos, apesar de no ser o primeiro autor, teve sig-

nicativa participao na elaborao destes. A seguir apresenta-se um resumo, destacando

as contribuies de cada um dos estudos realizados nos artigos cientcos. Alm disso, a par-

ticipao do autor desta dissertao na elaborao de cada um dos artigos explicitada. Tais

artigos representam as contribuies trazidas gerncia de TI, especicamente s atividades

de projeto de infra-estruturas de TI e de Acordos de Nveis de Servio, que resultaram nesta

dissertao. Note que, com o intuito de facilitar o entendimento do trabalho, os apndices

no so apresentados na mesma ordem com que foram publicados ou submetidos.

3.1 Projeto timo de Infra-Estruturas de TI pela Perspec-

tiva do Negcio

Dois artigos abordam o projeto timo de infra-estruturas de TI pela perspectiva do negcio.

O primeiro deles (apndice A) corresponde ao artigo cientco publicado no veculo interna-

cional 39

o

Hawaii International Conference on System Science (HICSS 2006). O segundo

(apndice D) diz respeito ao artigo cientco submetido ao peridico Performance Evalua-

17

3.1 Projeto timo de Infra-Estruturas de TI pela Perspectiva do Negcio 18

tion. A seguir discorre-se sobre cada um deles. O primeiro artigo, intitulado de "Optimal

Design of e-Commerce Site Infrastructure from a Business Perspective", discute um mtodo

formal para projetar infra-estruturas de TI segundo a perspectiva do negcio. Enquanto a

maioria dos estudos atuais trata de metodologias que se restringem a trs mtricas tempo

de resposta, disponibilidade e custo , o modelo formal proposto repensa tal atividade atravs

da adoo de uma quarta mtrica, o impacto negativo nos negcios. O modelo apresentado

neste artigo traz um diferencial em relao ao modelo bsico descrito no captulo anterior:

a carga aplicada aos recursos de TI modelada atravs de um modelo conhecido por Cus-

tomer Behavior Model Graph (CBMG)

[

MAD04

]

. CBMG permite representar a carga que

uma dada sesso iniciada por um consumidor nal dos servios impe infra-estrutura de

TI.

Um CBMG compreende um conjunto de estados com probabilidades de transio as-

sociadas (grafo direcionado e ponderado). Cada estado do CBMG pode ser visto como

uma pgina do site de comrcio eletrnico sendo acessada. H basicamente dois tipos de

sesses: as sesses geradoras de faturamento revenue generating (RG) sessions e as

sesses no-geradoras de faturamento non-revenue generating (NRG) sessions. Sesses

geradoras de faturamento so aquelas que visitam algum estado gerador de faturamento do

CBMG. Como exemplo de estado gerador de faturamento tome a pgina do site de comrcio

eletrnico onde o pagamento dos itens previamente adicionados ao carrinho de compras

realizado. Sesses no-geradoras de faturamento no visitam nenhum estado gerador de fat-

uramento do CBMG, no entanto impem carga aos recursos de TI. Consumidores nais que

s realizam consultas de preos sem efetivamente concretizar a compra, por exemplo, geram

sesses no-geradoras de faturamento. Note que, a considerao de sesses no-geradoras

de faturamento acrescenta mais realismo ao modelo bsico apresentado no captulo anterior.

Por m, considerando que a modelagem da carga imposta feita por meio de CBMG, os

servios de TI so agora modelados por meio de um modelo multi-classe aberto de teoria

das las

[

Kle76a

]

. Neste modelo, cada classe CBMG representa uma classe no modelo de

teoria das las.

Neste trabalho, alm do projeto timo de infra-estruturas de TI pela perspectiva do neg-

cio, o uso da metodologia proposta em infra-estruturas adaptativas

[

KC03

]

tambm foi dis-

cutido.

3.2 Estudo do Efeito de Picos de Demanda no Projeto timo de Infra-Estruturas de TI pela

Perspectiva do Negcio 19

J o segundo artigo, cujo ttulo "Business-Driven Design of Infrastructures for IT Ser-

vices"tem uma abordagem semelhante quela do primeiro artigo discutido acima. A difer-

ena bsica diz respeito ao modelo utilizado. Neste ltimo artigo diversas extenses foram

feitas ao modelo utilizado no primeiro artigo descrito acima. Enumera-se a considerao dos

efeitos de cache entre as classes de recursos e a possibilidade de diversas visitas a um dado

estado do CBMG. Diante de tais extenses este modelo signicativamente mais completo.

Neste artigo, todos os estudos dos outros artigos (inclusive os que sero mostrados nas prx-

imas subsees) so refeitos baseados neste modelo. Portanto, tal artigo pode ser visto como

uma verso nal do trabalho desenvolvido durante o mestrado, condensando os resultados

de todos os demais trabalhos.

Apesar de tambm ter sido escrito por outras pessoas, o autor desta dissertao participou

ativamente do desenvolvimento matemtico das extenses ao modelo analtico e da obteno

dos resultados, sendo dessa forma o segundo autor dos artigos em questo.

3.2 Estudo do Efeito de Picos de Demanda no Projeto

timo de Infra-Estruturas de TI pela Perspectiva do

Negcio

Um outro estudo realizado nesta dissertao corresponde a uma generalizao do modelo

bsico apresentado no captulo anterior, cujo intuito o de permitir que um dado servio

de TI, S, seja submetido a diferentes estados de demanda. Por exemplo, servio S pode ser

submetido a uma demanda de 10 transaes por segundo durante um determinado perodo de

tempo e, em seguida, ser submetido a uma nova demanda de 20 transaes por segundo por

um outro perodo de tempo. Tal generalizao permitiu formalizar e apresentar uma nova

abordagem para projeto de infra-estruturas estticas de TI que considera picos de demanda,

a qual foi apresentada no artigo aceito no veculo internacional 10

o

IEEE/IFIP Network

Operations and Management Symposium (NOMS 2006), constante no apndice B. Picos

de demanda so bastante comuns no contexto de comrcio eletrnico, especialmente nos

perodos que precedem datas comemorativas tradicionais como Natal ou dia das mes. No

considerar a priori a existncia de tais variaes de demanda pode resultar no no atendi-

3.3 Negociao tima de SLAs Internos pela Perspectiva do Negcio 20

mento, durante os tais picos de demanda, dos requisitos de desempenho (leia-se tempo de

resposta). Como implicao direta deste ltimo fato tem-se uma possvel grande perda -

nanceira devido desistncia dos consumidores nais, o que justicada pelos altos tempos

de resposta. Alternativamente, poder-se-ia superdimensionar a infra-estrutura focando o pro-

jeto da infra-estrutura no pico de demanda. No entanto, esta alternativa tende a resultar em

uma infra-estrutura de alto custo e que provavelmente ser subutilizada durante os perodos

normais de demanda.

A idia bsica deste artigo cientco estudar o efeito que picos esperados de demanda

tm no projeto timo de infra-estruturas estticas de TI pela perspectiva do negcio. Picos

inesperados de demanda esto fora do escopo deste artigo e, portanto, no foram investiga-

dos. Picos esperados de demanda so aqueles que ocorremdurante os perodos que precedem

datas comemorativas tradicionais ou promoes planejadas de venda, cujo acontecimento

de conhecimento prvio. Tal trabalho prov respostas s seguintes perguntas: quo difer-

ente a infra-estrutura de TI resultante quando picos de demanda so considerados durante

o processo de projeto de infra-estrutura? Quo caro no considerar picos de demanda?

Como a infra-estrutura tima inuenciada por variaes nas caractersticas de um pico de

demanda?

Neste artigo, o autor da presente dissertao atua como primeiro autor. Sua participao

se deu na extenso ao modelo analtico apresentado, gerao dos resultados e escrita do

mesmo.

3.3 Negociao tima de SLAs Internos pela Perspectiva

do Negcio

O apndice C contm o artigo cientco publicado no veculo internacional intitulado 16

o

IFIP/IEEE Distributed Systems: Operations and Management (DSOM 2005). A essncia

por trs deste trabalho est relacionada especicao de valores timos de SLOs segundo

a viso dos negcios. O referido estudo est restrito ao caso onde ambos, provedor e con-

sumidor do servio, esto no mesmo domnio administrativo. Nas abordagens tradicionais

de projeto para projeto de SLAs, os valores dos SLOs so escolhidos a priori para depois

projetar uma infra-estrutura de TI que os suporte. A abordagem proposta neste artigo se

3.4 Negociao tima de SLAs Envolvendo Servios Terceirizados pela Perspectiva do

Negcio 21

contrape s abordagens tradicionais na medida que os valores dos SLOs so calculados

a posteriori, ou seja, a partir da infra-estrutura tima obtida com a otimizao do impacto

nanceiro (soma do custo da infra-estrutura de TI com a perda nanceira), como relatado

na abordagem descrita no captulo anterior. Desta maneira, evita-se que os SLOs sejam es-

colhidos de forma ad hoc e, portanto, sem nenhuma correlao com as necessidades dos

negcios.

O autor desta dissertao teve participao na elaborao do modelo analtico e par-

ticipou ativamente da seo de resultados constantes neste artigo. Desta forma, o mesmo o

segundo autor do artigo em questo.

3.4 Negociao tima de SLAs Envolvendo Servios Ter-

ceirizados pela Perspectiva do Negcio

No estudo apresentado na subseo anterior, os valores timos de SLOs so obtidos sob o

ponto de vista do cliente do servio. Tal abordagem perfeitamente aceitvel quando tanto

o provedor quanto o cliente do servio confundem-se na mesma empresa. Neste caso, as

mtricas custo da infra-estrutura e perda nanceira incorrem sobre a mesma empresa. Alm

disso, o conceito de penalidade ou recompensa geralmente no se aplica neste tipo de SLA.

Todavia, importante lembrar que a terceirizao dos servios de TI para um prove-

dor externo de servios um cenrio bastante comum atualmente. Logo, se faz necessrio

considerar tambm o ponto de vista do provedor e a existncia de penalidades e recom-

pensas e, portanto a abordagem descrita no estudo apresentado na subseo anterior no

adequada. H uma separao lgica das duas mtricas utilizadas anteriormente: o custo da

infra-estrutura est no lado provedor do servio, enquanto a perda nanceira est no lado no

cliente. Convencionalmente, o provedor do servio ir projetar uma infra-estrutura e consid-

erar as penalidades sob o seu ponto de vista, isto , de forma a maximizar o seu lucro. Desta

forma, no necessariamente h uma expresso clara da correlao existente com os objetivos

do negcio do cliente do servio. A idia bsica deste estudo apresentar uma abordagem

orientada aos negcios, capaz de projetar SLAs que envolvam servios terceirizados. Neste

sentido, prope-se que a perda nanceira do cliente do servio tambm seja considerada

durante o projeto do SLA, melhorando assim a relao cliente-provedor e resultando em

3.4 Negociao tima de SLAs Envolvendo Servios Terceirizados pela Perspectiva do

Negcio 22

melhores valores de lucro para ambos. Tal considerao se d atravs da maximizao da

margem de lucro do provedor e da margem de lucro do consumidor. Logo se tem uma

otimizao multi-objetivo, o que difere da abordagem convencional que otimiza apenas o

lucro do provedor. Para que essa nalidade seja alcanada, o referido trabalho dene uma

srie de mtricas de negcio que permitem obter as margens de lucro do cliente e do prove-

dor do servio de TI. Faturamento do cliente e do provedor do servio TI, assim com o preo

cobrado pelo provedor por este servio so exemplos de mtricas que foram denidas neste

trabalho.

Este artigo consiste do apndice E. Nele, o autor da presente dissertao atua como

primeiro autor onde sua participao se deu na extenso ao modelo analtico apresentado,

gerao dos resultados e escrita do mesmo.

Captulo 4

Discusso sobre a Validao dos Modelos

Este captulo discorrer acerca dos aspectos relacionados validao do trabalho desen-

volvido, principalmente no que diz respeito validade e acurcia dos modelos analticos.

Note que apesar de que nem todos os passos aqui descritos terem sido realizados, o principal

objetivo aqui mostrar possveis caminhos de validao dos modelos analticos desenvolvi-

dos, caracterizando assim um guia para validao. O motivo pelo qual no se realizou um

exerccio completo de validao dos modelos apresentados neste trabalho est diretamente

relacionado quantidade de artigos publicadas ou submetidas a conferncias peridicos in-

ternacionais.

Aabordagemapresentada neste trabalho est fortemente baseada emummodelo de infra-

estrutura de TI capaz de suportar determinados servios de TI. Tal modelo composto de

diversos sub-modelos analticos, apresentados brevemente no captulo 2 e detalhados nos

apndices A, B, C, D e E. Os referidos sub-modelos permitem a obteno de mtricas tais

como o custo da infra-estrutura, a disponibilidade do servio de TI suportado e a proba-

bilidade de desistncia dos consumidores nais. Os valores das referidas mtricas so us-

ados como entrada no processo de otimizao da abordagem proposta, de forma a se obter

a infra-estrutura de TI tima segundo a perspectiva dos negcios. Por modelo analtico

entende-se uma representao simplicada da realidade, a qual est baseada em um formal-

ismo matemtico implicando, portanto, em um entendimento no ambguo do mesmo. O

objetivo de se modelar apenas parte da realidade o de permitir estudar isoladamente um ou

mais comportamentos do sistema real.

Uma das grandes diculdades associadas modelagem de um sistema real diz respeito

23

24

sua complexidade. O comportamento estudado , em geral, inuenciado por um grande

nmero de fatores. Estes fatores, por sua vez, podem dizer respeito tanto ao prprio sistema

quanto a questes sociais, culturais, polticas, econmicas, etc. Logo, saber isolar os fa-

tores que realmente inuenciam o problema estudado evita que seja preciso criar um modelo

cujo nvel de detalhes seja desnecessariamente grande, concentrando esforos no que real-

mente importante. A identicao destes fatores implica, acima de tudo, na compreenso

da natureza do problema estudado. Isto permite a criao de um modelo que corresponda

a uma abstrao vlida do sistema real, isto , capaz de reproduzir os tais fenmenos de

interesse. Por exemplo, ao se modelar o componente de TI hardware, a sua cor no desem-

penha inuncia nas mtricas almejadas na abordagem em questo, e, portanto no precisa

ser includo no modelo de infra-estrutura de TI. Uma outra diculdade relacionada arte de

modelagem a obteno de valores vlidos e representativos para os parmetros de entrada

do modelo. Diante das consideraes acima apresentadas sobre a concepo de um modelo,

ca clara a necessidade de uma atividade de validao de forma a garantir que o modelo

uma abstrao representativa do sistema real, e, portanto, seus resultados so crveis. Por

m, objetivando facilitar o processo de anlise e compreenso dos fenmenos de interesse

interessante que se implemente o modelo.

A implementao do modelo foi realizada no MATLAB

[

Wor

]

. Alm de ser uma lin-

guagem de programao, MATLAB tambm um ambiente para desenvolvimento de algo-

ritmos que apresenta diversas facilidades, tais como: facilidade de gerao e manipulao

de grcos e disponibilidade de bibliotecas de funes estatsticas e matemticas, o que jus-

ticaram a sua adoo. Aps a concluso da implementao do modelo, ele teoricamente

estaria pronto para permitir anlise e compreenso dos fenmenos alvo de estudo do sistema

real. Porm h de se considerar tambm a possibilidade de erros introduzidos durante a sua

implementao. Portanto, preciso realizar tambm uma vericao da implementao. A

vericao tem por objetivo mostrar que a implementao do modelo no MATLAB funciona

como esperado e planejado, sendo, portanto uma correta implementao lgica do modelo.

A vericao da implementao do modelo foi feita atravs de ferramenta test_tools

[

Pie

]

.

Test_tools facilita a criao de testes de unidade para funes escritas em MATLAB, onde os

resultados esperados para diversos cenrios foram calculados caso a caso mo e confronta-

dos com os resultados obtidos a partir da execuo software. Tal procedimento forneceu uma

25

maior conabilidade aos resultados oriundos da implementao lgica do modelo.

Considerando que a abordagem proposta composta de diversos sub-modelos sub-

modelo de tempo de resposta, sub-modelo de custo, sub-modelo de disponibilidade e sub-

modelo de impacto deve-se tambm discutir sobre a validade destes para que seja possvel

obter alguma concluso sobre a abordagem propriamente dita. A seguir apresentado um

resumo de como a validao poderia ser realmente realizada.

A validao do sub-modelo de tempo de resposta pode ser realizada atravs da sua com-

parao com aplicaes multi-camadas reais de comrcio eletrnico rodando em uma de-

terminada congurao em cluster de servidores. Tais aplicaes teriam que ser instrumen-

tadas para possibilitar a obteno, a partir de seus logs, das seguintes mtricas: tempo de

resposta mdio, distribuio do tempo de resposta, probabilidade de desistncia. Uma vez

obtidas tais mtricas, seus valores seriam confrontados com os valores oriundos do sub-

modelo utilizando-se uma congurao de intra-estrutura semelhante. Uma outra alternativa

interessante a comparao com ferramentas de modelagem e avaliao de performance tais

como SPECweb2005

[

SPEb

]

, TPC

[

TPCb

]

, WebStone

[

Web

]

e jAppServer2004

[

SPEa

]

.

A validao do sub-modelo de disponibilidade pode ser realizada atravs da comparao

dos valores de disponibilidade obtidos com o sub-modelo proposto com valores oriundos

de trs fontes: logs histricos de aplicaes reais de comrcio eletrnico, ferramentas de

modelagem e avaliao de disponibilidade como Mbius

[

CDD

+

04

]

e SHARPE

[

ST87

]

e,

por m, outros modelos de disponibilidade de infra-estrutura de TI

[

JST03; UPS

+

05

]

. Vale

salientar que os valores de MTTR e MTBF utilizados como parmetros no referido sub-

modelo so tpicos da tecnologia atual

[

MAD04; JST03; MV00

]

.

Para validar o sub-modelo de custo, uma alternativa seria a comparao do seu valor re-

sultante de custo de uma dada infra-estrutura de TI com o valor obtido a partir da ferramenta

ISIDE (Infrastructure Systems Integrated Design Environment). O ISIDE uma implemen-

tao da metodologia proposta na referncia

[

AFT04

]

para o projeto de infra-estruturas ti-

mas em custo, que possui um banco de dados de componentes de infra-estrutura de TI e de

seus custos. Uma segunda alternativa seria a comparao com valores de infra-estruturas de

TI semelhantes obtidas junto a fornecedores de equipamentos corporativos. Como terceira

alternativa ter-se-ia a comparao com os valores obtidos utilizando-se a ferramenta TPC

Pricing Spec

[

TPCa

]

.

26

No tocante validao do sub-modelo de impacto, poder-se-ia tambm confrontar os

valores obtidos com valores constantes nos logs histricos de uma aplicao de comrcio

eletrnico. bastante provvel que no se obtenha facilmente tal informao, porm, muito

provavelmente, uma empresa que implemente conceitos de Business Intelligence (BI) deve

ter informaes sucientes para permitir tal comparao.

Uma vez validado cada um dos sub-modelos, possvel se trabalhar em torno da val-

idao da metodologia proposta. Sua validao passa basicamente pela resposta a duas

perguntas: A atividade de projeto de infra-estruturas de TI segundo a perspectiva BDIM

realmente melhor do que as outras abordagens existentes? A atividade de projeto de SLAs

segundo a perspectiva BDIM realmente melhor do que as outras abordagens existentes?

O critrio de comparao adotado para denir se uma abordagem melhor do que outra,

logicamente, se refere ao atendimento dos objetivos gerais do negcio. Logo, uma infra-

estrutura melhor que outra caso oferea um maior suporte s operaes de negcio. Um

critrio anlogo adotado para o caso de SLAs. Para quanticar tal comparao, sugere-se

a adoo das mtricas perda nanceira e custo da infra-estrutura de TI. As referncias con-

stantes nos apndices B e E caminharam neste sentido, quando realizaram comparaes da

abordagem proposta com a abordagem convencional, a qual fora previamente formalizada

nos referidos trabalhos. Nestes estudos, cou claro que o resultado nal da metodologia

proposta, quando comparado com o resultado da abordagem tradicional, superior. Tal fato

constatado quando a aplicao da abordagem proposta incorre em um menor impacto -

nanceiro (perda nanceira + custo da infra-estrutura de TI) e melhores valores de lucro (para

o caso do projeto de SLAs que envolvem servios terceirizados).

Captulo 5

Concluses e Trabalhos Futuros

No discorrer deste captulo sero tecidas as consideraes nais sobre o presente trabalho de

dissertao. A seo 5.1 sumariza as principais concluses obtidas com os estudos realizados

nesta dissertao. Por m, a seo 5.2 discute idias de possveis prximos passos para este

trabalho.

Nesta dissertao, realizou-se uma investigao formal de tcnicas para (i) projetar infra-

estruturas de Tecnologia da Informao e (ii) escolher parmetros de Acordos de Nveis de

Servio de TI segundo uma perspectiva de negcio, de forma a otimizar mtricas de neg-

cios. Esta investigao resultou na proposta de uma abordagem que foi aplicada em diversos

estudos realizados nos artigos constantes nos apndices A, B, C, D e E. Como mostrado nos

referidos apndices, a principal diferena desta abordagem, quando comparada com aborda-

gens convencionais, diz respeito s mtricas consideradas. Tradicionalmente, as abordagens

convencionais esto baseadas apenas em mtricas tcnicas. A abordagem proposta consid-

era, alm das mtricas tcnicas convencionais, o impacto negativo nos negcios, oriundo

a partir de falhas na infra-estrutura de TI e de degradaes de performance. Tal impacto

negativo obtido atravs de um modelo de impacto, conforme proposto por BDIM, cuja

funo criar uma relao causa-efeito entre a TI. Este mapeamento (relao causa-efeito)

entre eventos ocorridos na TI com o impacto quantitativo destes nos resultados do negcio,

fornece uma base de informao que propicia a tomada de decises que melhorem os re-

sultados do negcio em si e tambm da TI. Neste sentido, tal informao permitiu que dois

problemas em especial da gerncia de TI (projeto de SLAs e de infra-estruturas) fossem reex-

aminados. Um modelo de impacto em particular foi apresentado e utilizado por este trabalho

27

28

nos diversos estudos realizados. Com ele possvel quanticar o impacto que eventos de TI