Work 20 (2003) 159–165, IOS Press

Speaking of Research

Pretest-posttest designs and measurement of change

Dimiter M. Dimitrov and Phillip D. Rumrill, Jr.

507 White Hall, College of Education, Kent State University, Kent, OH 44242-0001, USA
Tel.: +1 330 672 0582; Fax: +1 330 672 3737; E-mail: ddimitro@kent.edu

Abstract. The article examines issues involved in comparing groups and measuring change with pretest and posttest data. Different pretest-posttest designs are presented in a manner that can help rehabilitation professionals to better understand and determine effects resulting from selected interventions. The reliability of gain scores in pretest-posttest measurement is also discussed in the context of rehabilitation research and practice.

Keywords: Treatment effects, pretest-posttest designs, measurement of change

1. Introduction

Pretest-posttest designs are widely used in behavioral research, primarily for the purpose of comparing groups and/or measuring change resulting from experimental treatments. The focus of this article is on comparing groups with pretest and posttest data and related reliability issues. In rehabilitation research, change is commonly measured in such dependent variables as employment status, income, empowerment, assertiveness, self-advocacy skills, and adjustment to disability. The measurement of change provides a vehicle for assessing the impact of rehabilitation services, as well as the effects of specific counseling and allied health interventions.

1051-9815/03/$8.00 © 2003 IOS Press. All rights reserved

2. Basic pretest-posttest experimental designs

This section addresses designs in which one or more experimental groups are exposed to a treatment or intervention and then compared to one or more control groups who did not receive the treatment. Brief notes on internal and external validity of such designs are first necessary. Internal validity is the degree to which the experimental treatment makes a difference in (or causes change in) the specific experimental settings. External validity is the degree to which the treatment effect can be generalized across populations, settings, treatment variables, and measurement instruments. As described in previous research (e.g., [11]), factors that threaten internal validity are: history, maturation, pretest effects, instruments, statistical regression toward the mean, differential selection of participants, mortality, and interactions of factors (e.g., selection and maturation). Threats to external validity include: interaction effects of selection biases and treatment, reactive interaction effect of pretesting, reactive effect of experimental procedures, and multiple-treatment interference. For a thorough discussion of threats to internal and external validity, readers may consult Bellini and Rumrill [1].

Notations used in this section are: \(Y_1\) = pretest scores, \(T\) = experimental treatment, \(Y_2\) = posttest scores, \(D = Y_2 - Y_1\) (gain scores), and RD = randomized design (random selection and assignment of participants to groups and, then, random assignment of groups to treatments). With the RDs discussed in this section, one can compare experimental and control groups on (a) posttest scores, while controlling for pretest differences or (b)


mean gain scores, that is, the difference between the posttest mean and the pretest mean. Appropriate statistical methods for such comparisons and related measurement issues are discussed later in this article.

Design 1: Randomized control-group pretest-posttest design

With this RD, all conditions are the same for both the experimental and control groups, with the exception that the experimental group is exposed to a treatment, \(T\), whereas the control group is not. Maturation and history are major problems for internal validity in this design, whereas the interaction of pretesting and treatment is a major threat to external validity. Maturation occurs when biological and psychological characteristics of research participants change during the experiment, thus affecting their posttest scores. History occurs when participants experience an event (external to the experimental treatment) that affects their posttest scores. Interaction of pretesting and treatment comes into play when the pretest sensitizes participants so that they respond to the treatment differently than they would with no pretest. For example, participants in a job-seeking skills training program take a pretest regarding job-seeking behaviors (e.g., how many applications they have completed in the past month, how many job interviews they have attended). Responding to questions about their job-seeking activities might prompt participants to initiate or increase those activities, irrespective of the intervention.

Design 2: Randomized Solomon four-group design

This RD involves two experimental groups, \(E_1\) and \(E_2\), and two control groups, \(C_1\) and \(C_2\). All four groups complete posttest measures, but only groups \(E_1\) and \(C_1\) complete pretest measures in order to allow for better control of pretesting effects. In general, the Solomon four-group RD enhances both internal and external validity.
This design, unlike other pretest-posttest RDs, also allows the researcher to evaluate separately the magnitudes of effects due to treatment, maturation, history, and pretesting. Let \(D_1\), \(D_2\), \(D_3\), and \(D_4\) denote the gain scores for groups \(E_1\), \(C_1\), \(E_2\), and \(C_2\), respectively. These gain scores are affected by several factors (given in parentheses) as follows: \(D_1\) (pretesting, treatment, maturation, history), \(D_2\) (pretesting, maturation, history), \(D_3\) (treatment, maturation, history), and \(D_4\) (maturation, history). With this, the difference \(D_3 - D_4\) evaluates the effect of treatment alone, \(D_2 - D_4\) the effect of pretesting alone, and \(D_1 - D_2 - D_3\) the effect of interaction of pretesting and treatment [11, pp. 68].
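The contrasts among the four gain scores can be sketched in a few lines of Python. This is only an illustration under stated assumptions: all scores are invented, and because groups \(E_2\) and \(C_2\) take no pretest, the sketch assumes their gains are computed against the pooled pretest mean of \(E_1\) and \(C_1\) (a choice that randomization makes plausible but that the text does not spell out). The contrast \(D_1 - D_2 - D_3\) is reproduced as written above. The extra contrast \(D_1 - D_2 - D_3 + D_4\) is not taken from the article; it is the conventional 2 × 2 interaction contrast, included because it removes the maturation and history component that all four gains share.

```python
from statistics import mean

# Hypothetical scores, invented for illustration only.
pre_e1, post_e1 = [50, 52, 48, 50], [62, 64, 60, 62]   # E1: pretest + treatment
pre_c1, post_c1 = [49, 51, 50, 50], [54, 56, 55, 55]   # C1: pretest only
post_e2 = [58, 60, 59, 59]                             # E2: treatment, no pretest
post_c2 = [52, 53, 51, 52]                             # C2: no pretest, no treatment

# Assumption: unpretested groups are compared with the pooled pretest mean
# of the two pretested groups.
pooled_pretest_mean = mean(pre_e1 + pre_c1)

d1 = mean(post_e1) - mean(pre_e1)          # pretesting, treatment, maturation, history
d2 = mean(post_c1) - mean(pre_c1)          # pretesting, maturation, history
d3 = mean(post_e2) - pooled_pretest_mean   # treatment, maturation, history
d4 = mean(post_c2) - pooled_pretest_mean   # maturation, history


def solomon_contrasts(d1, d2, d3, d4):
    """Return the contrasts among mean gain scores described in the text."""
    return {
        "treatment": d3 - d4,
        "pretesting": d2 - d4,
        # Contrast exactly as stated in the text.
        "pretest_x_treatment_as_stated": d1 - d2 - d3,
        # Not from the article: conventional 2 x 2 interaction contrast.
        "pretest_x_treatment_2x2": d1 - d2 - d3 + d4,
    }


contrasts = solomon_contrasts(d1, d2, d3, d4)
for name, value in contrasts.items():
    print(f"{name}: {value}")
```

With these invented numbers the treatment contrast is 7 and the pretesting contrast is 3. The data were built with no pretest-by-treatment interaction, so the two interaction contrasts differ by exactly \(D_4\).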

Despite the advantages of the Solomon four-group RD, Design 1 is still predominantly used in studies with pretest-posttest data. When the groups are relatively large, one can, for example, randomly split the experimental group into two groups and the control group into two groups to use the Solomon four-group RD. However, sample size is almost always an issue in intervention studies in rehabilitation, which often leaves researchers opting for the simpler, more limited two-group design.

Design 3: Nonrandomized control group pretest-posttest design

This design is similar to Design 1, but the participants are not randomly assigned to groups. Design 3 has practical advantages over Design 1 and Design 2, because it deals with intact groups and thus does not disrupt the existing research setting. This reduces the reactive effects of the experimental procedure and, therefore, improves the external validity of the design. Indeed, conducting a legitimate experiment without the participants being aware of it is possible with intact groups, but not with random assignment of subjects to groups. Design 3, however, is more sensitive to internal validity problems due to interaction between such factors as selection and maturation, selection and history, and selection and pretesting. For example, a common quasi-experimental approach in rehabilitation research is to use time sampling methods whereby the first, say, 25 participants receive an intervention and the next 25 or so form a control group. The problem with this approach is that, even if there are posttest differences between groups, those differences may be attributable to characteristic differences between groups rather than to the intervention. Random assignment to groups, on the other hand, equalizes groups on existing characteristics and, thereby, isolates the effects of the intervention.

3. Statistical methods for analysis of pretest-posttest data

The following statistical methods are traditionally used in comparing groups with pretest and posttest data: (1) Analysis of variance (ANOVA) on the gain scores, (2) Analysis of covariance (ANCOVA), (3) ANOVA on residual scores, and (4) Repeated measures ANOVA. In all these methods, the use of pretest scores helps to reduce error variance, thus producing more powerful tests than designs with no pretest data [22]. Generally speaking, the power of the test represents the probability of detecting differences between the groups being compared when such differences exist.
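As a rough illustration of methods (1) and (4) in this list, the sketch below computes two F ratios from the article's demonstration data (Table 1): the one-way ANOVA on gain scores, and the between-subjects Group effect of the repeated measures ANOVA. The helper `one_way_f` is a hypothetical name introduced here, not a library function. The second computation relies on a standard property of the split-plot design rather than on anything stated in the article: the between-subjects F equals a one-way ANOVA F on each subject's pretest + posttest total.

```python
# Demonstration data from Table 1: three groups of six subjects each.
pretest = {
    1: [48, 70, 35, 41, 43, 39],
    2: [53, 67, 84, 56, 44, 74],
    3: [80, 72, 54, 66, 69, 67],
}
posttest = {
    1: [60, 50, 41, 62, 32, 44],
    2: [71, 85, 82, 55, 62, 77],
    3: [84, 80, 79, 84, 66, 65],
}


def one_way_f(groups):
    """Return (F, df_between, df_within) for a one-way ANOVA on lists of scores."""
    scores = [x for g in groups for x in g]
    grand_mean = sum(scores) / len(scores)
    ss_between = sum(len(g) * (sum(g) / len(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((x - sum(g) / len(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(scores) - len(groups)
    f_ratio = (ss_between / df_between) / (ss_within / df_within)
    return f_ratio, df_between, df_within


# Gain scores D = Y2 - Y1 and subject totals Y1 + Y2, group by group.
gains = [[y2 - y1 for y1, y2 in zip(pretest[g], posttest[g])] for g in (1, 2, 3)]
totals = [[y2 + y1 for y1, y2 in zip(pretest[g], posttest[g])] for g in (1, 2, 3)]

f_gain, df_between, df_within = one_way_f(gains)   # ANOVA on gain scores
f_group, _, _ = one_way_f(totals)                  # repeated measures Group effect

print(f"ANOVA on gain scores:     F({df_between}, {df_within}) = {f_gain:.2f}")
print(f"Repeated measures, Group: F({df_between}, {df_within}) = {f_group:.2f}")
```

Run as written, the two ratios round to 0.56 and 11.34, matching the values the article reports for these data in Table 2.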


3.1. ANOVA on gain scores

The gain scores, \(D = Y_2 - Y_1\), represent the dependent variable in ANOVA comparisons of two or more groups. The use of gain scores in measurement of change has been criticized because of the (generally false) assertion that the difference between scores is much less reliable than the scores themselves [5,14,15]. This assertion is true only if the pretest scores and the posttest scores have equal (or proportional) variances and equal reliability. When this is not the case, which may happen in many testing situations, the reliability of the gain scores can be high [18,19,23]. Moreover, unreliability of the gain score does not preclude valid testing of the null hypothesis of zero mean gain score in a population of examinees. If the gain score is unreliable, however, it is not appropriate to correlate the gain score with other variables in a population of examinees [17]. An important practical implication is that, without ignoring the caution urged by previous authors, researchers should not always discard gain scores and should be aware of situations when gain scores are useful.

3.2. ANCOVA with pretest-posttest data

The purpose of using the pretest scores as a covariate in ANCOVA with a pretest-posttest design is to (a) reduce the error variance and (b) eliminate systematic bias. With randomized designs (e.g., Designs 1 and 2), the main purpose of ANCOVA is to reduce error variance, because the random assignment of subjects to groups guards against systematic bias. With nonrandomized designs (e.g., Design 3), the main purpose of ANCOVA is to adjust the posttest means for differences among groups on the pretest, because such differences are likely to occur with intact groups. It is important to note that when pretest scores are not reliable, the treatment effects can be seriously biased in nonrandomized designs. The same is true if measurement error is present on any other covariate when ANCOVA uses covariates in addition to the pretest.
Another problem with ANCOVA relates to differential growth of subjects in intact or self-selected groups on the dependent variable [3]. Pretest differences (systematic bias) between groups can affect the interpretations of posttest differences.

Let us remind ourselves that assumptions such as randomization, linear relationship between pretest and posttest scores, and homogeneity of regression slopes underlie ANCOVA. In an attempt to avoid problems that could be created by a violation of these assumptions, some researchers use ANOVA on gain scores without knowing that the same assumptions are required for the analysis of gain scores. Previous research [4] has demonstrated that when the regression slope equals 1, ANCOVA and ANOVA on gain scores produce the same F ratio, with the gain score analysis being slightly more powerful due to the lost degrees of freedom with the analysis of covariance. When the regression slope does not equal 1, which is usually the case, ANCOVA will result in a more powerful test. Another advantage of ANCOVA over ANOVA on gain scores is that when some assumptions do not hold, ANCOVA allows for modifications leading to appropriate analysis, whereas the gain score ANOVA does not. For example, if there is no linear relationship between pretest and posttest scores, ANCOVA can be extended to include a quadratic or cubic component. Or, if the regression slopes are not equal, ANCOVA can lead into procedures such as the Johnson-Neyman technique that provide regions of significance [4].

3.3. ANOVA on residual scores

Residual scores represent the difference between observed posttest scores and their predicted values from a simple regression using the pretest scores as a predictor. An attractive characteristic of residual scores is that, unlike gain scores, they do not correlate with the observed pretest scores. Also, as Zimmerman and Williams [23] demonstrated, residual scores contain less error than gain scores when the variance of the pretest scores is larger than the variance of posttest scores. Compared to the ANCOVA model, however, the ANOVA on residual scores is less powerful and some authors recommend that it be avoided. Maxwell, Delaney, and Manheimer [16] warned researchers about a common misconception that ANOVA on residual scores is the same as ANCOVA.
They demonstrated that: (a) when the residuals are obtained from the pooled within-group regression coefcients, ANOVA on residual scores results in an inated -level of signicance and (b) when the regression coefcient for the total sample of all groups combined is used, ANOVA on residual scores yields an inappropriately conservative test [16]. 3.4. Repeated measures ANOVA with pretest-posttest data Repeated measures ANOVA is used with pretestposttest data as a mixed (split-plot) factorial design with one between-subjects factor (the grouping vari-

162

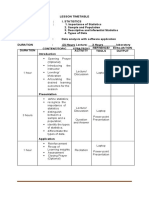

D.M. Dimitrov and P.D. Rumrill, Jr. / Pretest-posttest designs and measurement of change Table 1 Pretest-posttest data for the comparison of three groups

Subject 1 1 1 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18

Group 1 1 1 1 1 1 2 2 2 2 2 2 3 3 3 3 3 3

Pretest 48 70 35 41 43 39 53 67 84 56 44 74 80 72 54 66 69 67

Posttest 60 50 41 62 32 44 71 85 82 55 62 77 84 80 79 84 66 65

Gain 12 20 6 21 11 5 18 18 2 1 18 3 4 8 25 18 3 2

4. Measurement of change with pretest-posttest data 4.1. Classical approach As noted earlier in this article, the classical approach of using gain scores in measuring change has been criticized for decades [5,14,15] because of the (not always true) assertion that gain scores have low reliability. Again, this assertion is true only when the pretest scores and the posttest scores are equally reliable and have equal variances. Therefore, although the reliability of each of the pretest scores and posttest scores should be a legitimate concern, the reliability of the difference between them should not be thought of as always being low and should not preclude using gain scores in change evaluations. Unfortunately, some researchers still labor under the inertia of traditional, yet inappropriate, generalizations. It should also be noted that there are other, and more serious, problems with the traditional measurement of change that deserve special attention. First, although measurement of change in terms mean gain scores is appropriate in industrial and agricultural research, its methodological appropriateness and social benet in behavioral elds is questionable. Bock [2, pp. 76] noted, nor is it clear that, for example, a method yielding a lower mean score in an instructional experiment is uniformly inferior to its competitor, even when all of the conditions for valid experimentation are met. It is possible, even likely, that the method with the lower mean is actually the more benecial for some minority of students. A second, more technical, problem with using rawscore differences in measuring change relates to the fact that such differences are generally misleading because they depend on the level of difculty of the test items. This is because the raw scores do not adequately represent the actual ability that underlies the performance on a (pre- or post) test. 
In general, the relationship between raw scores and ability scores is not linear and, therefore, equal (raw) gain scores do not represent equal changes of ability. Fischer [7] demonstrated that, if a low ability person and a high ability person have made the same change on a particular ability scale (i.e., derived exactly the same benets from the treatment), the raw-score differences will misrepresent this fact. Specically, with a relatively easy test, the raw-score differences will (falsely) indicate higher change for the low ability person and, conversely, with a more difcult test, they will (falsely) indicate higher change for

able) and one within-subjects (pretest-posttest) factor. Unfortunately, this is not a healthy practice because previous research [10,12] has demonstrated that the results provided by repeated measures ANOVA for pretestposttest data can be misleading. Specically, the F test for the treatment main effect (which is of primary interest) is very conservative because the pretest scores are not affected by the treatment. A very little known fact is also that the F statistic for the interaction between the treatment factor and the pretest-posttest factor is identical to the F statistic for the treatment main effect with a one-way ANOVA on gain scores [10]. Thus, when using repeated measures ANOVA with pretest-posttest data, the interaction F ratio, not the main effect F ratio, should be used for testing the treatment main effect. A better practice is to directly use one-way ANOVA on gain scores or, even better, use ANCOVA with the pretest scores as a covariate. Table 1 contain pretest-posttest data for the comparison of three groups on the dependent variable Y. Table 2 shows the results from both the ANOVA on gain scores and the repeated measures ANOVA with one between subjects factor (Group) and one within subjects factor, Time (pretest-posttest). As one can see, the F value with the ANOVA on gain scores is identical to the F value for the interaction Group x Time with the repeated measures ANOVA design: F (2, 15) = 0.56, p = 0.58. In fact, using the F value for the between subjects factor, Group, with the repeated measures ANOVA would be a (common) mistake: F (2, 15) = 11.34, p = 0.001. This leads in this case to a false rejection of the null hypothesis about differences among the compared groups.

D.M. Dimitrov and P.D. Rumrill, Jr. / Pretest-posttest designs and measurement of change Table 2 ANOVA on gain scores and repeated measures ANOVA on demonstration data Model/Source of variation df ANOVA on gain scores Between subjects Group (G) 2 S within-group error 15 F p

163

0.56

0.58

Repeated measures ANOVA Between subjects Group (G) 2 11.34 S within-group error 15 Within subjects Time (T) 1 TG 2 T G within-group error 15 5.04 0.56

0.00

0.04 0.58

Note. The F value for G with the ANOVA on gain score is the same as the F value for T G with repeated measures ANOVA.

the high ability person. Indeed, researchers should be aware of limitations and pitfalls with using raw-score differences and should rely on dependable theoretical models for measurement and evaluation of change.

4.2. Modern approaches for measurement of change

The brief discussion of modern approaches for measuring change in this section requires the definition of some concepts from classical test theory (CTT) and item response theory (IRT). In CTT, each observed score, \(X\), is a sum of a true score, \(T\), and an error of measurement, \(E\) (i.e., \(X = T + E\)). The true score is unobservable, because it represents the theoretical mean of all observed scores that an individual may have under an unlimited number of administrations of the same test under the same conditions. The statistical tests for measuring change in true scores from pretest to posttest have important advantages over the classical raw-score differences in terms of accuracy, flexibility, and control of error sources. Theoretical frameworks, designs, procedures, and software for such tests, based on structural equation modeling, have been developed and successfully used in the last three decades [13,21].

In IRT, the term "ability" connotes a latent trait that underlies performance on a test [9]. The ability score of an individual determines the probability for that individual to answer correctly any test item or perform a measured task. The units of the ability scale, called logits, typically range from −4 to 4. It is important to note that item difficulties and ability scores are located on the same (logit) scale. With the one-parameter IRT model (Rasch model), the probability of a correct answer on any item for a person depends on the difficulty parameter of the item and the ability score of the person. Fischer [7] extended the Rasch model to a Linear Logistic Model for Change (LLMC) for measuring both individual and group changes on the logit scale. One of the valuable features of the LLMC is that it separates the ability change into two parts: (1) treatment effect, the change part due to the experimental treatment, and (2) trend effect, the change part due to factors such as biological maturation, cognitive development, and other natural trends that have occurred during the pretest to posttest time period. Another important feature of the LLMC is that the two change components, treatment effect and trend effect, are represented on a ratio-type scale. Thus, the ratio of any two (treatment or trend) change effects indicates how many times one of them is greater (or smaller) than the other. Such information, not available with other methods for measuring change, can help researchers in conducting valid interpretations of change magnitudes and trends, and in making subsequent informed decisions. The LLMC has been applied in measuring change in various pretest-posttest situations [6,20]. User-friendly computer software for the LLMC is also available [8].
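The Rasch model mentioned above, and the raw-score distortion described in Section 4.1, can be illustrated with a short sketch. It uses the standard one-parameter logistic form, in which the probability of a correct answer is a logistic function of ability minus item difficulty. The item difficulties, ability values, and function names are hypothetical choices made for illustration; the sketch does not implement the LLMC itself.

```python
import math


def rasch_probability(ability, difficulty):
    """Probability of a correct answer under the Rasch (one-parameter) model."""
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))


def expected_raw_score(ability, difficulties):
    """Expected number of correct answers on a test with the given item difficulties."""
    return sum(rasch_probability(ability, b) for b in difficulties)


def expected_raw_gain(ability_before, ability_change, difficulties):
    """Expected raw-score gain produced by a given change on the logit scale."""
    return (expected_raw_score(ability_before + ability_change, difficulties)
            - expected_raw_score(ability_before, difficulties))


# Hypothetical item difficulties (in logits) for an easy and a difficult test.
easy_test = [-2.0, -1.5, -1.0, -0.5, 0.0]
hard_test = [0.0, 0.5, 1.0, 1.5, 2.0]

# Two hypothetical persons who both improve by exactly one logit.
low_ability, high_ability, change = -1.0, 1.0, 1.0

gain_low_easy = expected_raw_gain(low_ability, change, easy_test)
gain_high_easy = expected_raw_gain(high_ability, change, easy_test)
gain_low_hard = expected_raw_gain(low_ability, change, hard_test)
gain_high_hard = expected_raw_gain(high_ability, change, hard_test)

print(f"Easy test: low ability gain = {gain_low_easy:.2f}, "
      f"high ability gain = {gain_high_easy:.2f}")
print(f"Hard test: low ability gain = {gain_low_hard:.2f}, "
      f"high ability gain = {gain_high_hard:.2f}")
```

Although both persons change by the same amount on the logit scale, the expected raw-score gain is larger for the low ability person on the easy test and larger for the high ability person on the difficult test, which is the pattern the text attributes to raw-score differences.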

5. Conclusion

Important summary points are as follows:

1. The experimental and control groups with Designs 1 and 2 discussed in Section 2 of this article are assumed to be equivalent on the pretest or other variables that may affect their posttest scores on the basis of random selection. Both designs control well for threats to internal and external validity. Design 2 (Solomon four-group design) is superior to Design 1 because, along with controlling for effects of history, maturation, and pretesting, it allows for evaluation of the magnitudes of such effects. With Design 3 (nonrandomized control-group design), the groups being compared cannot be assumed to be equivalent on the pretest. Therefore, the data analysis with this design should use ANCOVA or another appropriate statistical procedure. An advantage of Design 3 over Designs 1 and 2 is that it involves intact groups (i.e., keeps the participants in natural settings), thus allowing a higher degree of external validity.

2. The discussion of statistical methods for analysis of pretest-posttest data in this article focuses on several important facts. First, contrary to the traditional misconception, the reliability of gain scores is high in many practical situations, particularly when the pre- and posttest scores do not have equal variance and equal reliability. Second, the unreliability of gain scores does not preclude valid testing of the null hypothesis related to the mean gain score in a population of examinees. It is not appropriate, however, to correlate unreliable gain scores with other variables. Third, ANCOVA should be the preferred method for analysis of pretest-posttest data. ANOVA on gain scores is also useful, whereas ANOVA on residual scores and repeated measures ANOVA with pretest-posttest data should be avoided. With randomized designs (Designs 1 and 2), the purpose of ANCOVA is to reduce error variance, whereas with nonrandomized designs (Design 3) ANCOVA is used to adjust the posttest means for pretest differences among intact groups. If the pretest scores are not reliable, the treatment effects can be seriously biased, particularly with nonrandomized designs. Another caution with ANCOVA relates to possible differential growth on the dependent variable in intact or self-selected groups.

3. The methodological appropriateness and social benefit of measuring change in terms of mean gain score are questionable; it is not clear, for example, that a method yielding a lower mean gain score in a rehabilitation experiment is uniformly inferior to the other method(s) involved in this experiment. Also, the results from using raw-score differences in measuring change are generally misleading because they depend on the level of difficulty of test items. Specifically, for subjects with equal actual (true score or ability) change, an easy test (a ceiling effect test) will falsely favor low ability subjects and, conversely, a difficult test (a floor effect test) will falsely favor high ability subjects. These problems with raw-score differences are eliminated by using (a) modern approaches such as structural equation modeling for measuring true score changes or (b) item response models (e.g., LLMC) for measuring changes in the ability underlying subjects' performance on a test. Researchers in the field of rehabilitation can also benefit from using recently developed computer software with modern theoretical frameworks and procedures for measuring change across two (pretest-posttest) or more time points.
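The first fact in summary point 2 (gain scores are not necessarily unreliable) can be illustrated with the classical test theory expression for the reliability of a difference score. The formula below is the standard CTT result for \(D = Y_2 - Y_1\) under the assumption of uncorrelated measurement errors; it is not quoted from this article, and the function name and all numerical values are hypothetical.

```python
def gain_score_reliability(sd_pre, sd_post, rel_pre, rel_post, r_pre_post):
    """Reliability of the gain score D = Y2 - Y1 under classical test theory.

    Assumes measurement errors on pretest and posttest are uncorrelated.
    sd_*: standard deviations; rel_*: reliabilities;
    r_pre_post: observed pretest-posttest correlation.
    """
    covariance_term = 2.0 * sd_pre * sd_post * r_pre_post
    true_gain_variance = (sd_pre ** 2) * rel_pre + (sd_post ** 2) * rel_post - covariance_term
    observed_gain_variance = sd_pre ** 2 + sd_post ** 2 - covariance_term
    return true_gain_variance / observed_gain_variance


# Equal variances and equal reliabilities: the case in which the traditional
# criticism of gain scores applies.
equal_case = gain_score_reliability(10.0, 10.0, 0.80, 0.80, 0.70)

# Same reliabilities and correlation, but posttest scores spread out more.
unequal_case = gain_score_reliability(5.0, 15.0, 0.80, 0.80, 0.70)

print(f"Equal variances:   {equal_case:.2f}")
print(f"Unequal variances: {unequal_case:.2f}")
```

With these illustrative values the gain score reliability is about 0.33 when the variances are equal and about 0.66 when they differ, even though the pretest and posttest reliabilities are the same in both cases.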

References

[1] J. Bellini and P. Rumrill, Research in rehabilitation counseling, Springfield, IL: Charles C. Thomas.

[2] R.D. Bock, Basic issues in the measurement of change, in: Advances in Psychological and Educational Measurement, D.N.M. DeGruijter and L.J.Th. Van der Kamp, eds, John Wiley & Sons, NY, 1976, pp. 75–96.

[3] A.D. Bryk and H.I. Weisberg, Use of the nonequivalent control group design when subjects are growing, Psychological Bulletin 85 (1977), 950–962.

[4] I.S. Cahen and R.L. Linn, Regions of significant criterion difference in aptitude-treatment interaction research, American Educational Research Journal 8 (1971), 521–530.

[5] L.J. Cronbach and L. Furby, How should we measure change - or should we? Psychological Bulletin 74 (1970), 68–80.

[6] D.M. Dimitrov, S. McGee and B. Howard, Changes in students' science ability produced by multimedia learning environments: Application of the Linear Logistic Model for Change, School Science and Mathematics 102(1) (2002), 15–22.

[7] G.H. Fischer, Some probabilistic models for measuring change, in: Advances in Psychological and Educational Measurement, D.N.M. DeGruijter and L.J.Th. Van der Kamp, eds, John Wiley & Sons, NY, 1976, pp. 97–110.

[8] G.H. Fischer and E. Ponocny-Seliger, Structural Rasch modeling, Handbook of the usage of LPCM-WIN 1.0, Progamma, Netherlands, 1998.

[9] R.K. Hambleton, H. Swaminathan and H.J. Rogers, Fundamentals of Item Response Theory, Sage, Newbury Park, CA, 1991.

[10] S.W. Huck and R.A. McLean, Using a repeated measures ANOVA to analyze data from a pretest-posttest design: A potentially confusing task, Psychological Bulletin 82 (1975), 511–518.

[11] S. Isaac and W.B. Michael, Handbook in research and evaluation, 2nd ed., EdITS, San Diego, CA, 1981.

[12] E. Jennings, Models for pretest-posttest data: repeated measures ANOVA revisited, Journal of Educational Statistics 13 (1988), 273–280.

[13] K.G. Jöreskog and D. Sörbom, Statistical models and methods for test-retest situations, in: Advances in Psychological and Educational Measurement, D.N.M. DeGruijter and L.J.Th. Van der Kamp, eds, John Wiley & Sons, NY, 1976, pp. 135–157.

[14] L. Linn and J.A. Slindle, The determination of the significance of change between pre- and posttesting periods, Review of Educational Research 47 (1977), 121–150.

[15] F.M. Lord, The measurement of growth, Educational and Psychological Measurement 16 (1956), 421–437.

[16] S. Maxwell, H.D. Delaney and J. Manheimer, ANOVA of residuals and ANCOVA: Correcting an illusion by using model comparisons and graphs, Journal of Educational Statistics 95 (1985), 136–147.

[17] G.J. Mellenbergh, A note on simple gain score precision, Applied Psychological Measurement 23 (1999), 87–89.

[18] J.E. Overall and J.A. Woodward, Unreliability of difference scores: A paradox for measurement of change, Psychological Bulletin 82 (1975), 85–86.

[19] D. Rogosa, D. Brandt and M. Zimowski, A growth curve approach to the measurement of change, Psychological Bulletin 92 (1982), 726–748.

[20] I. Rop, The application of a linear logistic model describing the effects of preschool education on cognitive growth, in: Some mathematical models for social psychology, W.H. Kempf and B.H. Repp, eds, Huber, Bern, 1976.

[21] D. Sörbom, A statistical model for the measurement of change in true scores, in: Advances in Psychological and Educational Measurement, D.N.M. DeGruijter and L.J.Th. Van der Kamp, eds, John Wiley & Sons, NY, 1976, pp. 1159–1170.

[22] J. Stevens, Applied multivariate statistics for the social sciences, 3rd ed., Lawrence Erlbaum, Mahwah, NJ, 1996.

[23] D.W. Zimmerman and R.H. Williams, Gain scores in research can be highly reliable, Journal of Educational Measurement 19 (1982), 149–154.


- The Impact of Student Behavior on Teacher Teaching MethodsDocument32 paginiThe Impact of Student Behavior on Teacher Teaching MethodsAaron FerrerÎncă nu există evaluări

- Factors Influencing The Academic Performance in Physics of DMMMSU - MLUC Laboratory High School Fourth Year Students S.Y. 2011-2012 1369731433 PDFDocument11 paginiFactors Influencing The Academic Performance in Physics of DMMMSU - MLUC Laboratory High School Fourth Year Students S.Y. 2011-2012 1369731433 PDFRon BachaoÎncă nu există evaluări

- Mathematics Learning Profile of Junior High SchoolDocument11 paginiMathematics Learning Profile of Junior High SchoolGlobal Research and Development ServicesÎncă nu există evaluări

- Solving Math Equations ProblemsDocument26 paginiSolving Math Equations ProblemsJorrel SeguiÎncă nu există evaluări

- Chapter 3Document17 paginiChapter 3inggitÎncă nu există evaluări

- Chapter 3Document3 paginiChapter 3Jane SandovalÎncă nu există evaluări

- Dependent T-Test for Paired SamplesDocument2 paginiDependent T-Test for Paired SamplesShuzumiÎncă nu există evaluări

- Math Anxiety and Academic PerformanceDocument19 paginiMath Anxiety and Academic PerformancejhazÎncă nu există evaluări

- CHAPTER 3 and 4Document12 paginiCHAPTER 3 and 4albert matandagÎncă nu există evaluări

- Self-Efficacy and Attitude As Predictors of Mathematics Performance of Senior High School StudentsDocument10 paginiSelf-Efficacy and Attitude As Predictors of Mathematics Performance of Senior High School StudentsIJAR JOURNAL100% (1)

- Student and Teacher Factors in Mathematics PerformanceDocument6 paginiStudent and Teacher Factors in Mathematics PerformanceALJERR LAXAMANAÎncă nu există evaluări

- Chapter I Chapter IiDocument19 paginiChapter I Chapter IiCheryl Joy Domingo SoteloÎncă nu există evaluări

- The Relationship of Math Interest and Final Grades in Math of Grade 12 STEM Students in University of CebuDocument12 paginiThe Relationship of Math Interest and Final Grades in Math of Grade 12 STEM Students in University of CebuErica ArroganteÎncă nu există evaluări

- Effects of Technology in Student Motivation and Engagement in Classroom IntroductionDocument2 paginiEffects of Technology in Student Motivation and Engagement in Classroom IntroductionManny Karganilla100% (1)

- Factors Influencing The Mathematics Achievement of Grade 11 Stemstudents in The New NormalDocument104 paginiFactors Influencing The Mathematics Achievement of Grade 11 Stemstudents in The New NormalRobelyn CasipeÎncă nu există evaluări

- Senior High School Academic Progression in MathematicsDocument10 paginiSenior High School Academic Progression in MathematicsGlobal Research and Development ServicesÎncă nu există evaluări

- The Relationship Between Academic Intelligence and Procrastination of Grade 12 StudentsDocument8 paginiThe Relationship Between Academic Intelligence and Procrastination of Grade 12 Studentsjeliena-malazarteÎncă nu există evaluări

- STEM ResearchProposalPaperDocument26 paginiSTEM ResearchProposalPaperIa NavarroÎncă nu există evaluări

- Types of Probability SamplingDocument1 paginăTypes of Probability SamplingMark Robbiem BarcoÎncă nu există evaluări

- Sleep Duration and Math Achievement 1Document26 paginiSleep Duration and Math Achievement 1JB SibayanÎncă nu există evaluări

- RRLDocument17 paginiRRLCleizel Mei FlorendoÎncă nu există evaluări

- The Impact of Procrastination To Academic Performance Among Senior High School Students of Pajo NatiDocument8 paginiThe Impact of Procrastination To Academic Performance Among Senior High School Students of Pajo NatichristophermaqÎncă nu există evaluări

- Common Coping Mechanisms of Senior High School StudentsDocument5 paginiCommon Coping Mechanisms of Senior High School StudentsJimuel AntonioÎncă nu există evaluări

- Materials & MethodsDocument3 paginiMaterials & MethodsJohnpatrick DejesusÎncă nu există evaluări

- Chapter 3Document6 paginiChapter 3Ibeth Bello De CastroÎncă nu există evaluări

- Grade 12 ResearchDocument26 paginiGrade 12 ResearchNikka QuerolÎncă nu există evaluări

- Study Habits of Secondary School Students in Relation To Type of School and Type of FamilyDocument7 paginiStudy Habits of Secondary School Students in Relation To Type of School and Type of Familywillie2210Încă nu există evaluări

- Correlational Study between Facebook Intensity Usage and Social Anxiety among Senior High School StudentsDocument64 paginiCorrelational Study between Facebook Intensity Usage and Social Anxiety among Senior High School StudentsRobin SalivaÎncă nu există evaluări

- Day 2 Reporting PR 2Document5 paginiDay 2 Reporting PR 2Loujean Gudes MarÎncă nu există evaluări

- Research Foundations and FundamentalsDocument22 paginiResearch Foundations and FundamentalsClyden Jaile RamirezÎncă nu există evaluări

- Wilcoxon Matched Pairs Signed Rank TestDocument3 paginiWilcoxon Matched Pairs Signed Rank TestDawn Ilish Nicole DiezÎncă nu există evaluări

- Lab Reports Grade 12Document5 paginiLab Reports Grade 12abe scessÎncă nu există evaluări

- Application of The 3Rs Principles For Animals Used For Experiments at The Beginning of The 21st CenturyDocument7 paginiApplication of The 3Rs Principles For Animals Used For Experiments at The Beginning of The 21st CenturySteven BrownÎncă nu există evaluări

- Test of Significance For Small SamplesDocument35 paginiTest of Significance For Small SamplesArun KumarÎncă nu există evaluări

- Methods in Social ResearchDocument395 paginiMethods in Social ResearchJeremiahOmwoyo100% (1)

- Cognition, Religion, and Theology Project: GeneralDocument5 paginiCognition, Religion, and Theology Project: GeneralIgor DemićÎncă nu există evaluări

- PPTDocument1 paginăPPTawdqÎncă nu există evaluări

- Strocss 2019 ChecklistDocument4 paginiStrocss 2019 ChecklistindahÎncă nu există evaluări

- Mathematical Principles of Theoretical Physics (PDFDrive)Document518 paginiMathematical Principles of Theoretical Physics (PDFDrive)Fai Kuen Tang100% (1)

- Public PolicyDocument464 paginiPublic PolicyprabodhÎncă nu există evaluări

- Qualitative Research: Material From Groat and Wang (2013) Prepared by Neil Menjares, MSDocument33 paginiQualitative Research: Material From Groat and Wang (2013) Prepared by Neil Menjares, MSaldreinÎncă nu există evaluări

- Unit 6 Data Presentation and AnalysisDocument85 paginiUnit 6 Data Presentation and AnalysiswossenÎncă nu există evaluări

- Punit GhoshDocument4 paginiPunit Ghoshpunit ghoshÎncă nu există evaluări

- Probability Predict StataDocument2 paginiProbability Predict StataAgung SetiawanÎncă nu există evaluări

- Sources: Ceneco, Vresco, NocecoDocument1 paginăSources: Ceneco, Vresco, NocecoPhillip CellonaÎncă nu există evaluări

- Gec410 Note VDocument25 paginiGec410 Note VOsan ThorpeÎncă nu există evaluări

- RTA HeterocDocument4 paginiRTA HeterocJaime Andres Chica PÎncă nu există evaluări

- Quantum Numbers WorksheetDocument2 paginiQuantum Numbers WorksheetkishoreddiÎncă nu există evaluări

- Sbe13ch17a PPDocument48 paginiSbe13ch17a PPalifahÎncă nu există evaluări

- SSBB BrouchDocument4 paginiSSBB Brouchsribalaji22Încă nu există evaluări

- Solution Manual For Decision Analysis For Management Judgment, 4th EditionDocument1 paginăSolution Manual For Decision Analysis For Management Judgment, 4th EditionMaria Cabral0% (2)

- MODULE 1 STATISTICS AND DATA ANALYSIS FinalDocument9 paginiMODULE 1 STATISTICS AND DATA ANALYSIS FinalJa CalibosoÎncă nu există evaluări

- Saint Tonis College, IncDocument9 paginiSaint Tonis College, IncAbby MonteverdeÎncă nu există evaluări

- Research Methods: Content Analysis Field ResearchDocument30 paginiResearch Methods: Content Analysis Field ResearchBeytullah ErdoğanÎncă nu există evaluări

- Analysis of Square Lattice Designs Using R and SASDocument43 paginiAnalysis of Square Lattice Designs Using R and SASNimona FufaÎncă nu există evaluări

- Check in Activity 2Document4 paginiCheck in Activity 2Erika CadawanÎncă nu există evaluări

- APA Handbook of Behavior AnalysisDocument6 paginiAPA Handbook of Behavior AnalysisVũ Nam TrầnÎncă nu există evaluări

- 0 10, Trees : Physics, The University Hew York, New YorkDocument24 pagini0 10, Trees : Physics, The University Hew York, New YorkPrashant GokarajuÎncă nu există evaluări

- Maths Made Easy by Ashish PandeyDocument242 paginiMaths Made Easy by Ashish PandeyAshish Pandey0% (2)