Documente Academic

Documente Profesional

Documente Cultură

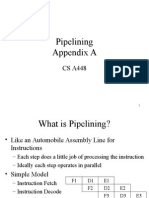

Instruction Pipelining

Încărcat de

Usman UlHaq0 evaluări0% au considerat acest document util (0 voturi)

26 vizualizări8 paginicomputer architecture

chap 03

lect 11

Drepturi de autor

© © All Rights Reserved

Formate disponibile

PPTX, PDF, TXT sau citiți online pe Scribd

Partajați acest document

Partajați sau inserați document

Vi se pare util acest document?

Este necorespunzător acest conținut?

Raportați acest documentcomputer architecture

chap 03

lect 11

Drepturi de autor:

© All Rights Reserved

Formate disponibile

Descărcați ca PPTX, PDF, TXT sau citiți online pe Scribd

0 evaluări0% au considerat acest document util (0 voturi)

26 vizualizări8 paginiInstruction Pipelining

Încărcat de

Usman UlHaqcomputer architecture

chap 03

lect 11

Drepturi de autor:

© All Rights Reserved

Formate disponibile

Descărcați ca PPTX, PDF, TXT sau citiți online pe Scribd

Sunteți pe pagina 1din 8

INSTRUCTION PIPELINING

Computer Architecture

25/9/19

Engineer Usman Ul Haq

INSTRUCTION PIPELINING

• Some CPUs break down the fetch–decode–execute cycle into smaller

steps, where some of these smaller steps can be performed in

parallel. This overlapping speeds up execution. This method, used by

all current CPUs, is known as pipelining.

• Instruction pipelining is one method used to exploit instruction-level

parallelism (ILP).

• Suppose the fetch–decode–execute cycle were broken into the

following “ministeps”:

Engineer Usman Ul Haq

Engineer Usman Ul Haq

• Each step in a computer pipeline completes a part of an instruction.

• Each of the steps is called a pipeline stage. The stages are connected to

form a pipe.

• Instructions enter at one end, progress through the various stages, and

exit at the other end. The goal is to balance the time taken by each

pipeline stage

• If the stages are not balanced in time, after awhile, faster stages will be

waiting on slower ones.

• Figure 5.5 provides an illustration of computer pipelining with

overlapping stages.

Engineer Usman Ul Haq

Engineer Usman Ul Haq

• We see from Figure 5.5 that once instruction 1 has been fetched and is in

the process of being decoded, we can start the fetch on instruction 2.

When instruction 1 is fetching operands, and instruction 2 is being

decoded, we can start the fetch on instruction 3.

• Notice that these events can occur in parallel, very much like an

automobile assembly line.

• Suppose we have a k-stage pipeline. Assume that the clock cycle time is tp

Therefore, to complete n tasks using a k-stage pipeline requires:

Engineer Usman Ul Haq

• Let’s calculate the speedup we gain using a pipeline. Without a pipeline,

the time required is ntn cycles, where tn = k × tp. Therefore, the

speedup (time without a pipeline divided by the time using a pipeline)

is:

Engineer Usman Ul Haq

• There are several conditions that result in “pipeline conflicts,” which keep

us from reaching the goal of executing one instruction per clock cycle.

These include:

• Resource conflicts

• Data dependencies

• Conditional branch statements

Engineer Usman Ul Haq

S-ar putea să vă placă și

- Practical Guides to Testing and Commissioning of Mechanical, Electrical and Plumbing (Mep) InstallationsDe la EverandPractical Guides to Testing and Commissioning of Mechanical, Electrical and Plumbing (Mep) InstallationsEvaluare: 3.5 din 5 stele3.5/5 (3)

- 7.1 Instruction PipeliningDocument5 pagini7.1 Instruction PipeliningLoshauÎncă nu există evaluări

- ILP - Appendix C PDFDocument52 paginiILP - Appendix C PDFDhananjay JahagirdarÎncă nu există evaluări

- Pipelining Pipelining Characteristics of Pipelining Clocks and Latches 5 Stages of Pipelining Hazards Loads / Stores Risc and CiscDocument12 paginiPipelining Pipelining Characteristics of Pipelining Clocks and Latches 5 Stages of Pipelining Hazards Loads / Stores Risc and CiscKelvinÎncă nu există evaluări

- c6 CaoDocument77 paginic6 CaoAh YanÎncă nu există evaluări

- Pipeline ProcessingDocument28 paginiPipeline Processinganismitaray14Încă nu există evaluări

- 4-Concept of PipeliningDocument20 pagini4-Concept of Pipeliningtabin iftakharÎncă nu există evaluări

- LECTURE 3 PipeliningDocument27 paginiLECTURE 3 PipeliningHenry KanengaÎncă nu există evaluări

- Parallel Processing Chapter - 3: Instruction Level ParallelismDocument33 paginiParallel Processing Chapter - 3: Instruction Level ParallelismGetu GeneneÎncă nu există evaluări

- Coa Module 3 Part 1Document42 paginiCoa Module 3 Part 1Ajith Bobby RajagiriÎncă nu există evaluări

- Uni1-2 PipeliningDocument12 paginiUni1-2 PipeliningAditi VermaÎncă nu există evaluări

- Chap-10: Speed and EfficiencyDocument29 paginiChap-10: Speed and EfficiencyGuru BalanÎncă nu există evaluări

- Module1 UPD W NotesDocument72 paginiModule1 UPD W NotesZhanzhi LiuÎncă nu există evaluări

- Pipe LiningDocument32 paginiPipe LiningveerendrasettyÎncă nu există evaluări

- 5 2pipeliningDocument26 pagini5 2pipeliningAncy ZachariahÎncă nu există evaluări

- ACA Unit 2,7th Sem CSEDocument13 paginiACA Unit 2,7th Sem CSEReshma BJÎncă nu există evaluări

- Pipelining and ParallelismDocument41 paginiPipelining and ParallelismPratham GuptaÎncă nu există evaluări

- Module1 UPDDocument72 paginiModule1 UPDZhanzhi LiuÎncă nu există evaluări

- Pipe LiningDocument66 paginiPipe Liningsir.erlanÎncă nu există evaluări

- Aca Module 2Document35 paginiAca Module 2Shiva prasad100% (1)

- Module 4Document12 paginiModule 4Bijay NagÎncă nu există evaluări

- AcA Assignment VIDHI KISHORDocument6 paginiAcA Assignment VIDHI KISHORVidhi KishorÎncă nu există evaluări

- Ca02 2014 PDFDocument79 paginiCa02 2014 PDFPance CvetkovskiÎncă nu există evaluări

- Pipelining - Part1Document26 paginiPipelining - Part1saraÎncă nu există evaluări

- Pipelining: CIT 595 Spring 2007Document16 paginiPipelining: CIT 595 Spring 2007kumar07xÎncă nu există evaluări

- L17 Pipelined Vs Non PipelinedDocument16 paginiL17 Pipelined Vs Non PipelinedIsaacÎncă nu există evaluări

- Parallelism in MicroprocessorDocument17 paginiParallelism in MicroprocessorTowsifÎncă nu există evaluări

- Computer Architecture: Pipelining Khiyam IftikharDocument36 paginiComputer Architecture: Pipelining Khiyam IftikharHassan AsgharÎncă nu există evaluări

- CS 4 - RTOS-Real Time Systems-August 2021Document58 paginiCS 4 - RTOS-Real Time Systems-August 2021neetika guptaÎncă nu există evaluări

- Superscaling in Computer ArchitectureDocument9 paginiSuperscaling in Computer ArchitectureC183007 Md. Nayem HossainÎncă nu există evaluări

- PerformanceDocument26 paginiPerformanceStarqueenÎncă nu există evaluări

- Pipelining: Advanced Computer ArchitectureDocument30 paginiPipelining: Advanced Computer ArchitectureShinisg Vava100% (1)

- Pipeline: A Simple Implementation of A RISC Instruction SetDocument16 paginiPipeline: A Simple Implementation of A RISC Instruction SetakhileshÎncă nu există evaluări

- Chapter 4Document56 paginiChapter 4tanksalebhoomiÎncă nu există evaluări

- Pipelining & Riscs: Pipelining Used Key Implementation Technique To Build Fast Processors. ItDocument6 paginiPipelining & Riscs: Pipelining Used Key Implementation Technique To Build Fast Processors. Itfayzee945150Încă nu există evaluări

- C F C P S (CS61063) : Tutorial 1Document13 paginiC F C P S (CS61063) : Tutorial 1brahma2deen2chaudharÎncă nu există evaluări

- PipeliningDocument12 paginiPipeliningAradhyaÎncă nu există evaluări

- Chapter 9 - Pipeline and Vector Processing Section 9.1 - Parallel ProcessingDocument10 paginiChapter 9 - Pipeline and Vector Processing Section 9.1 - Parallel Processingrajput12345Încă nu există evaluări

- Unit 2 - Session-6 To 10Document40 paginiUnit 2 - Session-6 To 10Sanjeevkumar M SnjÎncă nu există evaluări

- Pipeline Hazards. PresentationDocument20 paginiPipeline Hazards. PresentationReaderRRGHT100% (1)

- CO Mod5 SBDocument16 paginiCO Mod5 SBHemanth HemanthÎncă nu există evaluări

- Advanced Computer Architecture: Lec 9 Pipelining Dr. Noman HasanyDocument28 paginiAdvanced Computer Architecture: Lec 9 Pipelining Dr. Noman HasanyNatasha AdvaniÎncă nu există evaluări

- Pipelining: Basic and Intermediate ConceptsDocument69 paginiPipelining: Basic and Intermediate ConceptsmanibrarÎncă nu există evaluări

- 3-INSTRUCTION LEVEL PARALLELISM-12-Dec-2019Material - I - 12-Dec-2019 - ILP PDFDocument15 pagini3-INSTRUCTION LEVEL PARALLELISM-12-Dec-2019Material - I - 12-Dec-2019 - ILP PDFANTHONY NIKHIL REDDYÎncă nu există evaluări

- Advanced Topics in Computer Architecture ECE 7373Document40 paginiAdvanced Topics in Computer Architecture ECE 7373razorviperÎncă nu există evaluări

- Lecture: Pipelining BasicsDocument28 paginiLecture: Pipelining BasicsTahsin Arik TusanÎncă nu există evaluări

- William Stallings Computer Organization and Architecture 8 Edition Computer Evolution and PerformanceDocument28 paginiWilliam Stallings Computer Organization and Architecture 8 Edition Computer Evolution and PerformanceHaider Ali ButtÎncă nu există evaluări

- Circuit-Level Timing Speculation: The Razor Latch: Developed by Trevor Mudge's Group at The University of Michigan, 2003Document23 paginiCircuit-Level Timing Speculation: The Razor Latch: Developed by Trevor Mudge's Group at The University of Michigan, 2003Raghu Nandan ChilukuriÎncă nu există evaluări

- PipeLining in MicroprocessorsDocument19 paginiPipeLining in MicroprocessorsSajid JanjuaÎncă nu există evaluări

- Solution For Exam QuestionsDocument36 paginiSolution For Exam QuestionsakilantÎncă nu există evaluări

- Design of 3 Stage Pipelining Processor Using VHDLDocument22 paginiDesign of 3 Stage Pipelining Processor Using VHDLsdmdharwadÎncă nu există evaluări

- Input Unit: Memory: in Processing Element (PE) or CPU: OutputDocument24 paginiInput Unit: Memory: in Processing Element (PE) or CPU: OutputHamzah AkhtarÎncă nu există evaluări

- CPU Scheduling 2Document61 paginiCPU Scheduling 2kranthi chaitanyaÎncă nu există evaluări

- Unit 4 - P 2Document13 paginiUnit 4 - P 2Sanju SanjayÎncă nu există evaluări

- Instruction Level Support OkDocument38 paginiInstruction Level Support OkBantu AadhfÎncă nu există evaluări

- Unit-4 Pipelinie and Vector ProcessingDocument33 paginiUnit-4 Pipelinie and Vector ProcessingMandeepÎncă nu există evaluări

- CPU SchedulingDocument114 paginiCPU SchedulingvivekparasharÎncă nu există evaluări

- Operating Systems: Chapter FourDocument27 paginiOperating Systems: Chapter FourFitawu TekolaÎncă nu există evaluări

- Chapter 3Document50 paginiChapter 3The True TomÎncă nu există evaluări

- University of Sargodha: SynopsisDocument2 paginiUniversity of Sargodha: SynopsisUsman UlHaqÎncă nu există evaluări

- Routing Protocols: BSCS-5 (IAP)Document8 paginiRouting Protocols: BSCS-5 (IAP)Usman UlHaqÎncă nu există evaluări

- Digital Logic DesignDocument8 paginiDigital Logic DesignUsman UlHaqÎncă nu există evaluări

- Allied School City Campus: First Assessment (April 2019)Document1 paginăAllied School City Campus: First Assessment (April 2019)Usman UlHaqÎncă nu există evaluări

- Digital Logic Design: Usman Ul HaqDocument17 paginiDigital Logic Design: Usman Ul HaqUsman UlHaqÎncă nu există evaluări

- Energy Aware ApplicationsDocument30 paginiEnergy Aware ApplicationsUsman UlHaqÎncă nu există evaluări

- Reader College Sargodha: Exam: Pre-Uni Class: BSIT-5 Subject: HCI DateDocument1 paginăReader College Sargodha: Exam: Pre-Uni Class: BSIT-5 Subject: HCI DateUsman UlHaqÎncă nu există evaluări

- 8259 Int ControllerDocument3 pagini8259 Int ControllerUsman UlHaqÎncă nu există evaluări

- The Reader College SargodhaDocument1 paginăThe Reader College SargodhaUsman UlHaqÎncă nu există evaluări

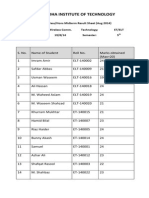

- Sargodha Institute of Technology: ProgramingDocument3 paginiSargodha Institute of Technology: ProgramingUsman UlHaqÎncă nu există evaluări

- This Is The Coding File. DSDFSD Sdfsfdsfs Fs Fs Fs F SF SF SF SF FDocument1 paginăThis Is The Coding File. DSDFSD Sdfsfdsfs Fs Fs Fs F SF SF SF SF FUsman UlHaqÎncă nu există evaluări

- Wireless SecurityDocument54 paginiWireless SecurityUsman UlHaqÎncă nu există evaluări

- Sargodha Institute of Technology: DAE Examination Technology Class Subject Code 314 (A) Time Max Marks Name Roll #Document2 paginiSargodha Institute of Technology: DAE Examination Technology Class Subject Code 314 (A) Time Max Marks Name Roll #Usman UlHaqÎncă nu există evaluări

- Roll Numbers of AssignmentDocument1 paginăRoll Numbers of AssignmentUsman UlHaqÎncă nu există evaluări

- PLC & Automation Training Short CourseDocument1 paginăPLC & Automation Training Short CourseUsman UlHaqÎncă nu există evaluări

- Sit College Sargodha: Course Applied: NameDocument1 paginăSit College Sargodha: Course Applied: NameUsman UlHaqÎncă nu există evaluări

- Exp Certificate InstrumentationDocument1 paginăExp Certificate InstrumentationUsman UlHaqÎncă nu există evaluări

- GCUF Grant of Affiliation ProformaDocument3 paginiGCUF Grant of Affiliation ProformaUsman UlHaqÎncă nu există evaluări

- Result WirelessCommDocument1 paginăResult WirelessCommUsman UlHaqÎncă nu există evaluări

- Chapter 03Document3 paginiChapter 03Usman UlHaqÎncă nu există evaluări